Hi, All.

To make experiments more convenient, I've tried to write a Dataset that can be fed to a dataloader.

However, my dataset reads the data correctly, while the dataloader distorts the images, as shown below.

The images in my dataset have shape 9000×16×16 (grayscale images).

I want to find out how the dataloader transforms the dataset into 9000×1×16×16, but I can't find the code.
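For reference, here is a minimal sketch of where the extra dimension of size 1 usually comes from. It assumes random stand-in data instead of the real USPS files, and it uses `unsqueeze(1)` to imitate the channel dimension that `transforms.ToTensor()` adds to a grayscale image; `TensorDataset` is only a placeholder for the custom `USPS` class. The dataloader itself just stacks the individual samples along a new batch dimension, so it would not be expected to change pixel values.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Stand-in for the USPS data (assumption): 9000 random grayscale 16x16 images.
images = torch.rand(9000, 16, 16)
labels = torch.zeros(9000, dtype=torch.long)

# ToTensor() turns an HxW grayscale image into a 1xHxW tensor.
# unsqueeze(1) imitates that added channel dimension: (9000, 1, 16, 16).
dataset = TensorDataset(images.unsqueeze(1), labels)

# The default collate function stacks batch_size samples along dim 0.
loader = DataLoader(dataset, batch_size=100, shuffle=False)
batch_images, batch_labels = next(iter(loader))

print(batch_images.shape)  # torch.Size([100, 1, 16, 16])

# Stacking does not alter the pixel values of the samples.
print(torch.equal(batch_images[0, 0], images[0]))  # True
```

If the pixel values already differ when a single sample is read with `trainset[0]`, the distortion more likely happens inside the dataset's `__getitem__` or the transform than in the dataloader.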

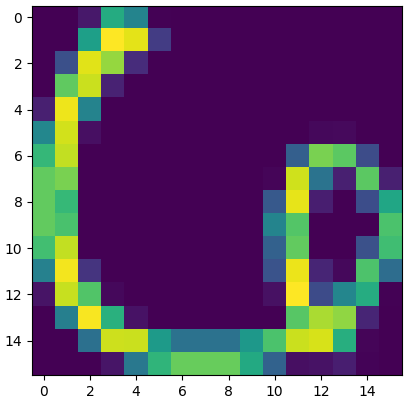

Image in my dataset:

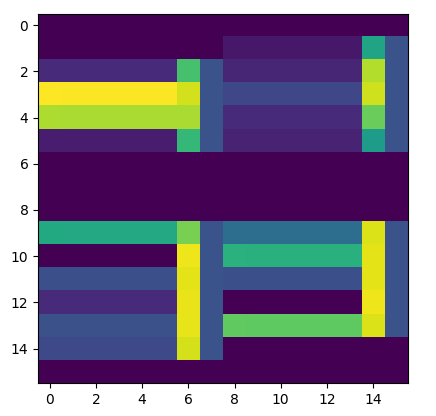

Image in dataloader:

Here is my code:

    import torch
    from torchvision import transforms

    # training set
    transform = transforms.Compose([transforms.Scale(28), transforms.ToTensor()])
    trainset = USPS(root='./data', train=True, download=False, transform=transform)

    # split data
    trainloader = torch.utils.data.DataLoader(trainset, batch_size=100, shuffle=False, num_workers=1)

    # kernel code in USPS (needs os, torch, and scipy.io imported as sio)
    def load(self):
        # process and save as torch files
        print('Processing...')

        # read the training split and reshape it to (N, 16, 16)
        data = sio.loadmat(os.path.join(self.root, 'usps_train.mat'))
        traindata = torch.from_numpy(data['data'].transpose())
        traindata = traindata.view(16, 16, -1).permute(2, 1, 0)
        trainlabel = torch.from_numpy(data['labels'])

        # read the test split and reshape it the same way
        data = sio.loadmat(os.path.join(self.root, 'usps_test.mat'))
        testdata = torch.from_numpy(data['data'].transpose())
        testdata = testdata.view(16, 16, -1).permute(2, 1, 0)
        testlabel = torch.from_numpy(data['labels'])

        training_set = (traindata, trainlabel)
        test_set = (testdata, testlabel)

        # save both splits as (data, labels) tuples
        with open(os.path.join(self.root, self.training_file), 'wb') as f:
            torch.save(training_set, f)
        with open(os.path.join(self.root, self.test_file), 'wb') as f:
            torch.save(test_set, f)

        print('Done!')