Hello everyone,

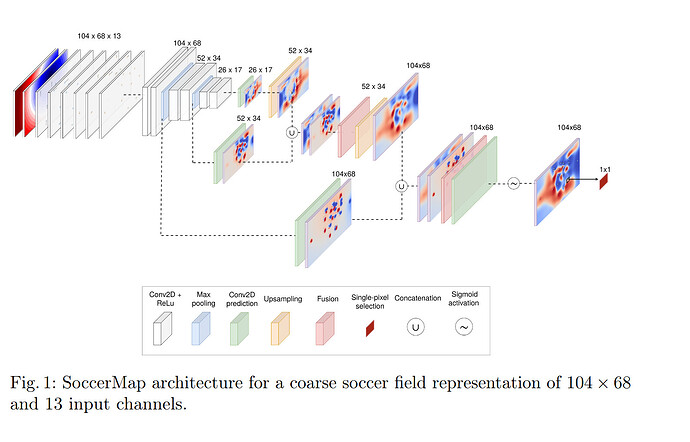

I am working on a deep learning project using PyTorch to build a SoccerMap architecture, which calculates the success probability surface of a pass in football. I saw this concept in this paper.

The model involves multiple convolutional, pooling, and upsampling layers. However, I am encountering a RuntimeError during the forward pass related to the dimensions of the concatenated tensors.

Here is the model code:

    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    from torchsummaryX import summary

    class SoccerMap(nn.Module):
        def __init__(self, input_channels=11):
            super(SoccerMap, self).__init__()

            # 1x scale layers
            self.conv1x_1 = nn.Conv2d(input_channels, 64, kernel_size=5, stride=1, padding=2)
            self.conv1x_2 = nn.Conv2d(64, 64, kernel_size=5, stride=1, padding=2)
            self.pool1 = nn.MaxPool2d(kernel_size=2, stride=2)

            # 1/2x scale layers
            self.conv1_2x_1 = nn.Conv2d(64, 128, kernel_size=5, stride=1, padding=2)
            self.conv1_2x_2 = nn.Conv2d(128, 128, kernel_size=5, stride=1, padding=2)
            self.pool2 = nn.MaxPool2d(kernel_size=2, stride=2)

            # 1/4x scale layers
            self.conv1_4x_1 = nn.Conv2d(128, 256, kernel_size=5, stride=1, padding=2)
            self.conv1_4x_2 = nn.Conv2d(256, 128, kernel_size=5, stride=1, padding=2)

            # 1x1 convolution for reducing channels
            self.conv4 = nn.Conv2d(in_channels=256, out_channels=64, kernel_size=1)

            # Upsample layers with additional convolutions
            self.upsample1 = nn.Upsample(scale_factor=2, mode='nearest')
            self.upsample_conv1_1 = nn.Conv2d(in_channels=160, out_channels=32, kernel_size=3, padding=1)
            self.upsample_conv1_2 = nn.Conv2d(in_channels=32, out_channels=32, kernel_size=3, padding=1)
            self.upsample2 = nn.Upsample(scale_factor=2, mode='nearest')
            self.upsample_conv2_1 = nn.Conv2d(in_channels=96, out_channels=32, kernel_size=3, padding=1)
            self.upsample_conv2_2 = nn.Conv2d(in_channels=32, out_channels=1, kernel_size=3, padding=1)
            self.pred_conv1 = nn.Conv2d(in_channels=128, out_channels=32, kernel_size=1)
            self.pred_conv2 = nn.Conv2d(in_channels=32, out_channels=1, kernel_size=1)

        def forward(self, x):
            # 1x scale
            x1 = F.relu(self.conv1x_1(x))
            x1 = F.relu(self.conv1x_2(x1))
            p1 = self.pool1(x1)

            # 1/2x scale
            x2 = F.relu(self.conv1_2x_1(p1))
            x2 = F.relu(self.conv1_2x_2(x2))
            p2 = self.pool2(x2)

            # 1/4x scale
            x3 = F.relu(self.conv1_4x_1(p2))
            x3 = F.relu(self.conv1_4x_2(x3))

            # Prediction at 1/4x scale
            pred1 = F.relu(self.pred_conv1(x3))
            pred1 = self.pred_conv2(pred1)  # Linear activation
            pred2 = F.relu(self.pred_conv1(x2))
            pred2 = self.pred_conv2(pred2)  # Linear activation

            # Upsample and concatenate
            u1 = self.upsample1(pred1)
            cat1 = torch.cat([u1, pred2], dim=1)
            x4 = F.relu(self.upsample_conv1_1(cat1))
            x4 = self.upsample_conv1_2(x4)  # Linear activation
            u2 = self.upsample2(x4)
            pred3 = F.relu(self.pred_conv1(x1))
            pred3 = self.pred_conv2(pred3)  # Linear activation
            cat2 = torch.cat([u2, pred3], dim=1)
            x5 = F.relu(self.upsample_conv2_1(cat2))
            x5 = self.upsample_conv2_2(x5)  # Linear activation
            pred4 = F.relu(self.pred_conv1(x5))
            pred4 = self.pred_conv2(pred4)
            return pred4

    if __name__ == "__main__":
        input_size = 11
        model = SoccerMap(input_size)
        summary(model, torch.zeros((1, input_size, 80, 120)))

Running this raises the following error:

RuntimeError: Given groups=1, weight of size [32, 160, 3, 3], expected input[1, 2, 40, 60] to have 160 channels, but got 2 channels instead
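The mismatch in this message can be reproduced by hand by following the (channels, height, width) triple through the layers of the posted forward pass. The sketch below does that in plain Python, using only the standard shape rules for `Conv2d` (stride 1), `MaxPool2d(2, 2)`, `Upsample(scale_factor=2)` and `torch.cat(dim=1)`. The helper names (`conv`, `pool`, `upsample`, `cat`, `pred_head`, `trace`) are made up for illustration and are not part of PyTorch.

```python
# Hand-trace of (channels, height, width) through the posted forward pass.
# Plain Python only; the helpers are illustrative, not PyTorch API.

def conv(shape, in_ch, out_ch, k, pad=0, name="conv"):
    """Shape after a stride-1 Conv2d; raises if the input channel count is wrong."""
    c, h, w = shape
    if c != in_ch:
        raise ValueError(f"{name}: expected {in_ch} channels, got {c}")
    return (out_ch, h + 2 * pad - k + 1, w + 2 * pad - k + 1)

def pool(shape):
    """Shape after MaxPool2d(kernel_size=2, stride=2)."""
    c, h, w = shape
    return (c, h // 2, w // 2)

def upsample(shape):
    """Shape after Upsample(scale_factor=2)."""
    c, h, w = shape
    return (c, h * 2, w * 2)

def cat(a, b):
    """Shape after torch.cat([a, b], dim=1): channels add, H and W must match."""
    if a[1:] != b[1:]:
        raise ValueError(f"spatial sizes differ: {a[1:]} vs {b[1:]}")
    return (a[0] + b[0], a[1], a[2])

def pred_head(shape, name):
    """The shared pred_conv1 -> pred_conv2 pair from the post (128 -> 32 -> 1)."""
    s = conv(shape, 128, 32, 1, name=name + ".pred_conv1")
    return conv(s, 32, 1, 1, name=name + ".pred_conv2")

def trace(input_shape=(11, 80, 120)):
    """Follow the posted forward pass up to the first concatenation."""
    x1 = conv(input_shape, 11, 64, 5, 2, "conv1x_1")
    x1 = conv(x1, 64, 64, 5, 2, "conv1x_2")
    x2 = conv(pool(x1), 64, 128, 5, 2, "conv1_2x_1")
    x2 = conv(x2, 128, 128, 5, 2, "conv1_2x_2")
    x3 = conv(pool(x2), 128, 256, 5, 2, "conv1_4x_1")
    x3 = conv(x3, 256, 128, 5, 2, "conv1_4x_2")
    pred1 = pred_head(x3, "pred1")       # 1 channel at 1/4 scale
    pred2 = pred_head(x2, "pred2")       # 1 channel at 1/2 scale
    return cat(upsample(pred1), pred2)   # 1 + 1 = 2 channels

cat1 = trace()
print(cat1)  # (2, 40, 60)

try:
    conv(cat1, 160, 32, 3, 1, "upsample_conv1_1")
except ValueError as err:
    print(err)  # upsample_conv1_1: expected 160 channels, got 2
```

Both prediction branches end in `pred_conv2`, which outputs a single channel, so the concatenation has 2 channels, not the 160 that `upsample_conv1_1` was declared with. The same bookkeeping shows two further mismatches later in the forward pass: `pred_conv1` expects 128 channels, but `x1` has 64 and `x5` has 1.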

I suspect the issue might be related to the channel dimensions during concatenation, but I am not entirely sure how to resolve it. Any guidance or suggestions would be greatly appreciated.
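One possible way to make the channel counts consistent is sketched below. It assumes each scale gets its own prediction head (because the feature maps have 64, 128 and 128 channels, one shared `pred_conv1` cannot serve all three), that each upsampling block maps 1 channel to 1 channel, and that each 2-channel concatenation is merged by a 1x1 convolution. This is only my reading of the intended design, not a verified reimplementation of the paper. The names `PredHead`, `UpBlock`, `conv_pair` and `SoccerMapSketch` are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def conv_pair(c_in, c_mid, c_out):
    """Two 5x5 convs with ReLU, same channel widths as the feature layers in the post."""
    return nn.Sequential(
        nn.Conv2d(c_in, c_mid, kernel_size=5, padding=2), nn.ReLU(),
        nn.Conv2d(c_mid, c_out, kernel_size=5, padding=2), nn.ReLU(),
    )


class PredHead(nn.Module):
    """1x1 conv -> ReLU -> 1x1 conv, giving a single-channel map (hypothetical helper)."""
    def __init__(self, in_channels):
        super().__init__()
        self.conv1 = nn.Conv2d(in_channels, 32, kernel_size=1)
        self.conv2 = nn.Conv2d(32, 1, kernel_size=1)

    def forward(self, x):
        return self.conv2(F.relu(self.conv1(x)))  # linear output


class UpBlock(nn.Module):
    """Nearest 2x upsampling, then 3x3 convs with 1 -> 32 -> 1 channels (assumed widths)."""
    def __init__(self):
        super().__init__()
        self.up = nn.Upsample(scale_factor=2, mode="nearest")
        self.conv1 = nn.Conv2d(1, 32, kernel_size=3, padding=1)
        self.conv2 = nn.Conv2d(32, 1, kernel_size=3, padding=1)

    def forward(self, x):
        return self.conv2(F.relu(self.conv1(self.up(x))))


class SoccerMapSketch(nn.Module):
    def __init__(self, input_channels=11):
        super().__init__()
        self.pool = nn.MaxPool2d(kernel_size=2, stride=2)
        self.feat1 = conv_pair(input_channels, 64, 64)   # 1x scale
        self.feat2 = conv_pair(64, 128, 128)             # 1/2x scale
        self.feat4 = conv_pair(128, 256, 128)            # 1/4x scale
        # One head per scale, sized to that scale's channel count.
        self.head1 = PredHead(64)
        self.head2 = PredHead(128)
        self.head4 = PredHead(128)
        self.up4to2 = UpBlock()
        self.up2to1 = UpBlock()
        # Each concatenation has exactly 2 channels (1 upsampled + 1 local).
        self.fuse2 = nn.Conv2d(2, 1, kernel_size=1)
        self.fuse1 = nn.Conv2d(2, 1, kernel_size=1)

    def forward(self, x):
        x1 = self.feat1(x)
        x2 = self.feat2(self.pool(x1))
        x4 = self.feat4(self.pool(x2))
        m2 = self.fuse2(torch.cat([self.up4to2(self.head4(x4)), self.head2(x2)], dim=1))
        m1 = self.fuse1(torch.cat([self.up2to1(m2), self.head1(x1)], dim=1))
        return m1  # unnormalized map at the input resolution


model = SoccerMapSketch(11)
out = model(torch.zeros(1, 11, 80, 120))
print(out.shape)  # torch.Size([1, 1, 80, 120])
```

The output here is an unbounded map, so a sigmoid would still be needed to read it as a probability. The input height and width must be divisible by 4 for the upsampled maps to line up with the higher-resolution ones.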

Also, the attached image shows the model architecture that I want to implement.

Thank you in advance for your help!