We are using Visual Studio 2019 to load, in C++, a model that was trained in PyTorch and converted to TorchScript. The setup is CUDA 11.3 and LibTorch 1.11, and the task is image classification. The input is a black-and-white (single-channel) image of size 224x224, so the input tensor shape is {1, 1, 224, 224}.

Here is the current code. As an example, we are currently passing a dummy tensor of ones instead of a real image.

    #include <torch/torch.h>
    #include <torch/script.h>
    #include <opencv2/opencv.hpp>

    #include <iostream>
    #include <string>
    #include <vector>

    int main() {
        // Load the TorchScript model
        std::string model_path = "model_weights_c++.pt";
        torch::jit::script::Module module;
        try {
            module = torch::jit::load(model_path);
        }
        catch (const c10::Error& e) {
            std::cerr << "Error loading the model: " << e.what() << std::endl;
            return -1;
        }

        // Set the device to GPU if available, otherwise stay on the CPU
        torch::Device device(torch::kCPU);
        if (torch::cuda::is_available()) {
            device = torch::Device(torch::kCUDA);
        }

        // Create a vector of inputs.
        // torch::ones() creates the dummy tensor on the CPU by default.
        std::vector<torch::jit::IValue> inputs;
        inputs.push_back(torch::ones({ 1, 1, 224, 224 }));

        // Move the model to the selected device
        module.to(device);

        // Execute the model and turn its output into a tensor.
        at::Tensor output = module.forward(inputs).toTensor().to(device);
        std::cout << output.slice(/*dim=*/1, /*start=*/0, /*end=*/5) << '\n';

        return 0;
    }

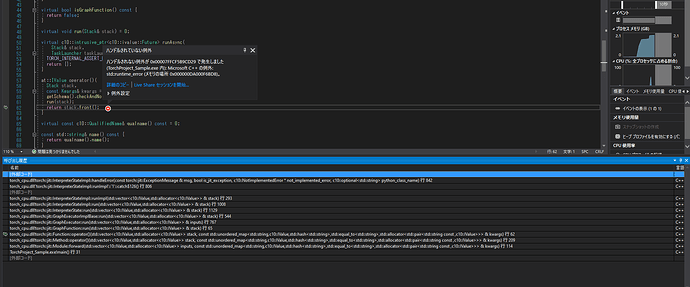

The build succeeds, but while module.forward() is executing, the program stops with an unhandled exception at the line [return stack.front()] in the LibTorch code shown below.

What is the reason? Please help.

    at::IValue operator()(
        Stack stack,
        const Kwargs& kwargs = Kwargs()) {
        getSchema().checkAndNormalizeInputs(stack, kwargs);
        run(stack);
        return stack.front();   // <------------- here
    }
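
Since the debugger only reports an unhandled exception, one thing we could try is wrapping the forward() call in its own try/catch, the same way the model load is wrapped, so that the exception message is printed. Below is a minimal sketch of that pattern using only the standard library. Note that fake_forward() is a hypothetical stand-in for module.forward(inputs) and its error text is invented for illustration; it is not a real LibTorch message. Catching const std::exception& should also cover c10::Error, which derives from std::exception.

```cpp
#include <exception>
#include <stdexcept>
#include <string>

// Hypothetical stand-in for module.forward(inputs). It always throws so that
// the handler below has something to catch; the message text is invented.
void fake_forward() {
    throw std::runtime_error("simulated failure inside forward()");
}

// Runs the call inside try/catch and returns the exception message,
// or an empty string if nothing was thrown.
std::string run_and_capture_error() {
    try {
        fake_forward();
    }
    catch (const std::exception& e) {
        return e.what();
    }
    return "";
}
```

In the real program, the body of the try block would be the module.forward(inputs) line, and the returned message could be written to std::cerr before returning -1 from main(), as is already done for the load error.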

The call history is shown in the following image.