I am trying to compute the gradient of y_hat with respect to x (y_hat is the sum of the gradients of the model output with respect to x), but I get the error: "One of the differentiated Tensors appears to not have been used in the graph." This is the code:

import torch
import torch.nn as nn
import torch.nn.functional as F

class Model(nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        # 3x3 weight for the first layer, length-3 weight for the second
        self.weight1 = torch.nn.Parameter(torch.tensor([[.2, .5, .9], [1.0, .3, .5], [.3, .2, .7]]))
        self.weight2 = torch.nn.Parameter(torch.tensor([2.0, 1.0, .4]))

    def forward(self, x):
        # Two stacked linear maps with no activation in between
        out = F.linear(x, self.weight1.T)
        out = F.linear(out, self.weight2.T)
        return out

model = Model()
x = torch.tensor([[0.1, 0.7, 0.2]])
x = x.requires_grad_()
output = model(x)

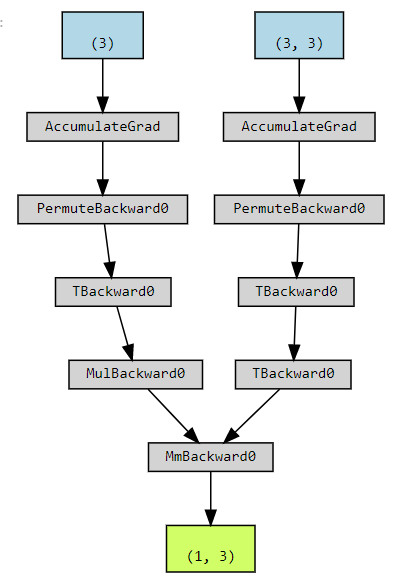

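# First-order gradient of the output w.r.t. x, kept in the graph and summed
y_hat = torch.sum(torch.autograd.grad(output, x, create_graph=True)[0])
# Second-order gradient; this is the call that raises the error
torch.autograd.grad(y_hat, x)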

I think x should be in the computational graph, so I don't understand why I get this error. Any thoughts would be appreciated!
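One possible explanation, offered as a sketch rather than a definitive diagnosis: the model is two linear maps with no activation between them, so the gradient of the output with respect to x depends only on the weights and not on x. In that case y_hat is not a function of x in the graph, which would match the error. The snippet below rewrites the same computation with plain tensors (`W1`, `w2`, `linear_model`, and `nonlinear_model` are illustrative names, and the `tanh` variant is a hypothetical change that is not part of the original model) and compares the two cases using `allow_unused=True`.

```python
import torch

# Same values as weight1 / weight2 above, as plain tensors that require grad
W1 = torch.tensor([[.2, .5, .9], [1.0, .3, .5], [.3, .2, .7]], requires_grad=True)
w2 = torch.tensor([2.0, 1.0, .4], requires_grad=True)

def linear_model(x):
    # Equivalent to F.linear(F.linear(x, W1.T), w2): linear in x
    return (x @ W1) @ w2

def nonlinear_model(x):
    # Hypothetical variant: a tanh between the layers makes the gradient depend on x
    return torch.tanh(x @ W1) @ w2

x = torch.tensor([[0.1, 0.7, 0.2]], requires_grad=True)

# Linear case: the first gradient equals W1 @ w2, a function of the weights only
g_lin = torch.autograd.grad(linear_model(x), x, create_graph=True)[0]
# allow_unused=True returns None for x instead of raising the RuntimeError
second_lin = torch.autograd.grad(g_lin.sum(), x, allow_unused=True)[0]

# Nonlinear case: the first gradient depends on x, so a second gradient exists
g_non = torch.autograd.grad(nonlinear_model(x), x, create_graph=True)[0]
second_non = torch.autograd.grad(g_non.sum(), x)[0]

print(second_lin)        # None: y_hat does not depend on x
print(second_non.shape)  # same shape as x
```

If this reading is right, the second derivative with respect to x is identically zero for the original model, so either `allow_unused=True` (treating `None` as zero) or a model that is nonlinear in x would avoid the error.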