This is my NN:

    class torchNET(torch.nn.Module):
        def __init__(self, D_in, H1, H2, H3, D_out):
            """
            In the constructor we instantiate four nn.Linear modules and assign them as
            member variables.
            """
            super(torchNET, self).__init__()
            self.linear1 = torch.nn.Linear(D_in, H1)
            self.linear2 = torch.nn.Linear(H1, H2)
            self.linear3 = torch.nn.Linear(H2, H3)
            self.linear4 = torch.nn.Linear(H3, D_out)

        def forward(self, x):
            """
            In the forward function we accept a Tensor of input data and we must return
            a Tensor of output data. We can use Modules defined in the constructor as
            well as arbitrary operators on Tensors.
            """
            r1 = torch.nn.ReLU()
            t1 = torch.nn.Tanh()
            g1 = torch.nn.GLU
            m1 = torch.nn.Dropout(p=0.1)
            m2 = torch.nn.Dropout(p=0.1)
            m3 = torch.nn.Dropout(p=0.1)
            h1 = m1(t1(self.linear1(x)))#.clamp(min=0)))
            h2 = m2(t1(self.linear2(h1)))
            h3 = m3(t1(self.linear3(h2)))
            y_pred = self.linear4(h3)
            return y_pred
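
A side note on the dropout layers above, offered as a sketch rather than a diagnosis: modules constructed inside `forward` are not registered as submodules, so `model.eval()` cannot switch them off, and a freshly created `torch.nn.Dropout` starts in training mode. The variant below registers one dropout layer in `__init__` instead. The class name `DropoutInInit`, the layer sizes, and the random input are hypothetical and chosen only for illustration.

```python
import torch


class DropoutInInit(torch.nn.Module):
    """Hypothetical two-layer net whose dropout layer is a registered submodule."""

    def __init__(self, d_in, hidden, d_out):
        super().__init__()
        self.linear1 = torch.nn.Linear(d_in, hidden)
        self.linear2 = torch.nn.Linear(hidden, d_out)
        # Assigned as an attribute, so model.train() / model.eval() reach it.
        self.drop = torch.nn.Dropout(p=0.1)

    def forward(self, x):
        return self.linear2(self.drop(torch.tanh(self.linear1(x))))


torch.manual_seed(0)
model = DropoutInInit(32, 16, 1)  # sizes are assumptions for this sketch
x = torch.randn(8, 32)

model.eval()  # turns the registered dropout layer off
with torch.no_grad():  # no gradients are needed for evaluation
    first = model(x)
    second = model(x)
eval_is_deterministic = torch.equal(first, second)

model.train()  # turn dropout back on before the next optimizer step
```

With this layout, two evaluation passes over the same input give identical outputs, and calling `model.train()` again restores dropout for the next training step.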

This is my training and evaluation loop:

    losss_tn = []
    losss_tt = []
    losss_vl = []

    model = torchNET(32, H1, H2, H3, D_out)
    criterion = torch.nn.MSELoss(reduction='sum')
    optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)

    # print("It has started!")
    # print("Epoch Training Testing Validation")
    # print("--------------------------------------------------------")

    s = np.arange(10,15,1)
    print("It has started!")

    for num in s:
        print(num)
        print("Epoch Training Testing Validation")
        print("--------------------------------------------------------")

        #for 60 20 20 splitting
        X_train, X_test, y_train, y_test = train_test_split(x, y, test_size=0.2, random_state=num)
        X_train, X_val, y_train, y_val = train_test_split(X_train, y_train, test_size=0.25, random_state=num)

        for t in range(5000):
            optimizer.zero_grad()

            # Forward pass: Compute predicted y by passing x to the model
            y_pred = model(X_train)
            r2 = r2_score(y_train.detach().numpy(),y_pred.detach().numpy())
            loss_tn = criterion(y_pred, y_train)
            losss_tn.append(loss_tn.item())
            loss_tn.backward()
            optimizer.step()

            #setting model to prediction/evaluation mode
            mod_chk = model.eval()

            #making predictions on the test dataset 20%
            y_pred_tt = mod_chk(X_test)
            r2_test = r2_score(y_test.detach().numpy(),y_pred_tt.detach().numpy())
            loss_tt = criterion(y_pred_tt, y_test)
            losss_tt.append(loss_tt.item())

            #making predictions on the validation dataset 20%
            y_pred_vl = mod_chk(X_val)
            r2_vali = r2_score(y_val.detach().numpy(),y_pred_vl.detach().numpy())
            loss_vl = criterion(y_pred_vl, y_val)
            losss_vl.append(loss_vl.item())

            if t % 1000 == 999:
                # print("Epoch = ",t)
                # print("Epoch Losses: Training Testing Validation")
                # print("--------------------------------------------------------")
                if t==999:
                    print("%d Losses: %0.3f %0.3f %0.3f "%(t,loss_tn.item(),loss_tt.item(),loss_vl.item()))
                    print(" R2_sc : %0.3f %0.3f %0.3f "%(r2,r2_test,r2_vali))
                else:
                    print("%d Losses: %0.3f %0.3f %0.3f "%(t,loss_tn.item(),loss_tt.item(),loss_vl.item()))
                    print(" R2_sc : %0.3f %0.3f %0.3f "%(r2,r2_test,r2_vali))

            # Zero gradients, perform a backward pass, and update the weights.
            # optimizer.zero_grad()
            # loss_tn.backward()
            # loss_tt.backward()
            # loss_vl.backward()
            # optimizer.step()

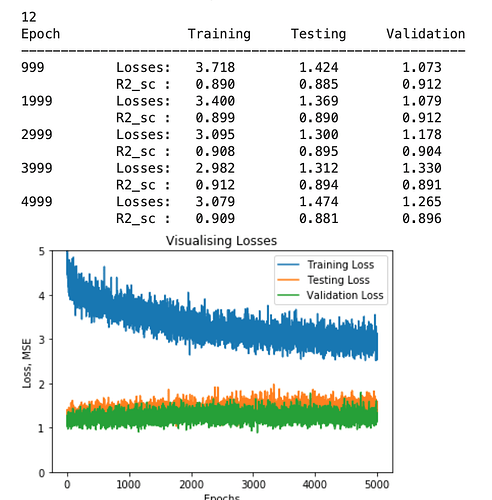

    plt.plot(losss_tn,label='Training Loss')
    plt.plot(losss_tt,label='Testing Loss')
    plt.plot(losss_vl,label='Validation Loss')
    plt.ylim([0,5])
    plt.xlabel('Epochs')
    plt.ylabel('Loss, MSE')
    plt.title('Visualising Losses')
    plt.legend(loc='upper right')
    plt.show()

    losss_tn = []
    losss_tt = []
    losss_vl = []

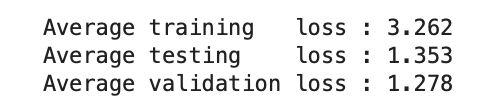

This is a snippet of the output:

My issue is that my training loss is higher than both my testing and validation losses. Please let me know where I am making a mistake.
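
One factor that may be worth ruling out, sketched here under stated assumptions rather than as a confirmed cause: `torch.nn.MSELoss(reduction='sum')` adds up the squared errors over every sample, so a 60% training split can report roughly three times the loss of a 20% split even when the per-sample error is identical. The plain-Python sketch below mimics this with hypothetical data where every prediction is off by exactly 0.1. The helper names `sse` and `mse` and the data are made up for illustration.

```python
def sse(pred, target):
    """Sum of squared errors, the quantity a 'sum' reduction reports."""
    return sum((p - t) ** 2 for p, t in zip(pred, target))


def mse(pred, target):
    """Mean squared error, comparable across sets of different sizes."""
    return sse(pred, target) / len(target)


# Hypothetical data: 1000 targets, every prediction off by exactly 0.1.
targets = [i / 1000 for i in range(1000)]
preds = [t + 0.1 for t in targets]

# A 60/20/20 split by position, standing in for train/test/validation.
splits = {
    "train": slice(0, 600),
    "test": slice(600, 800),
    "val": slice(800, 1000),
}

for name, idx in splits.items():
    total = sse(preds[idx], targets[idx])
    mean = mse(preds[idx], targets[idx])
    print(f"{name:5s} sum={total:.3f} mean={mean:.5f}")
```

In this sketch the summed error of the training split is about three times that of the other two, while the mean is the same for all three. In PyTorch, `reduction='mean'` (the default) reports the per-element average, which makes losses on differently sized splits directly comparable.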