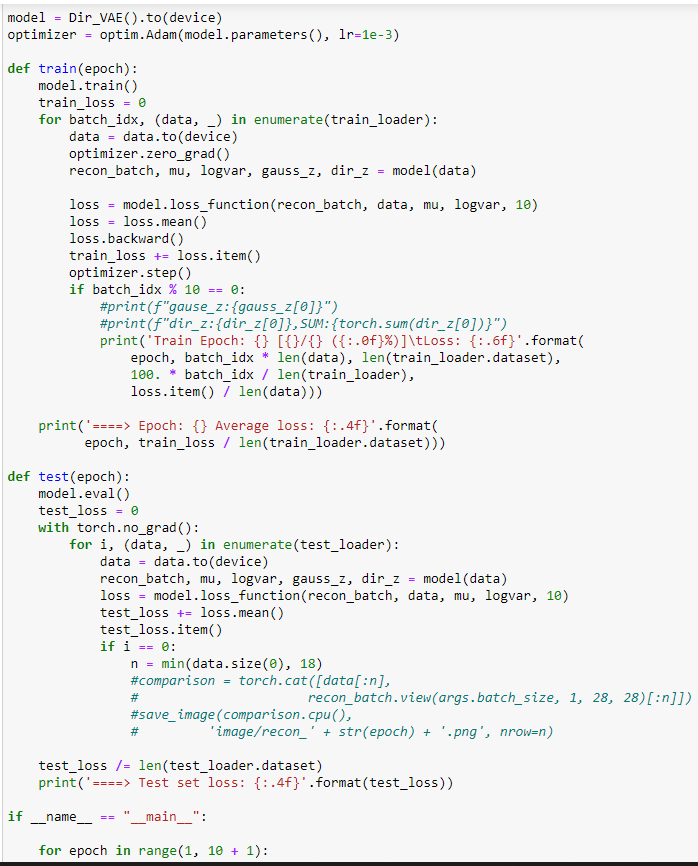

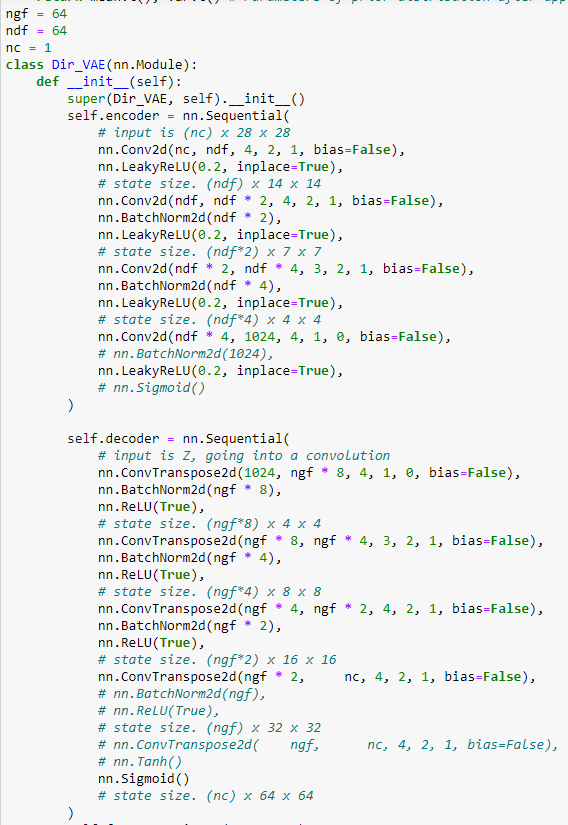

I got this error from the code above. Kindly assist: Expected 4-dimensional input for 4-dimensional weight [64, 1, 4, 4], but got 3-dimensional input of size [3, 256, 256] instead

Your input seems to have 3 dimensions.

If you’re using nn.Conv2d, the input should have 4 dimensions: [batch, channels, height, width].

Try adding a batch dimension before running model inference:

data = data.unsqueeze(0)
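A minimal sketch of what that line does to the shape. The random tensor here is only a stand-in for the real image, which is not shown in the thread, and `batched` is a name chosen for illustration:

```python
import torch

# Placeholder for a single RGB image with shape [channels, height, width]
data = torch.rand(3, 256, 256)

# unsqueeze(0) inserts a new dimension of size 1 at position 0 (the batch dimension)
batched = data.unsqueeze(0)

print(data.shape)     # torch.Size([3, 256, 256])
print(batched.shape)  # torch.Size([1, 3, 256, 256])
```

The values are unchanged; only a leading batch dimension of size 1 is added.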

Thanks for the assistance. After adding the above line, I got the following error:

RuntimeError: Given groups=1, weight of size [64, 1, 4, 4], expected input[1, 3, 256, 256] to have 1 channels, but got 3 channels instead

The network is designed for grayscale images, which have 1 channel, while an RGB image has 3 channels. The weight size [64, 1, 4, 4] shows that the first convolution expects 1 input channel.

In short, change the number of input channels of the first convolution by setting

nc=3
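As a rough sketch of the effect, assuming the first layer is built from `nc` as in many DCGAN-style models. The original network is only visible in the screenshots, so `ndf`, `first_conv`, and the stride and padding values below are assumptions for illustration; only the 64 output channels and the 4x4 kernel come from the error message:

```python
import torch
import torch.nn as nn

nc = 3    # input channels: 3 for RGB, 1 for grayscale
ndf = 64  # output channels of the first convolution (from weight size [64, 1, 4, 4])

# stride=2 and padding=1 are assumed values, not taken from the original code
first_conv = nn.Conv2d(nc, ndf, kernel_size=4, stride=2, padding=1, bias=False)

data = torch.rand(1, 3, 256, 256)  # placeholder batch holding one RGB image
out = first_conv(data)

print(first_conv.weight.shape)  # torch.Size([64, 3, 4, 4])
print(out.shape)                # torch.Size([1, 64, 128, 128])
```

With `nc=3` the weight has 3 input channels, so it matches the [1, 3, 256, 256] input.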

Thanks a lot, that solved the issue.

Please mark the reply as the solution to let others know this is solved.