Hi,

While using libtorch 1.7.1 to resize a feature map, I found that F::interpolate produces a result that differs from PyTorch 1.7.1.

Here is my libtorch code.

cv::Mat image = cv::imread("/home/lll/Pictures/test.jpg");

torch::Tensor image_tensor = torch::from_blob(image.data, {image.rows, image.cols, 3}, torch::kByte);

image_tensor = image_tensor.permute({2, 0, 1}).toType(torch::kFloat).div_(255);

image_tensor.sub_(0.5).div_(0.5);

image_tensor = image_tensor.unsqueeze(0);

image_tensor = image_tensor.to(torch::kCUDA);

namespace F = torch::nn::functional;

image_tensor = F::interpolate(

image_tensor,

F::InterpolateFuncOptions()

.mode(torch::kBilinear)

.size(std::vector<int64_t>({256, 256}))

.align_corners(true)

);

image_tensor = image_tensor.mul(0.5).add(0.5).mul(255);

image_tensor = image_tensor.squeeze(0).permute({1, 2, 0}).toType(torch::kByte).to(torch::kCPU);

cv::Mat test_mat(256, 256, CV_8UC3);

// Copy numel() elements of element_size() bytes each (1 byte for kByte).
std::memcpy((void *) test_mat.data, image_tensor.data_ptr(), image_tensor.element_size() * image_tensor.numel());

cv::imshow("test", test_mat);

cv::waitKey(0);

Here is my PyTorch code.

import cv2

import torch

import torch.nn.functional as F

from torchvision import transforms

_transforms = transforms.Compose([

transforms.Normalize((0.5, 0.5, 0.5), (128 / 255., 128 / 255., 128 / 255.))])

image = cv2.imread("/home/lll/Pictures/test.jpg")

image = torch.from_numpy(image)

image = image.permute(2, 0, 1)

image = image.unsqueeze(0)

image = image.float()

image = _transforms(image)

image = F.interpolate(image, size=(256, 256), mode='bilinear', align_corners=True)

image = image.squeeze(0).permute(1, 2, 0)

image = image * 0.5 + 0.5

image = image.byte()

cv2.imshow("test", image.numpy())

cv2.waitKey(0)

I am using OpenCV to visualize the results.

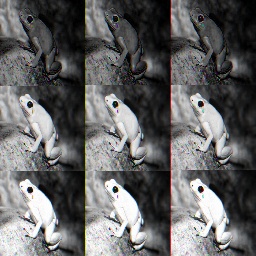

This is the original image.

And this is the wrong result. It seems to split the channels and merge them into a single image.

This is the correct result, which is what I want.

Strangely enough, when I use libtorch 1.4.0 with the same code, I get the correct result.
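
The "split channels" symptom is what typically appears when a planar (CHW) buffer is read as if it were interleaved (HWC). One possible cause here, stated as an assumption and not verified against libtorch 1.7.1, is that the tensor returned by `permute({1, 2, 0})` is not contiguous, so `data_ptr()` exposes memory in a different order than the logical shape suggests. The stdlib-only sketch below (the helper name `planar_to_interleaved` is made up for illustration) shows the layout difference that `std::memcpy` would ignore:

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: copy a planar (CHW) byte buffer into an
// interleaved (HWC) buffer, the layout cv::Mat uses for CV_8UC3.
std::vector<std::uint8_t> planar_to_interleaved(const std::vector<std::uint8_t>& chw,
                                                std::size_t channels,
                                                std::size_t height,
                                                std::size_t width) {
    std::vector<std::uint8_t> hwc(chw.size());
    for (std::size_t c = 0; c < channels; ++c) {
        for (std::size_t y = 0; y < height; ++y) {
            for (std::size_t x = 0; x < width; ++x) {
                // CHW index: whole channel planes are stored one after another.
                const std::size_t src = (c * height + y) * width + x;
                // HWC index: the channels of one pixel are stored next to each other.
                const std::size_t dst = (y * width + x) * channels + c;
                hwc[dst] = chw[src];
            }
        }
    }
    return hwc;
}
```

If this is the cause, calling `.contiguous()` on the tensor after `permute` and before `std::memcpy` should make the memory order match the HWC shape that `cv::Mat` expects.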

I would appreciate any help.