Right, I should clarify.

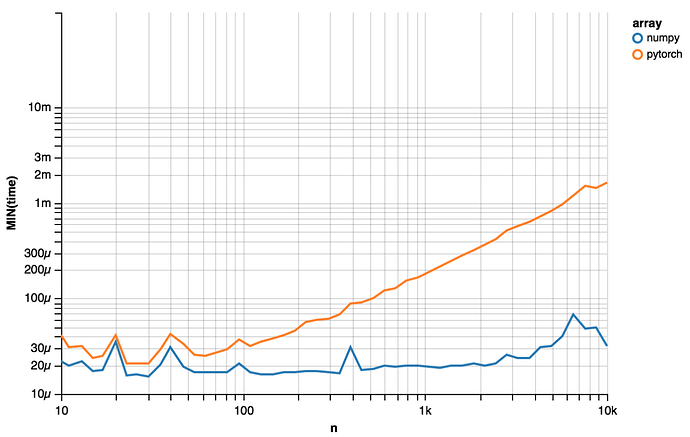

I’m comparing against NumPy serialization. The picture above shows how long `pickle.dumps` takes to serialize NumPy arrays and PyTorch tensors on a 2015 MacBook Pro.

The core of my code was

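    import pickle
    import time

    import numpy as np
    import torch

    def stat(x, serialize=pickle.dumps):
        # Time a single serialization call and record the payload size.
        start = time.time()
        msg = serialize(x)
        return {'time': time.time() - start, 'bytes': len(msg)}

    # ... other functions, for-loops, etc
    x = np.random.randn(n).astype('float32')
    y = torch.Tensor(x)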

This is with `torch.__version__ == '0.3.0.post4'`.
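
For anyone who wants to reproduce this, here is a minimal sketch of what the surrounding loop could look like. It is not my exact benchmark script. The array sizes, the `repeats` parameter, and the `compare` helper are made up for illustration, and it swaps in `time.perf_counter` with a best-of-several measurement and `torch.from_numpy` in place of what I used above.

```python
import pickle
import time

import numpy as np

try:
    import torch  # optional: the PyTorch rows are skipped if it is not installed
except ImportError:
    torch = None


def stat(x, serialize=pickle.dumps, repeats=5):
    """Return the best-of-`repeats` serialization time and the payload size."""
    best = float('inf')
    for _ in range(repeats):
        start = time.perf_counter()
        msg = serialize(x)
        best = min(best, time.perf_counter() - start)
    return {'time': best, 'bytes': len(msg)}


def compare(sizes=(10**3, 10**4, 10**5)):
    """Time pickle.dumps on float32 arrays/tensors of each length in `sizes`.

    `sizes` is a hypothetical choice, not the range used for the plot.
    """
    rows = []
    for n in sizes:
        x = np.random.randn(n).astype('float32')
        rows.append({'library': 'numpy', 'n': n, **stat(x)})
        if torch is not None:
            # from_numpy shares memory with x, so both rows hold the same data
            y = torch.from_numpy(x)
            rows.append({'library': 'torch', 'n': n, **stat(y)})
    return rows


if __name__ == '__main__':
    for row in compare():
        print(row)
```

Taking the minimum over a few repeats is only there to reduce noise from a single `time.time()` measurement. The numbers will of course differ across machines and PyTorch versions.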