Hi!

I have trained a Faster R-CNN model on the MoNuSeg dataset (20k unique cells from 30 unique images). I converted the cell annotations to bounding boxes by taking the min/max coordinates of each cell.
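To make the min/max step concrete, here is a minimal sketch of the conversion. It assumes each cell is available as a list of `(x, y)` points (polygon vertices or mask pixel coordinates); the name `points_to_box` is hypothetical and not taken from my actual code.

```python
def points_to_box(points):
    """Return [xmin, ymin, xmax, ymax] enclosing a list of (x, y) points.

    Hypothetical helper: assumes `points` holds the outline or pixel
    coordinates of a single cell.
    """
    if not points:
        raise ValueError("cannot build a box from an empty point list")
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    # The box corners are simply the coordinate-wise extremes
    return [min(xs), min(ys), max(xs), max(ys)]


print(points_to_box([(3, 7), (5, 2), (4, 9)]))  # [3, 2, 5, 9]
```

The `[xmin, ymin, xmax, ymax]` order is the one torchvision's detection models expect for `boxes` in the targets. A cell that collapses to a single row or column of points would give a zero-width or zero-height box, so such boxes probably need to be filtered out before training.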

This is how I create and train the model:

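```python
import torchvision as tv


# Method of the training class (class definition not shown)
def create_model(self, pretrained=False, num_classes=2):
    """Creates a Faster R-CNN ResNet-50 FPN model.

    Args:
        pretrained (bool): Whether to use COCO-pretrained weights
        num_classes (int): Number of output classes (including background)
    """
    return tv.models.detection.fasterrcnn_resnet50_fpn(
        pretrained=pretrained,
        num_classes=num_classes,
        pretrained_backbone=True
    )
```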

<more code>

```python
import time

import torch
from torch.utils.data import DataLoader


# Method of the training class; CellDataset and collate are defined elsewhere
def train(self, pretrained, learning_rate):
    # Load data
    dataset = CellDataset()
    dataloader = DataLoader(
        dataset=dataset,
        batch_size=self.BATCH_SIZE,
        shuffle=True,
        pin_memory=True,
        num_workers=self.NUM_WORKERS,
        collate_fn=collate
    )

    # Create model
    model = self.create_model(
        pretrained=pretrained
    )
    model = model.to(self.device)
    model.train()

    # Pass parameters which need optimizing to the optimizer
    params = [p for p in model.parameters() if p.requires_grad]
    optimizer = torch.optim.SGD(
        params, lr=learning_rate, momentum=.9, weight_decay=5e-4)

    start = time.time()

    # Main train loop
    for epoch in range(self.epochs):
        # Reset loss
        epoch_loss = .0

        # Iterate over the dataloader
        for img, targets in dataloader:
            img = [item.to(self.device) for item in img]
            for target in targets:
                for key in target:
                    target[key] = target[key].to(self.device)

            # Forward pass (in train mode the model returns a dict of losses)
            output = model(img, list(targets))

            # Sum losses of the output
            # Contains 4 types of loss
            #   loss_classifier
            #   loss_box_reg
            #   loss_objectness
            #   loss_rpn_box_reg
            losses = sum(output.values())

            # Backpropagate
            optimizer.zero_grad()
            losses.backward()
            optimizer.step()

            epoch_loss += losses.item()
```

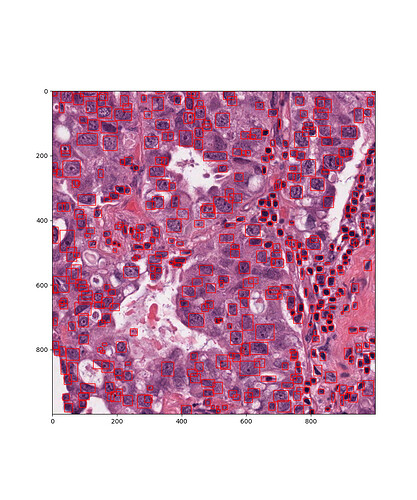

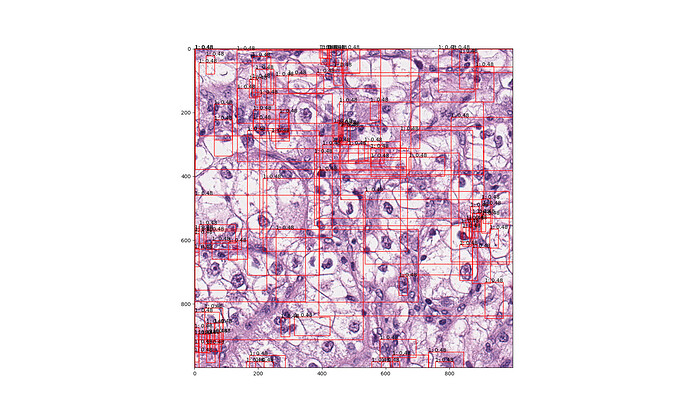

Result on an unrelated image from TCGA.

But the results all look like complete garbage: the prediction is always exactly 100 boxes (which is odd), and the scores are nearly identical for all boxes. I would have expected at least somewhat human-readable results, with a varying number of detections and distinct scores. How can I increase the maximum number of detections?
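For what it's worth, the fixed count of 100 matches the default value of the `box_detections_per_img` argument of torchvision's `FasterRCNN`, and `fasterrcnn_resnet50_fpn` forwards extra keyword arguments to that class, so the cap is presumably configurable there. Below is a minimal sketch under that assumption. The helper names `detection_kwargs` and `create_model_with_cap` and the value 500 are hypothetical, and the `pretrained=` style mirrors my code above (newer torchvision releases use `weights=` instead).

```python
def detection_kwargs(max_detections=500, score_thresh=0.05):
    """Build post-processing keyword arguments for torchvision's FasterRCNN.

    Hypothetical helper; 500 is an arbitrary illustrative value.
    """
    if max_detections < 1:
        raise ValueError("max_detections must be at least 1")
    return {
        "box_detections_per_img": max_detections,  # torchvision default: 100
        "box_score_thresh": score_thresh,          # torchvision default: 0.05
    }


def create_model_with_cap(num_classes=2, **kwargs):
    """Create the detector with a raised per-image detection cap."""
    # Imported lazily so detection_kwargs works without torchvision installed
    import torchvision as tv

    return tv.models.detection.fasterrcnn_resnet50_fpn(
        pretrained=False,
        num_classes=num_classes,
        pretrained_backbone=True,
        **detection_kwargs(**kwargs),
    )
```

Calling `create_model_with_cap(max_detections=500)` should then allow up to 500 boxes per image at inference time. Raising `score_thresh` is the complementary knob: with the low default of 0.05, an undertrained model can easily fill all available slots with low-confidence boxes.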