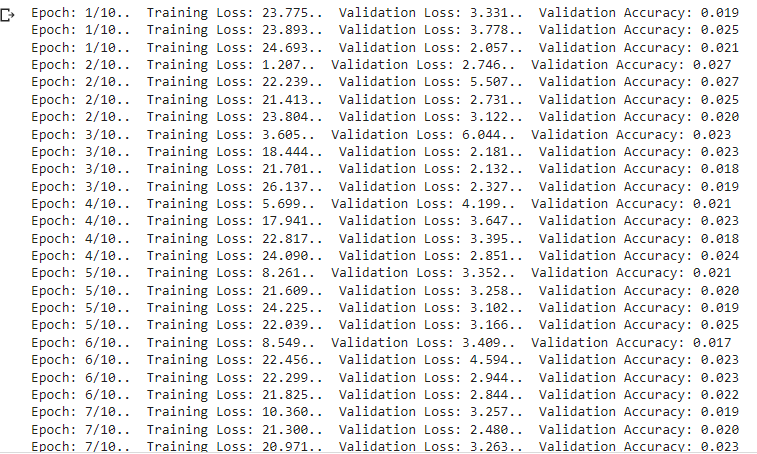

So, I want to use the pretrained models to feature extract features from images, so I used “resnet50 , incepton_v3, Xception, inception_resnet” models, removed the classifier or FC depends on the model architecture, as some models have model.fc and other have model.classifier and other have model.classi , then I concatenated the features and trained the concatenated features in a deep neural network, but I got validation accuracy 1% only, although when I did the same network in Tensorflow I got accuracy of 95%, so can someone clarify what I did wrong in my pytorch notebook?

model_resnet = models.resnet50(pretrained=True).cuda()

model_resnet.fc=nn.Sequential()

model_incep = models.inception_v3 (pretrained=True).cuda()

model_incep.fc=nn.Sequential()

model_xcep = timm.create_model(‘xception’, pretrained=True).cuda()

model_xcep.fc=nn.Sequential()

model_incep_res = timm.create_model(‘ens_adv_inception_resnet_v2’, pretrained=True).cuda()

model_incep_res.classif=nn.Sequential()

def features_extract(loader, model,features):

num_correct = 0

num_samples = 0

model.eval()

i=0

batchsize=64

score=torch.empty((10222,features))

with torch.no_grad():

for x, y in iter(loader):

x = x.to(device=device)

y = y.to(device=device)

scores = model(x)

score[i*64:(i+1)*64]=scores

i+=1

model.train()

return score

score_res=features_extract(trainloader,model_resnet,2048)

score_incep=features_extract(trainloader,model_incep,2048)

score_incep_res=features_extract(trainloader,model_incep_res,1536)

score_xcep=features_extract(trainloader,model_xcep,2048)

features_extracted=torch.cat([score_res,score_incep,score_incep_res,score_xcep],axis=1)

from collections import OrderedDict

my_model=nn.Sequential(OrderedDict([(‘fc1’, nn.Linear(7680, 5000)),

(‘drop’, nn.Dropout(p=0.5)),

(‘fc2’, nn.Linear(5000, 120)),

(‘output’, nn.Softmax(dim=0))])).cuda()

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(my_model.parameters(), 0.001 )

from tqdm import tqdm

epochs = 5

check_every = 1

iters = 0

train_loss=0

modle.to(device)

from tqdm import tqdm

epochs = 5

check_every = 1

iters = 0

train_loss=0

modle.to(device)

for epoch in range(epochs):

for i in range(features_extracted.shape[0]):

# Get data to cuda if possible

data = features_extracted[i].to(device=device)

targets = y_train[i].to(device=device)

# forward

scores = modle(torch.reshape(data,(-1,7680)))

scores=max(scores)

loss = criterion(scores, targets)

# backward

optimizer.zero_grad()

loss.backward()

# gradient descent or adam step

optimizer.step()

def check_accuracy(features_extracted, model,y_train):

num_correct = 0

num_samples = 0

model.eval()

with torch.no_grad():

for i in range(features_extracted.shape[0]):

# Get data to cuda if possible

data = features_extracted[i].to(device=device)

targets = y_train[i].to(device=device)

global scores

scores = model(data)

predictions = torch.argmax(scores)

if(predictions==y_train[i]):

num_correct+=1

model.train()

return num_correct/features_extracted.shape[0]

print(f"Accuracy on Validation set: {check_accuracy(features_val, modle,y_val):.2f}")