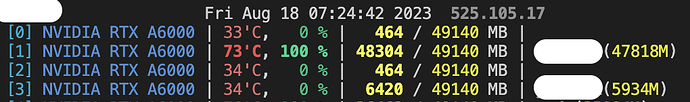

I am using torch 1.13.0+cu117 and have 4 A6000 GPUs.

When I run the same code on several GPUs at the same time, each with a different config, the GPU utilization of each node drops (sometimes to 0%, with training paused for several minutes), and training takes 2 to 4 times longer than on a single node. (See node number 3, which is holding GPU memory while the GPU itself is idle.)

Note that I am not using multiprocessing; I am just running the same code on different nodes for different experiments.

I checked the CPU usage with the “htop” command, but the CPU does not seem to be heavily loaded.

I don’t know how to check where the problem is.
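One check that might narrow this down is to measure, per step, how long the loop waits for the next batch versus how long the training step itself takes. The following is a minimal sketch under assumptions, not the actual training code: `profile_loop`, `step_fn`, and `sync_fn` are hypothetical names introduced here for illustration and are not part of PyTorch.

```python
import time
from typing import Any, Callable, Dict, Iterable, Optional


def profile_loop(
    batches: Iterable[Any],
    step_fn: Callable[[Any], Any],
    sync_fn: Optional[Callable[[], None]] = None,
    max_steps: int = 50,
) -> Dict[str, float]:
    """Split wall-clock time into 'waiting for data' and 'running the step'.

    batches  : any iterable of batches (e.g. a DataLoader)
    step_fn  : hypothetical callable that runs one training step on a batch
    sync_fn  : optional callable that blocks until queued GPU work is done
               (e.g. torch.cuda.synchronize), so step time is not undercounted
    """
    data_s = 0.0
    step_s = 0.0
    steps = 0
    it = iter(batches)
    while steps < max_steps:
        t0 = time.perf_counter()
        try:
            batch = next(it)  # time spent here is time waiting on the input pipeline
        except StopIteration:
            break
        t1 = time.perf_counter()
        step_fn(batch)
        if sync_fn is not None:
            sync_fn()  # wait for asynchronous GPU kernels before stopping the clock
        t2 = time.perf_counter()
        data_s += t1 - t0
        step_s += t2 - t1
        steps += 1
    return {"steps": steps, "data_s": data_s, "step_s": step_s}
```

With PyTorch this could be called as `profile_loop(train_loader, train_step, sync_fn=torch.cuda.synchronize)`, where `train_loader` and `train_step` stand in for the real loader and step function. If `data_s` grows sharply when several experiments run at once while `step_s` stays about the same, the slowdown is more likely in the shared input pipeline (disk reads or data-loading workers) than on the GPUs themselves.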

Could you suggest what I can try to fix this problem?