Hello everyone,

I just started to dig into Explainable ML/DNN field and was trying to play with “captum” a bit when I found a problem that I cannot explain.

N.B.

Since I can’t upload more than image and share more than 2 links (D:), ill share 2 images by links.

I’ve a custom model to perform classification on MNIST (it reaches 97% accuracy and since I use it as a playground im not interested to improve it) with this architecture:

MnistNet(

(FeatureExtractor): Sequential(

(0): Conv2d(1, 8, kernel_size=(2, 2), stride=(1, 1), padding=(1, 1))

(1): ReLU()

(2): Conv2d(8, 16, kernel_size=(2, 2), stride=(2, 2), padding=(1, 1))

(3): ReLU()

(4): Conv2d(16, 20, kernel_size=(2, 2), stride=(1, 1), padding=(1, 1))

(5): ReLU()

(6): Conv2d(20, 24, kernel_size=(2, 2), stride=(2, 2), padding=(1, 1))

(7): ReLU()

)

(Classifier): Sequential(

(0): Flatten()

(1): Linear(in_features=1944, out_features=256, bias=True)

(2): Dropout(p=0.3, inplace=False)

(3): ReLU()

(4): Linear(in_features=256, out_features=64, bias=True)

(5): Dropout(p=0.3, inplace=False)

(6): ReLU()

(7): Linear(in_features=64, out_features=10, bias=True)

)

)

Now I’m trying to perform GuidedGradCam against an image of a four which is rightfully classified with 0.999 probability.

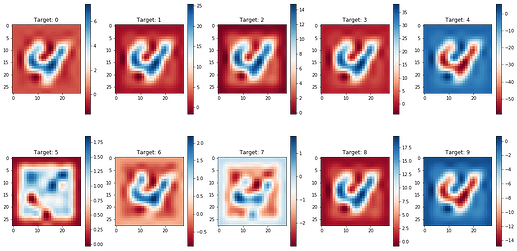

After I perform a “run” of Guided GradCam using every possible target (0-9) i get these results:

Results of GuidedGradCam

Now, even without considering the poor general results, the real problem is the image for the target 4 (the true label and the real model output), in fact it’s “blank”!!

I interpreted this result as something caused by the final ReLU pass in the GradCam so to test it, i implemented my own version of GradCam (not GuidedGradCam!) and this is the result with the same input:

Result of custom GradCam

Not how the results are similar (target 4 and 9 still blank) with expected differences (its GradCam without matmul from DeepLift, so a “less resolution” is expected as it has written in the paper).

Finally i test what happens if i take out the ReLU from my own version of GradCam. In other words i modify the last line of code such that the return now is:

gradcam = torch.sum(A_k*alpha_k, dim=(0))

and not

gradcam = nn.ReLU()(torch.sum(A_k*alpha_k, dim=(0)))

Now target 4 and 9 have very “important” outputs with really low values (up to -50 and -14). It seems that the output of GradCam should be considered in its absolute value without performing ReLU.

Question

Given the above context and results, has anyone a good explanations of why this happens and why using ReLU make sense in the original GradCam?