Hi everyone,

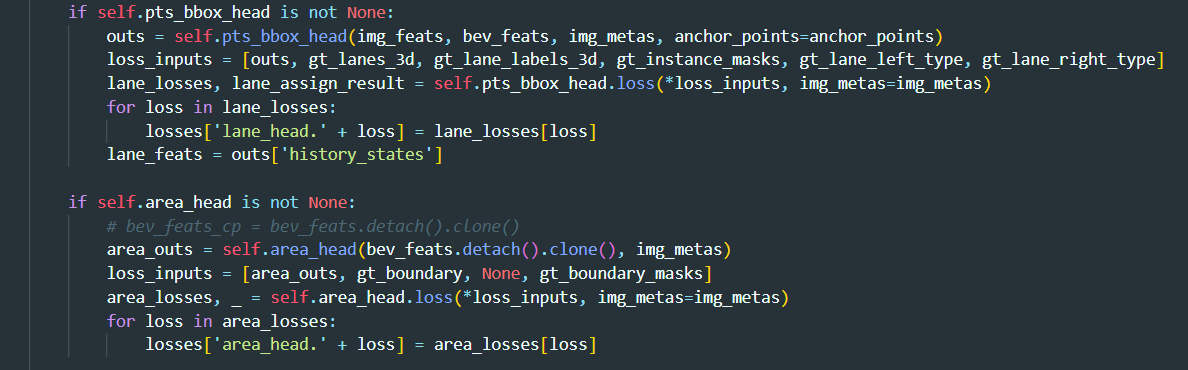

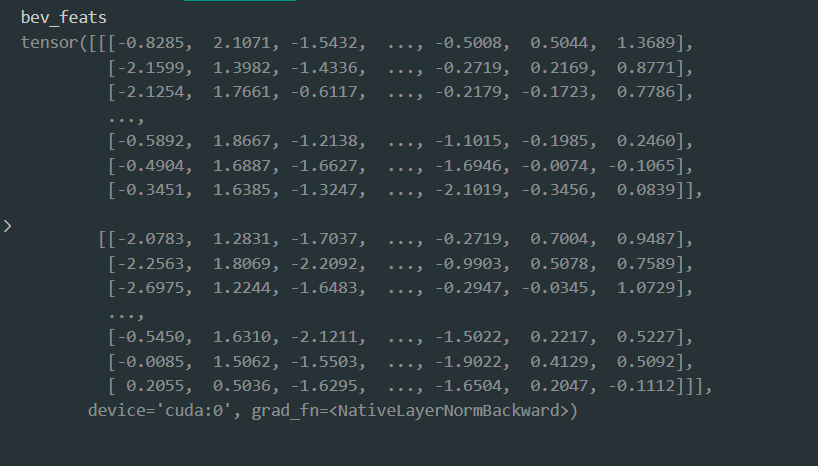

I’ve noticed some interesting behavior in my model, which uses the mmcv framework for both training and testing. The model has a backbone that produces BEV (Bird’s Eye View) features and two heads, pts_bbox_head and area_head, that make predictions from those shared BEV features. However, when I detach and clone the BEV features before passing them to area_head, the features are still updated differently from when I remove area_head entirely. Could you explain why that is the case? Thanks
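For reference, a minimal sketch of the setup described above, assuming plain PyTorch with toy `nn.Linear` layers standing in for the real backbone and heads. The function name, layer shapes, and losses are illustrative only and are not the actual mmcv modules. It compares the backbone gradient with and without the detached area_head branch under the same seed.

```python
import torch
import torch.nn as nn


def backbone_grad(with_area_head: bool) -> torch.Tensor:
    """Return the backbone weight gradient for one toy training step."""
    torch.manual_seed(0)
    # Hypothetical stand-ins; the real model builds these through mmcv.
    backbone = nn.Linear(8, 4)        # produces the shared "BEV" features
    pts_bbox_head = nn.Linear(4, 2)
    # Always construct area_head so both runs consume the same random numbers.
    area_head = nn.Linear(4, 3)

    x = torch.randn(5, 8)
    bev = backbone(x)

    loss = pts_bbox_head(bev).sum()
    if with_area_head:
        # Detach and clone so no gradient flows from area_head to the backbone.
        loss = loss + area_head(bev.detach().clone()).sum()

    loss.backward()
    return backbone.weight.grad.clone()


g_with = backbone_grad(with_area_head=True)
g_without = backbone_grad(with_area_head=False)
print(torch.allclose(g_with, g_without))
```

In this isolated toy case the detached branch adds nothing to the backbone gradient, so a difference in a real run would presumably come from something other than this gradient path. One candidate worth checking (an assumption, not something confirmed here) is whether constructing the extra head changes the random state used for initialization, dropout, or data augmentation.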

- With area_head:

- Without area_head: