Hi,

I have 6161 images of handwritten text lines. I train with a batch size of 20 images and apply data augmentation to every batch on the fly.
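Note that 6161 is not a multiple of 20 (6161 = 308 × 20 + 1). Assuming the data loader keeps the incomplete final batch (for example, PyTorch's `DataLoader` with its default `drop_last=False`), every epoch ends with a batch of a single image, whose gradient is much noisier than a 20-image average. The sketch below just does that arithmetic; `batch_sizes` is a hypothetical helper, not part of any library.

```python
def batch_sizes(num_samples, batch_size, drop_last=False):
    """Return the size of each batch in one epoch (hypothetical helper)."""
    full, remainder = divmod(num_samples, batch_size)
    sizes = [batch_size] * full
    # The leftover samples form one smaller batch unless drop_last is set.
    if remainder and not drop_last:
        sizes.append(remainder)
    return sizes

sizes = batch_sizes(6161, 20)
print(len(sizes), sizes[-1])  # 309 1
```

If the spikes recur once per epoch, this one-image batch may be worth ruling out, e.g. by setting `drop_last=True`.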

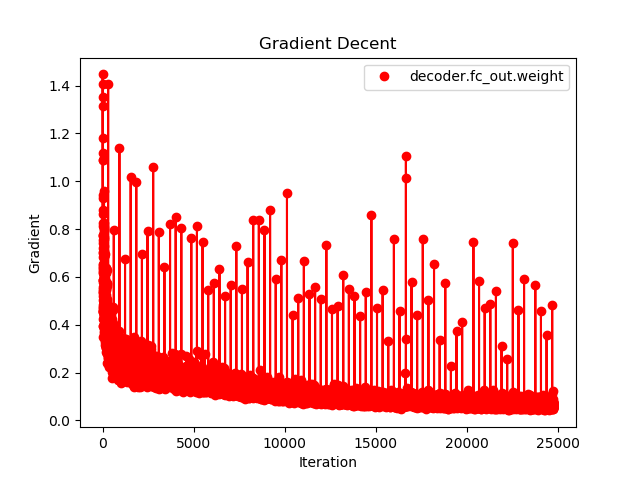

The image above shows the gradient norm of the last fully connected (fc) layer during training. I am also clipping the gradient norm at a value of 2.
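When reading such a plot it matters whether the logged norm was measured before or after clipping. As an illustration, here is a minimal NumPy sketch of clipping by the combined L2 norm of all gradients, the scheme used by `torch.nn.utils.clip_grad_norm_`; NumPy stands in for the framework, and `clip_by_global_norm` is a hypothetical name. Like `clip_grad_norm_`, it returns the norm measured *before* clipping, so a plot of that value can exceed 2 even though the applied update never does.

```python
import numpy as np

def clip_by_global_norm(grads, max_norm):
    """Scale gradient arrays so their combined L2 norm is at most max_norm.

    Returns the clipped gradients and the total norm measured before clipping.
    """
    total_norm = float(np.sqrt(sum(np.sum(g ** 2) for g in grads)))
    # Only shrink, never enlarge; the small epsilon avoids division by zero.
    scale = min(1.0, max_norm / (total_norm + 1e-6))
    return [g * scale for g in grads], total_norm

grads = [np.array([3.0, 4.0]), np.array([0.0])]  # combined norm is 5
clipped, norm_before = clip_by_global_norm(grads, max_norm=2.0)
norm_after = float(np.sqrt(sum(np.sum(g ** 2) for g in clipped)))
print(norm_before, round(norm_after, 4))  # 5.0 2.0
```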

My question is: shouldn't the norm of the stochastic gradient decrease during training? Are these spikes in the gradient norm normal, or is something wrong with the training?
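To describe the spikes more precisely than "there are spikes", one option is to flag steps whose norm is far above the recent typical value and then check whether they occur at regular intervals (e.g. once per epoch). A minimal sketch, assuming the per-step norms are already logged to a list; `flag_spikes`, the window of 50 steps, and the factor of 3 are arbitrary, hypothetical choices.

```python
import numpy as np

def flag_spikes(norms, window=50, factor=3.0):
    """Return step indices whose norm exceeds `factor` times the median
    of the preceding `window` steps (hypothetical diagnostic)."""
    norms = np.asarray(norms, dtype=float)
    spikes = []
    for i in range(window, len(norms)):
        baseline = np.median(norms[i - window:i])
        if norms[i] > factor * baseline:
            spikes.append(i)
    return spikes

norms = [1.0] * 100
norms[60] = 5.0  # one synthetic spike
spikes = flag_spikes(norms)
print(spikes)  # [60]
```

Computing `np.diff(spikes)` on real logs would then show whether the spikes are periodic or irregular.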