I have already tested the same code on 1 node with 2 GPUs, but the issue happens on 2 nodes × 2 GPUs.

The Python code is as follows:

import os

import torch

import torch.distributed as dist

from torch.nn.parallel import DistributedDataParallel as DDP

rank = int(os.environ["RANK"])

local_rank = int(os.environ["LOCAL_RANK"])

world_size = int(os.environ["WORLD_SIZE"])

dist.init_process_group(backend=backend)

device = torch.device("cuda:{}".format(local_rank))

model = NeuralNetwork().to(device) # copy model from cpu to gpu

# [*] using DistributedDataParallel

# model = DDP(model, device_ids=[local_rank], output_device=local_rank, broadcast_buffers=False, find_unused_parameters=True) # [*] DDP(...)

print('DDP starting')

model = DDP(model, broadcast_buffers=False, find_unused_parameters=True)

print('DDP ending')
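The `DDP starting` lines in the output further down cannot be attributed to a particular process, because the snippet prints the same text on every rank. A minimal sketch of a rank-tagged print, assuming only the `RANK`, `LOCAL_RANK` and `WORLD_SIZE` variables that the launcher already sets; the helper name `rank_tag` is hypothetical and not part of the original script:

```python
import os


def rank_tag(env=os.environ):
    """Build a '[rank r/w local l]' prefix from the launcher's variables."""
    rank = int(env["RANK"])
    local_rank = int(env["LOCAL_RANK"])
    world_size = int(env["WORLD_SIZE"])
    # A rank outside 0..world_size-1 points at a launch configuration problem.
    if not 0 <= rank < world_size:
        raise ValueError(f"RANK {rank} outside 0..{world_size - 1}")
    return f"[rank {rank}/{world_size} local {local_rank}]"


# Example with a plain dict standing in for os.environ.
example_env = {"RANK": "2", "LOCAL_RANK": "0", "WORLD_SIZE": "4"}
tag = rank_tag(example_env)

# flush=True so the line is not lost in a buffer if the process hangs later.
print(tag, "DDP starting", flush=True)
```

In the real script the call would be `rank_tag()` with no argument, so that it reads the process environment.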

The script on the master node:

NCCL_P2P_DISABLE=1 \

TOKENIZERS_PARALLELISM=True NCCL_DEBUG=INFO \

NCCL_DEBUG_SUBSYS=INIT,GRAPH NCCL_TOPO_DUMP_FILE=topo.xml \

python -m torch.distributed.launch \

--nproc_per_node 2 --nnodes 2 --node_rank 0 \

--master_addr="8.0.0.215" --master_port=8002 \

single-machine-and-multi-GPU-DistributedDataParallel-launch.py

The script on the worker node:

NCCL_P2P_DISABLE=1 \

TOKENIZERS_PARALLELISM=True NCCL_DEBUG=INFO \

NCCL_DEBUG_SUBSYS=INIT,GRAPH NCCL_TOPO_DUMP_FILE=topo.xml \

python -m torch.distributed.launch \

--nproc_per_node 2 --nnodes 2 --node_rank 1 \

--master_addr="8.0.0.215" --master_port=8002 \

single-machine-and-multi-GPU-DistributedDataParallel-launch.py

The terminal output on the master node hangs after:

(prompt) [root@gpu215 pytorch-multi-GPU-training-tutorial]# bash script/mult_master.sh

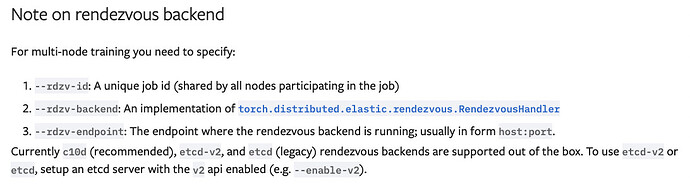

/root/miniconda3/envs/prompt/lib/python3.9/site-packages/torch/distributed/launch.py:181: FutureWarning: The module torch.distributed.launch is deprecated

and will be removed in future. Use torchrun.

Note that --use-env is set by default in torchrun.

If your script expects `--local-rank` argument to be set, please

change it to read from `os.environ['LOCAL_RANK']` instead. See

https://pytorch.org/docs/stable/distributed.html#launch-utility for

further instructions

warnings.warn(

WARNING:torch.distributed.run:

*****************************************

Setting OMP_NUM_THREADS environment variable for each process to be 1 in default, to avoid your system being overloaded, please further tune the variable for optimal performance in your application as needed.

*****************************************

DDP starting

DDP starting

gpu215:14096:14096 [0] NCCL INFO Bootstrap : Using ens6f0:8.0.0.215<0>

gpu215:14096:14096 [0] NCCL INFO NET/Plugin : No plugin found (libnccl-net.so), using internal implementation

gpu215:14096:14096 [0] NCCL INFO cudaDriverVersion 12010

NCCL version 2.14.3+cuda11.7

gpu215:14096:14191 [0] NCCL INFO NET/IB : No device found.

gpu215:14096:14191 [0] NCCL INFO NET/Socket : Using [0]ens6f0:8.0.0.215<0>

gpu215:14096:14191 [0] NCCL INFO Using network Socket

gpu215:14097:14097 [1] NCCL INFO cudaDriverVersion 12010

gpu215:14097:14097 [1] NCCL INFO Bootstrap : Using ens6f0:8.0.0.215<0>

gpu215:14097:14097 [1] NCCL INFO NET/Plugin : No plugin found (libnccl-net.so), using internal implementation

gpu215:14097:14208 [1] NCCL INFO NET/IB : No device found.

gpu215:14097:14208 [1] NCCL INFO NET/Socket : Using [0]ens6f0:8.0.0.215<0>

gpu215:14097:14208 [1] NCCL INFO Using network Socket

gpu215:14097:14208 [1] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu215:14097:14208 [1] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu215:14097:14208 [1] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu215:14097:14208 [1] NCCL INFO NCCL_P2P_LEVEL set by environment to LOC

gpu215:14097:14208 [1] NCCL INFO === System : maxBw 1.2 totalBw 24.0 ===

gpu215:14097:14208 [1] NCCL INFO CPU/2 (1/0/-1)

gpu215:14097:14208 [1] NCCL INFO + SYS[5000.0] - CPU/6

gpu215:14097:14208 [1] NCCL INFO + SYS[5000.0] - CPU/7

gpu215:14097:14208 [1] NCCL INFO + PCI[24.0] - GPU/41000 (0)

gpu215:14097:14208 [1] NCCL INFO CPU/6 (1/0/-1)

gpu215:14097:14208 [1] NCCL INFO + SYS[5000.0] - CPU/2

gpu215:14097:14208 [1] NCCL INFO + SYS[5000.0] - CPU/7

gpu215:14097:14208 [1] NCCL INFO + PCI[24.0] - GPU/C1000 (1)

gpu215:14097:14208 [1] NCCL INFO CPU/7 (1/0/-1)

gpu215:14097:14208 [1] NCCL INFO + SYS[5000.0] - CPU/2

gpu215:14097:14208 [1] NCCL INFO + SYS[5000.0] - CPU/6

gpu215:14097:14208 [1] NCCL INFO + PCI[3.0] - NIC/E1000

gpu215:14097:14208 [1] NCCL INFO + NET[1.2] - NET/0 (0/0/1.250000)

gpu215:14097:14208 [1] NCCL INFO ==========================================

gpu215:14097:14208 [1] NCCL INFO GPU/41000 :GPU/41000 (0/5000.000000/LOC) GPU/C1000 (3/24.000000/SYS) CPU/2 (1/24.000000/PHB) CPU/6 (2/24.000000/SYS) CPU/7 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu215:14097:14208 [1] NCCL INFO GPU/C1000 :GPU/41000 (3/24.000000/SYS) GPU/C1000 (0/5000.000000/LOC) CPU/2 (2/24.000000/SYS) CPU/6 (1/24.000000/PHB) CPU/7 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu215:14097:14208 [1] NCCL INFO NET/0 :GPU/41000 (4/1.250000/SYS) GPU/C1000 (4/1.250000/SYS) CPU/2 (3/1.250000/SYS) CPU/6 (3/1.250000/SYS) CPU/7 (2/1.250000/PHB) NET/0 (0/5000.000000/LOC)

gpu215:14097:14208 [1] NCCL INFO Setting affinity for GPU 1 to ff0000,00000000,00ff0000,00000000

gpu215:14097:14208 [1] NCCL INFO Pattern 4, crossNic 0, nChannels 1, bw 1.200000/1.200000, type SYS/SYS, sameChannels 1

gpu215:14097:14208 [1] NCCL INFO 0 : NET/0 GPU/0 GPU/1 NET/0

gpu215:14097:14208 [1] NCCL INFO Pattern 1, crossNic 0, nChannels 1, bw 2.400000/1.200000, type SYS/SYS, sameChannels 1

gpu215:14097:14208 [1] NCCL INFO 0 : NET/0 GPU/0 GPU/1 NET/0

gpu215:14096:14191 [0] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu215:14097:14208 [1] NCCL INFO Pattern 3, crossNic 0, nChannels 0, bw 0.000000/0.000000, type NVL/PIX, sameChannels 1

gpu215:14096:14191 [0] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu215:14096:14191 [0] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu215:14096:14191 [0] NCCL INFO NCCL_P2P_LEVEL set by environment to LOC

gpu215:14096:14191 [0] NCCL INFO === System : maxBw 1.2 totalBw 24.0 ===

gpu215:14096:14191 [0] NCCL INFO CPU/2 (1/0/-1)

gpu215:14096:14191 [0] NCCL INFO + SYS[5000.0] - CPU/6

gpu215:14096:14191 [0] NCCL INFO + SYS[5000.0] - CPU/7

gpu215:14096:14191 [0] NCCL INFO + PCI[24.0] - GPU/41000 (0)

gpu215:14096:14191 [0] NCCL INFO CPU/6 (1/0/-1)

gpu215:14096:14191 [0] NCCL INFO + SYS[5000.0] - CPU/2

gpu215:14096:14191 [0] NCCL INFO + SYS[5000.0] - CPU/7

gpu215:14096:14191 [0] NCCL INFO + PCI[24.0] - GPU/C1000 (1)

gpu215:14096:14191 [0] NCCL INFO CPU/7 (1/0/-1)

gpu215:14096:14191 [0] NCCL INFO + SYS[5000.0] - CPU/2

gpu215:14096:14191 [0] NCCL INFO + SYS[5000.0] - CPU/6

gpu215:14096:14191 [0] NCCL INFO + PCI[3.0] - NIC/E1000

gpu215:14096:14191 [0] NCCL INFO + NET[1.2] - NET/0 (0/0/1.250000)

gpu215:14096:14191 [0] NCCL INFO ==========================================

gpu215:14096:14191 [0] NCCL INFO GPU/41000 :GPU/41000 (0/5000.000000/LOC) GPU/C1000 (3/24.000000/SYS) CPU/2 (1/24.000000/PHB) CPU/6 (2/24.000000/SYS) CPU/7 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu215:14096:14191 [0] NCCL INFO GPU/C1000 :GPU/41000 (3/24.000000/SYS) GPU/C1000 (0/5000.000000/LOC) CPU/2 (2/24.000000/SYS) CPU/6 (1/24.000000/PHB) CPU/7 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu215:14096:14191 [0] NCCL INFO NET/0 :GPU/41000 (4/1.250000/SYS) GPU/C1000 (4/1.250000/SYS) CPU/2 (3/1.250000/SYS) CPU/6 (3/1.250000/SYS) CPU/7 (2/1.250000/PHB) NET/0 (0/5000.000000/LOC)

gpu215:14096:14191 [0] NCCL INFO Setting affinity for GPU 0 to ff0000,00000000,00ff0000

gpu215:14096:14191 [0] NCCL INFO Pattern 4, crossNic 0, nChannels 1, bw 1.200000/1.200000, type SYS/SYS, sameChannels 1

gpu215:14096:14191 [0] NCCL INFO 0 : NET/0 GPU/0 GPU/1 NET/0

gpu215:14096:14191 [0] NCCL INFO Pattern 1, crossNic 0, nChannels 1, bw 2.400000/1.200000, type SYS/SYS, sameChannels 1

gpu215:14096:14191 [0] NCCL INFO 0 : NET/0 GPU/0 GPU/1 NET/0

gpu215:14096:14191 [0] NCCL INFO Pattern 3, crossNic 0, nChannels 0, bw 0.000000/0.000000, type NVL/PIX, sameChannels 1

gpu215:14097:14208 [1] NCCL INFO Tree 0 : 0 -> 1 -> -1/-1/-1

gpu215:14097:14208 [1] NCCL INFO Tree 1 : 0 -> 1 -> -1/-1/-1

gpu215:14097:14208 [1] NCCL INFO Ring 00 : 0 -> 1 -> 2

gpu215:14096:14191 [0] NCCL INFO Tree 0 : -1 -> 0 -> 1/2/-1

gpu215:14097:14208 [1] NCCL INFO Ring 01 : 0 -> 1 -> 2

gpu215:14096:14191 [0] NCCL INFO Tree 1 : 2 -> 0 -> 1/-1/-1

gpu215:14097:14208 [1] NCCL INFO Trees [0] -1/-1/-1->1->0 [1] -1/-1/-1->1->0

gpu215:14096:14191 [0] NCCL INFO Channel 00/02 : 0 1 2 3

gpu215:14096:14191 [0] NCCL INFO Channel 01/02 : 0 1 2 3

gpu215:14096:14191 [0] NCCL INFO Ring 00 : 3 -> 0 -> 1

gpu215:14096:14191 [0] NCCL INFO Ring 01 : 3 -> 0 -> 1

gpu215:14096:14191 [0] NCCL INFO Trees [0] 1/2/-1->0->-1 [1] 1/-1/-1->0->2

gpu215:14096:14191 [0] NCCL INFO Channel 00/0 : 3[c1000] -> 0[41000] [receive] via NET/Socket/0

gpu215:14097:14208 [1] NCCL INFO Channel 00/0 : 1[c1000] -> 2[41000] [send] via NET/Socket/0

gpu215:14096:14191 [0] NCCL INFO Channel 01/0 : 3[c1000] -> 0[41000] [receive] via NET/Socket/0

gpu215:14096:14191 [0] NCCL INFO Channel 00 : 0[41000] -> 1[c1000] via SHM/direct/direct

gpu215:14096:14191 [0] NCCL INFO Channel 01 : 0[41000] -> 1[c1000] via SHM/direct/direct

gpu215:14097:14208 [1] NCCL INFO Channel 01/0 : 1[c1000] -> 2[41000] [send] via NET/Socket/0

gpu215:14097:14208 [1] NCCL INFO Connected all rings

gpu215:14097:14208 [1] NCCL INFO Channel 00 : 1[c1000] -> 0[41000] via SHM/direct/direct

gpu215:14097:14208 [1] NCCL INFO Channel 01 : 1[c1000] -> 0[41000] via SHM/direct/direct

gpu215:14096:14191 [0] NCCL INFO Connected all rings

gpu215:14096:14191 [0] NCCL INFO Channel 00/0 : 2[41000] -> 0[41000] [receive] via NET/Socket/0

gpu215:14096:14191 [0] NCCL INFO Channel 01/0 : 2[41000] -> 0[41000] [receive] via NET/Socket/0

gpu215:14096:14191 [0] NCCL INFO Channel 00/0 : 0[41000] -> 2[41000] [send] via NET/Socket/0

gpu215:14096:14191 [0] NCCL INFO Channel 01/0 : 0[41000] -> 2[41000] [send] via NET/Socket/0

The terminal output on the worker node:

(prompt) [root@gpu216 pytorch-multi-GPU-training-tutorial]# bash script/mult_worker.sh

/root/miniconda3/envs/prompt/lib/python3.9/site-packages/torch/distributed/launch.py:181: FutureWarning: The module torch.distributed.launch is deprecated

and will be removed in future. Use torchrun.

Note that --use-env is set by default in torchrun.

If your script expects `--local-rank` argument to be set, please

change it to read from `os.environ['LOCAL_RANK']` instead. See

https://pytorch.org/docs/stable/distributed.html#launch-utility for

further instructions

warnings.warn(

WARNING:torch.distributed.run:

*****************************************

Setting OMP_NUM_THREADS environment variable for each process to be 1 in default, to avoid your system being overloaded, please further tune the variable for optimal performance in your application as needed.

*****************************************

DDP starting

DDP starting

gpu216:10445:10445 [0] NCCL INFO cudaDriverVersion 12010

gpu216:10445:10445 [0] NCCL INFO Bootstrap : Using ens16f0:8.0.0.216<0>

gpu216:10445:10445 [0] NCCL INFO NET/Plugin : No plugin found (libnccl-net.so), using internal implementation

gpu216:10445:10525 [0] NCCL INFO NET/IB : Using [0]mlx5_0:1/RoCE [RO]; OOB ens16f0:8.0.0.216<0>

gpu216:10445:10525 [0] NCCL INFO Using network IB

gpu216:10446:10446 [1] NCCL INFO cudaDriverVersion 12010

gpu216:10446:10446 [1] NCCL INFO Bootstrap : Using ens16f0:8.0.0.216<0>

gpu216:10446:10446 [1] NCCL INFO NET/Plugin : No plugin found (libnccl-net.so), using internal implementation

gpu216:10446:10536 [1] NCCL INFO NET/IB : Using [0]mlx5_0:1/RoCE [RO]; OOB ens16f0:8.0.0.216<0>

gpu216:10446:10536 [1] NCCL INFO Using network IB

gpu216:10446:10536 [1] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu216:10446:10536 [1] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu216:10446:10536 [1] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu216:10446:10536 [1] NCCL INFO NCCL_P2P_LEVEL set by environment to LOC

gpu216:10446:10536 [1] NCCL INFO === System : maxBw 1.2 totalBw 24.0 ===

gpu216:10446:10536 [1] NCCL INFO CPU/2 (1/0/-1)

gpu216:10446:10536 [1] NCCL INFO + SYS[5000.0] - CPU/6

gpu216:10446:10536 [1] NCCL INFO + SYS[5000.0] - CPU/0

gpu216:10446:10536 [1] NCCL INFO + PCI[24.0] - GPU/41000 (2)

gpu216:10446:10536 [1] NCCL INFO CPU/6 (1/0/-1)

gpu216:10446:10536 [1] NCCL INFO + SYS[5000.0] - CPU/2

gpu216:10446:10536 [1] NCCL INFO + SYS[5000.0] - CPU/0

gpu216:10446:10536 [1] NCCL INFO + PCI[24.0] - GPU/C1000 (3)

gpu216:10446:10536 [1] NCCL INFO CPU/0 (1/0/-1)

gpu216:10446:10536 [1] NCCL INFO + SYS[5000.0] - CPU/2

gpu216:10446:10536 [1] NCCL INFO + SYS[5000.0] - CPU/6

gpu216:10446:10536 [1] NCCL INFO + PCI[6.0] - NIC/1000

gpu216:10446:10536 [1] NCCL INFO + NET[1.2] - NET/0 (4e4ed33d194e5074/1/1.250000)

gpu216:10446:10536 [1] NCCL INFO ==========================================

gpu216:10446:10536 [1] NCCL INFO GPU/41000 :GPU/41000 (0/5000.000000/LOC) GPU/C1000 (3/24.000000/SYS) CPU/2 (1/24.000000/PHB) CPU/6 (2/24.000000/SYS) CPU/0 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu216:10446:10536 [1] NCCL INFO GPU/C1000 :GPU/41000 (3/24.000000/SYS) GPU/C1000 (0/5000.000000/LOC) CPU/2 (2/24.000000/SYS) CPU/6 (1/24.000000/PHB) CPU/0 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu216:10446:10536 [1] NCCL INFO NET/0 :GPU/41000 (4/1.250000/SYS) GPU/C1000 (4/1.250000/SYS) CPU/2 (3/1.250000/SYS) CPU/6 (3/1.250000/SYS) CPU/0 (2/1.250000/PHB) NET/0 (0/5000.000000/LOC)

gpu216:10446:10536 [1] NCCL INFO Setting affinity for GPU 1 to ff0000,00000000,00ff0000,00000000

gpu216:10445:10525 [0] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu216:10445:10525 [0] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu216:10445:10525 [0] NCCL INFO KV Convert to int : could not find value of 'HygonGenuine' in dictionary, falling back to 0

gpu216:10446:10536 [1] NCCL INFO Pattern 4, crossNic 0, nChannels 1, bw 1.200000/1.200000, type SYS/SYS, sameChannels 1

gpu216:10446:10536 [1] NCCL INFO 0 : NET/0 GPU/2 GPU/3 NET/0

gpu216:10446:10536 [1] NCCL INFO Pattern 1, crossNic 0, nChannels 1, bw 2.400000/1.200000, type SYS/SYS, sameChannels 1

gpu216:10446:10536 [1] NCCL INFO 0 : NET/0 GPU/2 GPU/3 NET/0

gpu216:10446:10536 [1] NCCL INFO Pattern 3, crossNic 0, nChannels 0, bw 0.000000/0.000000, type NVL/PIX, sameChannels 1

gpu216:10445:10525 [0] NCCL INFO NCCL_P2P_LEVEL set by environment to LOC

gpu216:10445:10525 [0] NCCL INFO === System : maxBw 1.2 totalBw 24.0 ===

gpu216:10445:10525 [0] NCCL INFO CPU/2 (1/0/-1)

gpu216:10445:10525 [0] NCCL INFO + SYS[5000.0] - CPU/6

gpu216:10445:10525 [0] NCCL INFO + SYS[5000.0] - CPU/0

gpu216:10445:10525 [0] NCCL INFO + PCI[24.0] - GPU/41000 (2)

gpu216:10445:10525 [0] NCCL INFO CPU/6 (1/0/-1)

gpu216:10445:10525 [0] NCCL INFO + SYS[5000.0] - CPU/2

gpu216:10445:10525 [0] NCCL INFO + SYS[5000.0] - CPU/0

gpu216:10445:10525 [0] NCCL INFO + PCI[24.0] - GPU/C1000 (3)

gpu216:10445:10525 [0] NCCL INFO CPU/0 (1/0/-1)

gpu216:10445:10525 [0] NCCL INFO + SYS[5000.0] - CPU/2

gpu216:10445:10525 [0] NCCL INFO + SYS[5000.0] - CPU/6

gpu216:10445:10525 [0] NCCL INFO + PCI[6.0] - NIC/1000

gpu216:10445:10525 [0] NCCL INFO + NET[1.2] - NET/0 (4e4ed33d194e5074/1/1.250000)

gpu216:10445:10525 [0] NCCL INFO ==========================================

gpu216:10445:10525 [0] NCCL INFO GPU/41000 :GPU/41000 (0/5000.000000/LOC) GPU/C1000 (3/24.000000/SYS) CPU/2 (1/24.000000/PHB) CPU/6 (2/24.000000/SYS) CPU/0 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu216:10445:10525 [0] NCCL INFO GPU/C1000 :GPU/41000 (3/24.000000/SYS) GPU/C1000 (0/5000.000000/LOC) CPU/2 (2/24.000000/SYS) CPU/6 (1/24.000000/PHB) CPU/0 (2/24.000000/SYS) NET/0 (4/1.250000/SYS)

gpu216:10445:10525 [0] NCCL INFO NET/0 :GPU/41000 (4/1.250000/SYS) GPU/C1000 (4/1.250000/SYS) CPU/2 (3/1.250000/SYS) CPU/6 (3/1.250000/SYS) CPU/0 (2/1.250000/PHB) NET/0 (0/5000.000000/LOC)

gpu216:10445:10525 [0] NCCL INFO Setting affinity for GPU 0 to ff0000,00000000,00ff0000

gpu216:10445:10525 [0] NCCL INFO Pattern 4, crossNic 0, nChannels 1, bw 1.200000/1.200000, type SYS/SYS, sameChannels 1

gpu216:10445:10525 [0] NCCL INFO 0 : NET/0 GPU/2 GPU/3 NET/0

gpu216:10445:10525 [0] NCCL INFO Pattern 1, crossNic 0, nChannels 1, bw 2.400000/1.200000, type SYS/SYS, sameChannels 1

gpu216:10445:10525 [0] NCCL INFO 0 : NET/0 GPU/2 GPU/3 NET/0

gpu216:10445:10525 [0] NCCL INFO Pattern 3, crossNic 0, nChannels 0, bw 0.000000/0.000000, type NVL/PIX, sameChannels 1

gpu216:10446:10536 [1] NCCL INFO Tree 0 : 2 -> 3 -> -1/-1/-1

gpu216:10445:10525 [0] NCCL INFO Tree 0 : 0 -> 2 -> 3/-1/-1

gpu216:10446:10536 [1] NCCL INFO Tree 1 : 2 -> 3 -> -1/-1/-1

gpu216:10445:10525 [0] NCCL INFO Tree 1 : -1 -> 2 -> 3/0/-1

gpu216:10446:10536 [1] NCCL INFO Ring 00 : 2 -> 3 -> 0

gpu216:10445:10525 [0] NCCL INFO Ring 00 : 1 -> 2 -> 3

gpu216:10446:10536 [1] NCCL INFO Ring 01 : 2 -> 3 -> 0

gpu216:10445:10525 [0] NCCL INFO Ring 01 : 1 -> 2 -> 3

gpu216:10446:10536 [1] NCCL INFO Trees [0] -1/-1/-1->3->2 [1] -1/-1/-1->3->2

gpu216:10445:10525 [0] NCCL INFO Trees [0] 3/-1/-1->2->0 [1] 3/0/-1->2->-1

gpu216:10445:10525 [0] NCCL INFO Channel 00/0 : 1[c1000] -> 2[41000] [receive] via NET/IB/0

gpu216:10446:10536 [1] NCCL INFO Channel 00/0 : 3[c1000] -> 0[41000] [send] via NET/IB/0

gpu216:10445:10525 [0] NCCL INFO Channel 01/0 : 1[c1000] -> 2[41000] [receive] via NET/IB/0

gpu216:10445:10525 [0] NCCL INFO Channel 00 : 2[41000] -> 3[c1000] via SHM/direct/direct

gpu216:10445:10525 [0] NCCL INFO Channel 01 : 2[41000] -> 3[c1000] via SHM/direct/direct

gpu216:10446:10536 [1] NCCL INFO Channel 01/0 : 3[c1000] -> 0[41000] [send] via NET/IB/0

gpu216:10446:10536 [1] NCCL INFO Connected all rings

gpu216:10446:10536 [1] NCCL INFO Channel 00 : 3[c1000] -> 2[41000] via SHM/direct/direct

gpu216:10446:10536 [1] NCCL INFO Channel 01 : 3[c1000] -> 2[41000] via SHM/direct/direct

As you can see, the output hangs after

print('DDP starting')

According to nvidia-smi and top, the model has already been loaded onto the 4 GPUs, and the CPU utilization of the Python processes is above 100%.

nvidia-smi terminal output:

Every 1.0s: nvidia-smi gpu216: Thu Apr 13 15:02:28 2023

Thu Apr 13 15:02:29 2023

+---------------------------------------------------------------------------------------+

| NVIDIA-SMI 530.30.02 Driver Version: 530.30.02 CUDA Version: 12.1 |

|-----------------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|=========================================+======================+======================|

| 0 NVIDIA A800 80GB PCIe Off| 00000000:41:00.0 Off | 0 |

| N/A 33C P0 66W / 300W| 1845MiB / 81920MiB | 0% Default |

| | | Disabled |

+-----------------------------------------+----------------------+----------------------+

| 1 NVIDIA A800 80GB PCIe Off| 00000000:C1:00.0 Off | 0 |

| N/A 32C P0 63W / 300W| 943MiB / 81920MiB | 0% Default |

| | | Disabled |

+-----------------------------------------+----------------------+----------------------+

+---------------------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=======================================================================================|

| 0 N/A N/A 3948 G /usr/libexec/Xorg 4MiB |

| 0 N/A N/A 10445 C ...t/miniconda3/envs/prompt/bin/python 936MiB |

| 0 N/A N/A 10446 C ...t/miniconda3/envs/prompt/bin/python 902MiB |

| 1 N/A N/A 3948 G /usr/libexec/Xorg 4MiB |

| 1 N/A N/A 10446 C ...t/miniconda3/envs/prompt/bin/python 936MiB |

+---------------------------------------------------------------------------------------+
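In the process table above, PID 10446 holds memory on both GPU 0 and GPU 1, while PID 10445 appears only on GPU 0. A minimal sketch for spotting this automatically, assuming the `nvidia-smi` output has been captured as text; the function name `gpus_per_pid` is hypothetical. Whether this is related to the hang is not established here. One possible explanation, stated as an assumption, is that the second process also created a CUDA context on the default device.

```python
import re
from collections import defaultdict

# Matches compute ("C") rows of the nvidia-smi process table, e.g.
#   | 0 N/A N/A 10445 C ...t/miniconda3/envs/prompt/bin/python 936MiB |
# Rows whose process name contains spaces are not handled by this sketch.
PROCESS_ROW = re.compile(
    r"^\|\s*(?P<gpu>\d+)\s+N/A\s+N/A\s+(?P<pid>\d+)\s+C\s+\S+\s+(?P<mem>\d+)MiB\s*\|$"
)


def gpus_per_pid(smi_text):
    """Return {pid: [gpu indices]} for compute processes in nvidia-smi text."""
    found = defaultdict(list)
    for line in smi_text.splitlines():
        match = PROCESS_ROW.match(line.strip())
        if match:
            found[int(match.group("pid"))].append(int(match.group("gpu")))
    return dict(found)


# Process rows copied from the table above.
sample = """
| 0 N/A N/A 3948 G /usr/libexec/Xorg 4MiB |
| 0 N/A N/A 10445 C ...t/miniconda3/envs/prompt/bin/python 936MiB |
| 0 N/A N/A 10446 C ...t/miniconda3/envs/prompt/bin/python 902MiB |
| 1 N/A N/A 3948 G /usr/libexec/Xorg 4MiB |
| 1 N/A N/A 10446 C ...t/miniconda3/envs/prompt/bin/python 936MiB |
"""

usage = gpus_per_pid(sample)
# Keep only processes that hold memory on more than one GPU.
multi = {pid: gpus for pid, gpus in usage.items() if len(gpus) > 1}
print(multi)
```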

top terminal output:

top - 15:04:49 up 43 min, 1 user, load average: 4.28, 4.29, 4.10

Tasks: 1428 total, 4 running, 1424 sleeping, 0 stopped, 0 zombie

%Cpu(s): 2.0 us, 1.4 sy, 0.0 ni, 96.6 id, 0.0 wa, 0.0 hi, 0.0 si, 0.0 st

MiB Mem : 128545.8 total, 115598.2 free, 8534.6 used, 4412.9 buff/cache

MiB Swap: 4096.0 total, 4096.0 free, 0.0 used. 118872.3 avail Mem

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

10445 root 20 0 14.1g 2.0g 474996 R 300.3 1.6 106:59.50 python

10446 root 20 0 16.5g 2.6g 581368 R 100.3 2.0 35:45.30 python

4032 root 20 0 124848 22064 6520 S 2.6 0.0 0:21.90 pmdalinux

5645 root 20 0 969240 94652 37984 S 1.3 0.1 0:09.48 node

6911 root 20 0 276884 6648 4096 R 1.3 0.0 0:32.89 top

5755 root 20 0 1080092 193328 43860 S 1.0 0.1 0:15.05 node

3183 root 20 0 125816 6444 4856 S 0.7 0.0 0:05.43 irqbalance

3374 root 20 0 780008 46344 19136 S 0.7 0.0 0:17.67 tuned

4025 root 20 0 115124 12768 6864 S 0.7 0.0 0:11.23 pmdaproc

So, what happened? Is there anything I can do to check this?
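One check that can be made directly from the logs above is which network transport NCCL selected on each host: the gpu215 ranks report `Using network Socket`, while the gpu216 ranks report `Using network IB`. A minimal sketch that extracts this from captured log text; the names `nccl_transports` and `transports_agree` are hypothetical, and whether the difference explains the hang is an assumption that this post does not confirm.

```python
import re
from collections import defaultdict

# Matches lines such as:
#   gpu215:14096:14191 [0] NCCL INFO Using network Socket
USING_NETWORK = re.compile(
    r"^(?P<host>[^:\s]+):\d+:\d+ \[\d+\] NCCL INFO Using network (?P<net>\S+)"
)


def nccl_transports(log_text):
    """Return {hostname: set of transports} found in NCCL INFO output."""
    found = defaultdict(set)
    for line in log_text.splitlines():
        match = USING_NETWORK.match(line.strip())
        if match:
            found[match.group("host")].add(match.group("net"))
    return dict(found)


def transports_agree(transports):
    """True when every host reported the same single transport."""
    distinct = {net for nets in transports.values() for net in nets}
    return len(distinct) <= 1


# Lines copied from the master and worker outputs above.
sample = """
gpu215:14096:14191 [0] NCCL INFO Using network Socket
gpu215:14097:14208 [1] NCCL INFO Using network Socket
gpu216:10445:10525 [0] NCCL INFO Using network IB
gpu216:10446:10536 [1] NCCL INFO Using network IB
"""

result = nccl_transports(sample)
print(result)
print("same transport on all hosts:", transports_agree(result))
```

NCCL's transport selection can be influenced through environment variables such as `NCCL_IB_DISABLE` and `NCCL_SOCKET_IFNAME`, so rerunning with the same setting on both nodes and applying this check again is one way to test the assumption.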