Hello,

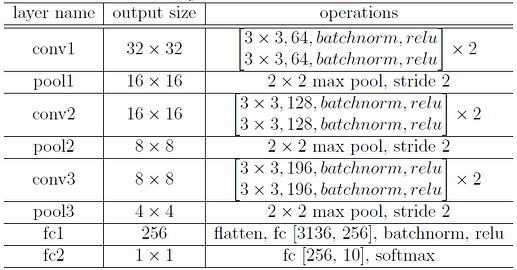

I am trying to implement an 8-layer toy network in PyTorch that accepts a 32 x 32 color image, following the table above. However, I get an error because the input to the first fully connected (f.c.) layer has the wrong number of features (1 x 784 instead of the expected 1 x 3136).

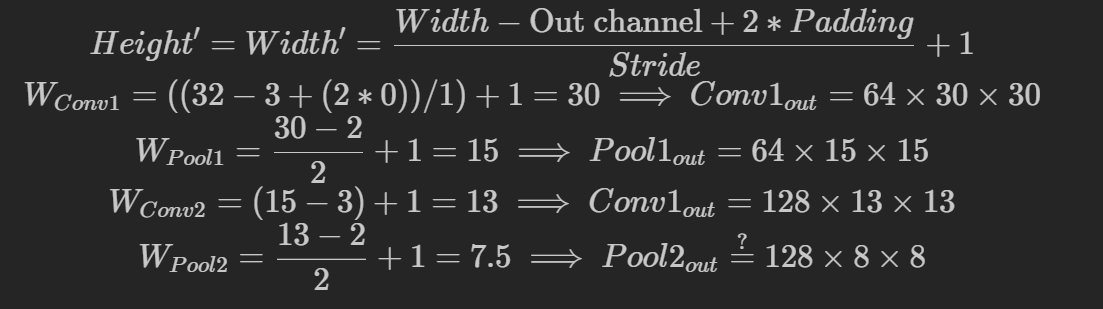

Can someone point out what I missed? I followed the formula:

But in reality, torch gives me a different output size, which I am confused about because it does not follow the formula.

Neither method leads to the desired output size, which would give me the correct in_features for the first f.c. layer.
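As a sanity check on the arithmetic, here is a minimal sketch that applies the output-size formula from the PyTorch documentation for `Conv2d` and `MaxPool2d`, `floor((n + 2p - k) / s) + 1` for dilation 1, layer by layer. The helper names `out_size` and `trace` are hypothetical. The `conv_padding=1` case is only an assumption about what the table might intend, since the table is available only as an image.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Spatial size after a conv or max-pool layer (dilation 1, floor rounding)."""
    return (n + 2 * padding - kernel) // stride + 1


def trace(n, conv_padding):
    """Sizes after each (3x3 conv -> 2x2 max pool) stage, for three stages."""
    sizes = []
    for _ in range(3):
        n = out_size(n, kernel=3, stride=1, padding=conv_padding)  # conv
        n = out_size(n, kernel=2, stride=2)                        # max pool
        sizes.append(n)
    return sizes


print(trace(32, conv_padding=0))  # [15, 6, 2] -> 196 * 2 * 2 = 784
print(trace(32, conv_padding=1))  # [16, 8, 4] -> 196 * 4 * 4 = 3136
```

With no padding the sizes match what torch reports (784 features after flattening). With the assumed padding of 1 on each convolution they reach the 3136 features that the first f.c. layer expects.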

Hoping that someone can help me find my mistake. I have attached the table and my code below. Thank you.

And here is my code:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self, max_epochs=10, learning_rate=0.01, num_classes=10):
        super(Net, self).__init__()

        # Model
        self.conv1 = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1)
        self.conv1_bn = nn.BatchNorm2d(64)
        self.conv2 = nn.Conv2d(64, 128, 3, 1)
        self.conv2_bn = nn.BatchNorm2d(128)
        self.conv3 = nn.Conv2d(128, 196, 3, 1)
        self.conv3_bn = nn.BatchNorm2d(196)
        self.fc1 = nn.Linear(in_features=3136, out_features=256)
        self.fc1_bn = nn.BatchNorm1d(256)
        self.fc2 = nn.Linear(256, num_classes)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv1_bn(x)
        x = F.relu(x)
        x = F.max_pool2d(x, kernel_size=2, stride=2)
        # Outputs 1 x 64 x 15 x 15

        x = self.conv2(x)
        x = self.conv2_bn(x)
        # Outputs 1 x 128 x 13 x 13
        x = F.relu(x)
        x = F.max_pool2d(x, kernel_size=2, stride=2)
        # Outputs 1 x 128 x 6 x 6, but shouldn't it be 1 x 128 x 8 x 8?

        x = self.conv3(x)
        x = self.conv3_bn(x)
        # Outputs 1 x 196 x 4 x 4
        x = F.relu(x)
        x = F.max_pool2d(x, kernel_size=2, stride=2)
        # Outputs 1 x 196 x 2 x 2

        x = torch.flatten(x, start_dim=1)
        # Outputs: 1 x 784; Expected: 1 x 3136 = (196 x 4 x 4)

        x = self.fc1(x)
        x = self.fc1_bn(x)
        x = F.relu(x)
        return F.softmax(self.fc2(x), dim=1)


# Test code
random_data = torch.rand((1, 3, 32, 32))
my_nn = Net()
result = my_nn(random_data)
print(result)
```
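One way to check the shapes without running the whole model is to push a dummy tensor through just the conv and pooling stages. The sketch below assumes `padding=1` on each 3 x 3 convolution. That value is a guess at what the table intends, not something confirmed by it. Batch norm and the f.c. layers are left out because they do not change the spatial size.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Assumption: padding=1 on every 3x3 conv (not confirmed by the table).
convs = nn.ModuleList([
    nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1),
    nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1),
    nn.Conv2d(128, 196, kernel_size=3, stride=1, padding=1),
])

x = torch.rand(1, 3, 32, 32)
shapes = []
with torch.no_grad():
    for conv in convs:
        # conv -> ReLU -> 2x2 max pool, same order as in forward()
        x = F.max_pool2d(F.relu(conv(x)), kernel_size=2, stride=2)
        shapes.append(tuple(x.shape))
        print(tuple(x.shape))

flat = torch.flatten(x, start_dim=1)
print(tuple(flat.shape))  # (1, 3136) under the padding=1 assumption
```

Separately, `nn.BatchNorm1d` in training mode needs more than one value per channel, so a single-image test like the one above would likely need `my_nn.eval()` or a batch of at least two images once the shapes line up.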