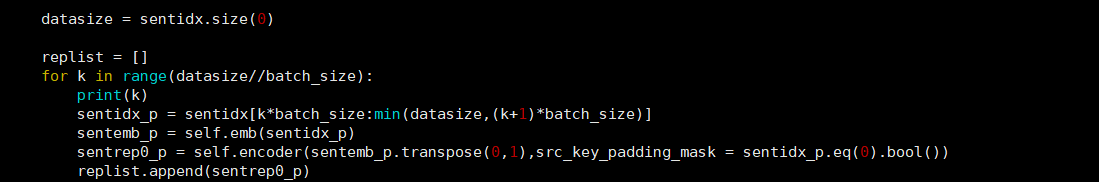

I have about 8000 sentences and I am trying to compute their representations with a transformer. I first fed all 8000 sentences into the transformer at once, but it reported "CUDA out of memory". I then fed the sentences through the transformer 32 at a time in a loop, but it reported the same error again. I have only posted the code for this part (note: sentidx is a tensor of shape 8000×47, batch_size = 32, self.emb is the nn.Embedding module, and self.encoder is the standard transformer module provided by torch.nn). The "CUDA out of memory" error is raised when k=54. Does anyone have a suggestion?
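For reference, memory that grows batch after batch until it fails at some k is often a sign that each batch's output stays on the GPU with its autograd graph attached. Whether that is what happens here is only a guess. The following is a minimal sketch, assuming that only the representations are needed (no gradients) and that the encoder takes batch-first input. The function name `encode_in_batches` and its `device` parameter are made up for illustration and are not part of the original code.

```python
import torch
import torch.nn as nn


def encode_in_batches(emb, encoder, sentidx, batch_size=32, device="cpu"):
    """Encode sentidx (num_sentences x seq_len) in chunks of batch_size.

    Hypothetical helper: assumes inference only and a batch-first encoder.
    """
    emb.eval()
    encoder.eval()  # disable dropout for inference
    outputs = []
    # no_grad stops autograd from recording a graph, so activations
    # from earlier batches can be freed instead of piling up.
    with torch.no_grad():
        for start in range(0, sentidx.size(0), batch_size):
            batch = sentidx[start:start + batch_size].to(device)
            hidden = encoder(emb(batch))  # (batch, seq_len, d_model)
            # Move the result off the GPU so only one batch lives there.
            outputs.append(hidden.cpu())
    return torch.cat(outputs, dim=0)
```

With the names from the question, a call might look like `encode_in_batches(self.emb, self.encoder, sentidx, batch_size=32, device="cuda")`. If gradients are needed for training, this sketch does not apply as written, and a smaller batch size or gradient accumulation would be the usual alternatives.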