Good afternoon. Please help me combine two schedulers: ReduceLROnPlateau + OneCycleLR (CosineAnnealingLR). I can't get it to work myself.

import torch

optimizer = torch.optim.Adam(model.parameters(), lr=LR)

scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(
    optimizer,
    factor=0.7,
    patience=scheduler_N,
    threshold=1e-10,
    verbose=True)

LR_shed = []  # learning rate recorded at every batch, for plotting
max_lr = LR / 0.7

for epoch in range(model_epoch_start, model_epoch_end):
    scheduler_b = torch.optim.lr_scheduler.OneCycleLR(
        optimizer,
        max_lr=max_lr,
        total_steps=len(train),
        pct_start=0.3,
        cycle_momentum=False)
    for x, y in train:
        …
        model.train()
        …
        model.eval()
        …
        LR_shed.append(optimizer.param_groups[0]['lr'])
        scheduler_b.step()
    …
    scheduler.step(loss_val)
    max_lr = scheduler._last_lr[0] / 0.7
    …

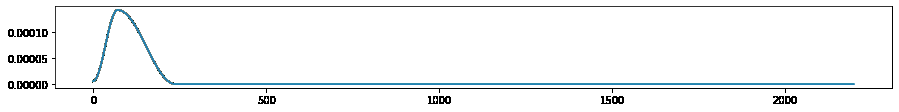

plt.plot(LR_shed)

I am looking for a working example of ReduceLROnPlateau + OneCycleLR.

Please help.
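
For reference, here is a minimal sketch of one possible way to combine the two, not a definitive solution. It assumes the difficulty is that both schedulers write to the same optimizer's learning rate, so the value ReduceLROnPlateau reads back is whatever OneCycleLR left there at the end of its cycle. The sketch lets ReduceLROnPlateau control a separate dummy optimizer (`peak_holder`, a hypothetical name) that only stores the peak LR, and rebuilds OneCycleLR from that peak each epoch. The model, the data, the constant `loss_val` and `patience=0` are placeholders chosen so the example runs on its own.

```python
import torch

torch.manual_seed(0)

# Hypothetical stand-ins for the real model and data loader.
model = torch.nn.Linear(4, 1)
train = [(torch.randn(8, 4), torch.randn(8, 1)) for _ in range(10)]
loss_fn = torch.nn.MSELoss()

LR = 1e-3
optimizer = torch.optim.Adam(model.parameters(), lr=LR)

# Assumption: a dummy optimizer that is never stepped; it only holds the
# peak LR so that OneCycleLR cannot overwrite the value the plateau
# scheduler works on.
peak_holder = torch.optim.SGD([torch.nn.Parameter(torch.zeros(1))], lr=LR / 0.7)
scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(
    peak_holder, factor=0.7, patience=0, threshold=1e-10)

LR_shed = []  # per-batch LR of the real optimizer
peaks = []    # peak LR used in each epoch

for epoch in range(3):
    # Read the current peak from the dummy optimizer, not from `optimizer`.
    max_lr = peak_holder.param_groups[0]['lr']
    peaks.append(max_lr)
    scheduler_b = torch.optim.lr_scheduler.OneCycleLR(
        optimizer,
        max_lr=max_lr,
        total_steps=len(train),
        pct_start=0.3,
        cycle_momentum=False)

    model.train()
    for x, y in train:
        optimizer.zero_grad()
        loss = loss_fn(model(x), y)
        loss.backward()
        optimizer.step()
        LR_shed.append(optimizer.param_groups[0]['lr'])
        scheduler_b.step()  # one-cycle schedule advances once per batch

    # Placeholder: a constant validation loss, so the plateau logic fires.
    # Replace with the real validation loss.
    loss_val = 1.0
    scheduler.step(loss_val)  # plateau schedule advances once per epoch
```

With this split, `plt.plot(LR_shed)` should show one cycle per epoch whose height follows `peaks`, and the peak shrinks by `factor` only when the validation loss stops improving.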