I have some images that I pre-process with contrast adjustment and normalization. After the pre-processing they look fine, but the images returned from the dataloader (after I convert them to float32 tensors) usually don't match what I saw before the conversion. Each image has shape 3xHxW: the middle channel is the contrast-adjusted raw image, and the other two channels are noisy versions of that channel.

The contrast adjustment used is:

skimage.exposure.equalize_adapthist(image[, …]) Contrast Limited Adaptive Histogram Equalization (CLAHE).

This returns float64 output, which I then normalize and save to a pickle file for loading later.

Code snippet to show the histograms:

img = pickle.load(open(fname, "rb"))

print('initial image type is', type(img[1, 0, 0]))

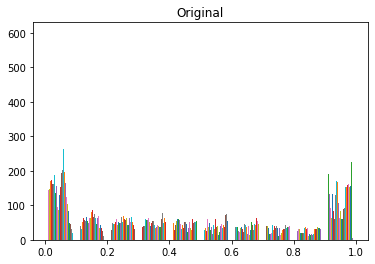

plt.figure()

plt.hist(img[1,:,:])

plt.title('Original')

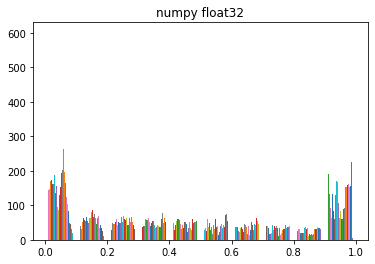

img2=img.astype(np.float32)

plt.figure()

plt.hist(img2[1,:,:])

plt.title('numpy float32')

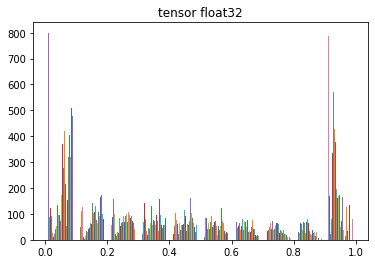

img = torch.as_tensor(img, dtype=torch.float32)

plt.figure()

plt.hist(img[1,:,:])

plt.title('tensor float32')

output:

initial image type is <class 'numpy.float64'>
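To take plotting out of the picture, here is a minimal sketch of a check on the values themselves. It uses a synthetic array as a stand-in for my pickled image (the shape, the seed, and the [0, 1) value range are assumptions, not my real data). It also histograms the flattened middle channel with shared bin edges, because as far as I understand the matplotlib docs, plt.hist treats each column of a 2-D array as a separate dataset, which may matter when comparing the plots.

```python
import numpy as np

# Synthetic stand-in for the pickled image (assumption: values normalized to [0, 1)).
rng = np.random.default_rng(0)
img64 = rng.random((3, 64, 64))       # float64, shape 3xHxW
img32 = img64.astype(np.float32)      # same cast as in the snippet above

# Largest element-wise change introduced by the float64 -> float32 cast.
max_abs_diff = np.abs(img64 - img32.astype(np.float64)).max()

# Histogram the flattened middle channel with shared bin edges so the
# two sets of counts can be compared bin by bin.
edges = np.linspace(0.0, 1.0, 11)
counts64, _ = np.histogram(img64[1].ravel(), bins=edges)
counts32, _ = np.histogram(img32[1].ravel(), bins=edges)

print("max abs diff:", max_abs_diff)
print("bins that differ:", int(np.count_nonzero(counts64 != counts32)))
```

If the maximum difference is tiny and the counts agree (or nearly agree), the cast itself is probably not what changes the picture.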

My guess is that going from float64 to float32 would have to clip values that fall outside the float32 range, but I didn't have any of those. Since float32 has coarser spacing between representable values, could values be collapsing onto the nearest float32 number? If so, why does the histogram only change when going from numpy float32 to a tensor, and not visibly when going from numpy float64 to numpy float32?
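For reference, here is a small sketch of how large the float32 range and rounding step are, using NumPy only. The sample values are made up for illustration and are not taken from my images.

```python
import numpy as np

info = np.finfo(np.float32)
print("float32 max:", info.max)   # largest finite float32 value
print("float32 eps:", info.eps)   # gap between 1.0 and the next float32

# Round-trip a few illustrative float64 values in [0, 1] through float32.
x64 = np.array([0.0, 0.1, 0.5, 0.123456789012345, 1.0])
x32 = x64.astype(np.float32)

# Relative error of the cast (denominator guarded so that 0.0 does not divide by zero).
rel_err = np.abs(x32.astype(np.float64) - x64) / np.maximum(np.abs(x64), info.tiny)
print("max relative error:", rel_err.max())
print("distinct values kept:", len(np.unique(x32)), "of", len(x64))
```

For normalized data like this, the cast should only move each value by a relative amount below eps, so I would not expect it to reshape a histogram with ordinary bin widths.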