Hi, I keep getting CUDA out of memory errors. I have a 3090 with 24 GB of VRAM, but only about 7 GB ever gets allocated while about 15 GB is always free.

RuntimeError: CUDA out of memory. Tried to allocate 92.00 MiB (GPU 0; 24.00 GiB total capacity; 6.90 GiB already allocated; 14.90 GiB free; 6.98 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF
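For anyone reading along, the `max_split_size_mb` hint in the message could be tried roughly as below. This is only a sketch: the helper name `build_alloc_conf` is hypothetical and the value 128 is an arbitrary assumption, not a recommended setting. Note that in the error above, reserved (6.98 GiB) is barely larger than allocated (6.90 GiB), so fragmentation may not be the cause and this setting may not help.

```python
import os


def build_alloc_conf(max_split_size_mb: int) -> str:
    """Build the value for PYTORCH_CUDA_ALLOC_CONF (hypothetical helper)."""
    if max_split_size_mb <= 0:
        raise ValueError("max_split_size_mb must be a positive integer")
    return f"max_split_size_mb:{max_split_size_mb}"


# Set the variable before the first CUDA allocation happens.
# The safest place is before `import torch` in the entry script.
# 128 is an assumed example value, not a tuned one.
os.environ["PYTORCH_CUDA_ALLOC_CONF"] = build_alloc_conf(128)
```

The same setting can be exported in the shell before launching the script instead of being set from Python.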

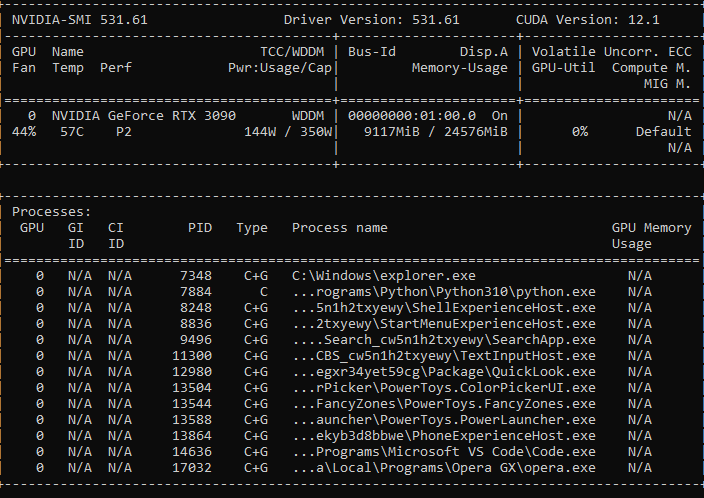

I also checked nvidia-smi to see whether any other process was taking up memory, and found none.

This was the peak memory usage just before it gave me the error.
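The same numbers can also be read from inside the process, which helps compare what PyTorch sees against nvidia-smi. A minimal sketch, assuming a PyTorch version recent enough to provide `torch.cuda.mem_get_info`; the helper names `to_gib` and `cuda_memory_report` are made up for illustration.

```python
def to_gib(num_bytes: int) -> float:
    """Convert a byte count to GiB, rounded to two decimals."""
    return round(num_bytes / 1024**3, 2)


def cuda_memory_report(device: int = 0):
    """Return a dict of memory figures in GiB, or None if CUDA is unavailable."""
    try:
        import torch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    # Free and total memory as reported by the driver for this device.
    free_bytes, total_bytes = torch.cuda.mem_get_info(device)
    return {
        "total": to_gib(total_bytes),
        "free": to_gib(free_bytes),
        # Memory currently occupied by tensors.
        "allocated": to_gib(torch.cuda.memory_allocated(device)),
        # Memory held by PyTorch's caching allocator.
        "reserved": to_gib(torch.cuda.memory_reserved(device)),
        # Highest allocated value seen so far in this process.
        "peak_allocated": to_gib(torch.cuda.max_memory_allocated(device)),
    }


if __name__ == "__main__":
    print(cuda_memory_report())
```

Calling `cuda_memory_report()` right before the failing step would show whether the free memory the driver reports matches the 14.90 GiB in the error message.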

Any help?