Hi,

I'm not sure how to build a recurrent layer like the one shown below in a way that lets autograd run smoothly.

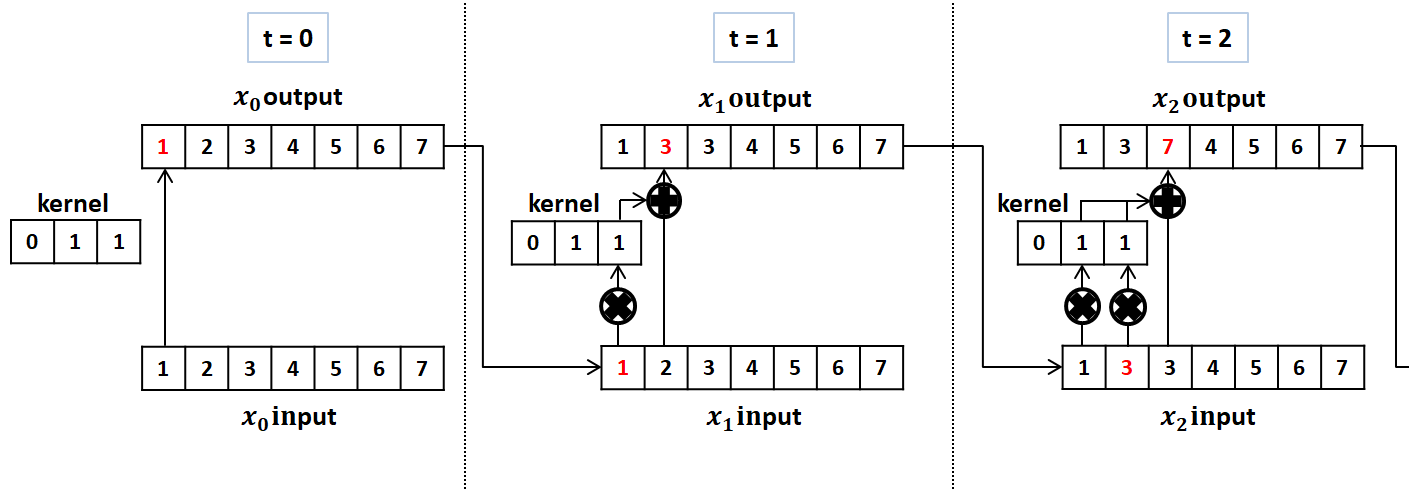

x is a 1-D tensor created by the previous layer. At each time step t, the kernel strides one step and is convolved with x, until time_step = len(x). The result of the convolution is a single value, which is added to x_t. x_t is updated at every time step and then becomes the input of the next time step.
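To make the description concrete, here is a minimal PyTorch sketch of one possible reading of this recurrence. It rests on several assumptions that the text does not pin down: the kernel is applied to the last `kernel_size` already-updated values, the history before the first step is zero, and the names `RecurrentConv1d`, `kernel_size` and `weight` are purely illustrative. The loop collects results in a Python list and uses `torch.stack` rather than writing into x in place, which is the usual way to keep the graph valid for autograd.

```python
import torch
import torch.nn as nn


class RecurrentConv1d(nn.Module):
    """Hypothetical layer: y[t] = x[t] + dot(weight, last k updated values)."""

    def __init__(self, kernel_size=3):
        super().__init__()
        self.kernel_size = kernel_size
        # Learnable 1-D kernel (small random init, an arbitrary choice).
        self.weight = nn.Parameter(torch.randn(kernel_size) * 0.1)

    def forward(self, x):
        # x: 1-D tensor of shape (T,)
        k = self.kernel_size
        # Assumption: the history before t = 0 is all zeros.
        history = [x.new_zeros(()) for _ in range(k)]
        outputs = []
        for t in range(x.shape[0]):
            # Window of the k most recent updated values, oldest first.
            window = torch.stack(history[-k:])
            # The convolution at this step collapses to a single value.
            y_t = x[t] + torch.dot(self.weight, window)
            outputs.append(y_t)
            # The updated value feeds into the following time steps.
            history.append(y_t)
        # Build the output without any in-place writes.
        return torch.stack(outputs)


layer = RecurrentConv1d(kernel_size=3)
x = torch.randn(8)
out = layer(x)  # shape (8,), differentiable w.r.t. layer.weight
```

Because every step is built from ordinary tensor operations, calling `.backward()` on any scalar computed from `out` should propagate gradients through all time steps to `layer.weight`. The Python loop is sequential, so it will be slow for long inputs.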

After the convolutional recurrent layer, for the autograd part, the generated data x and the real data are compared using only an L1 loss (that is, the absolute difference between the real data and x).
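For reference, a small sketch of what the L1 loss computes and how autograd handles it. The tensors `generated` and `real` are made-up stand-ins for the layer output and the real data. Note that `nn.L1Loss` with its default reduction averages the absolute differences, so it is not the raw signed difference.

```python
import torch
import torch.nn as nn

# Made-up values standing in for the generated output and the real data.
generated = torch.tensor([1.0, 3.0, 2.0], requires_grad=True)
real = torch.tensor([2.0, 1.0, 4.0])

criterion = nn.L1Loss()  # default reduction="mean"
loss = criterion(generated, real)  # mean(|generated - real|)
loss.backward()

# The gradient of the mean absolute error is sign(generated - real) / N.
expected_grad = torch.sign(generated.detach() - real) / generated.numel()
```

In a real training loop, `generated` would be the output of the recurrent layer, and the same `loss.backward()` call would carry gradients back into its parameters.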

Thanks in advance,

Zeyilain