Hello,

This question might sound strange, but I couldn’t find information about my use case.

My goal is to combine two different neural networks. The first one is already running: it takes an image and classifies it. Now I want to feed its output into a second network that assigns the image to a broader category.

Concretely, this means that the first net can classify a bunch of flowers and trees, e.g. Rose or Palm Tree. The second net should then classify any kind of tree as “tree” and any kind of flower as “flower”.
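To make the target mapping explicit, here is a minimal sketch of the labels I am after. The class names other than Rose and Palm Tree are made up for illustration, and `FINE_TO_COARSE` and `coarse_label` are hypothetical names, not part of my actual code:

```python
# Coarse label ids: flowers are 0, trees are 1.
FLOWER, TREE = 0, 1

# Hypothetical fine-grained class names; the first network's real
# label set is larger and may be named differently.
FINE_TO_COARSE = {
    "rose": FLOWER,
    "tulip": FLOWER,
    "palm tree": TREE,
    "oak": TREE,
}


def coarse_label(fine_name: str) -> int:
    """Return 0 for any kind of flower and 1 for any kind of tree."""
    return FINE_TO_COARSE[fine_name.lower()]
```

The second network is supposed to learn this kind of grouping from the first network's output tensors rather than from the class names.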

I have the labels and the output tensors, but the problem is that these tensors are not images: they have only 3 dimensions, since they are missing the size parameters.

Now I don’t know whether there is a more generic network structure that can simply be trained on tensors without the image dimensions, because I think convolutional networks are not the right approach.
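What I have in mind is roughly the following: a small fully connected network that flattens each tensor before classifying it. This is only a sketch under assumptions. The shape `(1, 1, 10)` is a placeholder for my real 3-dimensional tensors, and `TensorClassifier`, the hidden size, and the layer count are arbitrary choices, so I am not sure this is the right structure:

```python
import torch
from torch import nn


class TensorClassifier(nn.Module):
    """Small fully connected classifier for non-image tensors (sketch)."""

    def __init__(self, sample_shape, num_classes=2, hidden=32):
        super().__init__()
        # Number of values in one sample, e.g. 1 * 1 * 10 = 10.
        in_features = 1
        for dim in sample_shape:
            in_features *= dim
        self.net = nn.Sequential(
            nn.Flatten(),  # (batch, *sample_shape) -> (batch, in_features)
            nn.Linear(in_features, hidden),
            nn.ReLU(),
            nn.Linear(hidden, num_classes),  # one logit per class
        )

    def forward(self, x):
        return self.net(x)


sample_shape = (1, 1, 10)  # placeholder, not my real tensor shape
model = TensorClassifier(sample_shape)

single = torch.randn(*sample_shape)   # one 3-dimensional sample
logits = model(single.unsqueeze(0))   # add a leading batch dimension
```

The `unsqueeze(0)` adds a batch dimension in front of a single sample, which PyTorch layers generally expect.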

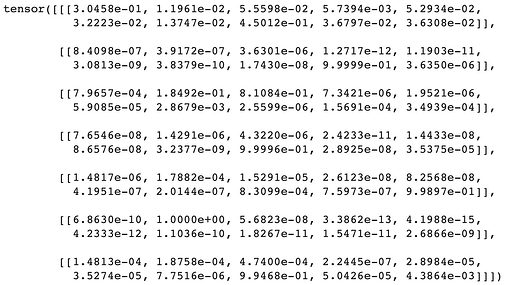

I wrote a data loader to provide inputs and labels in the following structure:

Those are seven output tensors that should be assigned to two different classes.

In this case, all of the input tensors are flowers, so all of the labels are set to the flower class:

![]()

I defined flowers as 0 and trees as 1.
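In code, the batch described above looks roughly like this. It is a simplified stand-in rather than my actual loader: the random values and the shape `(1, 1, 10)` are placeholders for the real output tensors of the first network:

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

sample_shape = (1, 1, 10)  # placeholder, not my real tensor shape

# Stand-in for the seven output tensors of the first network.
inputs = torch.randn(7, *sample_shape)

# All seven samples are flowers, so every label is 0 (trees would be 1).
labels = torch.zeros(7, dtype=torch.long)

loader = DataLoader(TensorDataset(inputs, labels), batch_size=7)
batch_inputs, batch_labels = next(iter(loader))
```

Here `batch_inputs` carries the batch dimension in front of the three tensor dimensions, and `batch_labels` holds one integer class id per sample.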

If I try to feed this input into a pre-trained model, the input is missing a dimension. My own networks are also failing, and I wonder where to start.

Thank you very much in advance.