I’m using transfer learning for binary classification of brain MRI images. I used 5-fold cross-validation with an SGD optimizer and an exponential learning-rate scheduler, and tried two initial learning rates, 1e-4 and 1e-5, to get the results below. The learning curves and the accuracy behave strangely, and I don’t know how to interpret or improve them. Please help me identify the problems and suggest things to try.

The optimizer and scheduler settings I used:

params = [

{ 'params': parameters['base_parameters'], 'lr': sets.learning_rate },

{ 'params': parameters['new_parameters'], 'lr': sets.learning_rate }

]

optimizer = torch.optim.SGD(params=params, momentum=0.9, weight_decay=1e-4)

scheduler = optim.lr_scheduler.ExponentialLR(optimizer, gamma=0.95)
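To make the schedule concrete, here is a minimal sketch in plain Python of how the learning rate evolves under these settings. It assumes scheduler.step() is called once per epoch, so the rate is multiplied by gamma = 0.95 after every epoch. The helper name exponential_lr and the 100-epoch horizon are hypothetical and only for illustration; they are not part of my training code.

```python
def exponential_lr(initial_lr: float, gamma: float, epochs: int) -> list[float]:
    """Learning rate in effect during each epoch, assuming the rate is
    multiplied by gamma once after every epoch (ExponentialLR's decay rule)."""
    return [initial_lr * gamma ** epoch for epoch in range(epochs)]


# The two initial learning rates I tried; 100 epochs is an assumed horizon.
schedule_1e4 = exponential_lr(1e-4, gamma=0.95, epochs=100)
schedule_1e5 = exponential_lr(1e-5, gamma=0.95, epochs=100)

for epoch in (0, 25, 50, 99):
    print(f"epoch {epoch:3d}: lr(1e-4 run) = {schedule_1e4[epoch]:.2e}, "
          f"lr(1e-5 run) = {schedule_1e5[epoch]:.2e}")
```

With this decay, the 1e-4 run falls below 1e-5 after about 45 epochs, so the late part of that run uses learning rates similar to the early part of the 1e-5 run.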

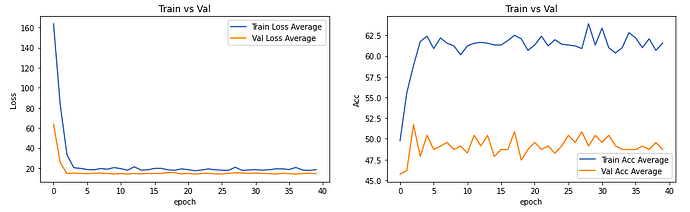

Averaged learning curve and accuracy results with lr = 1e-4 (averaged over 5 folds):

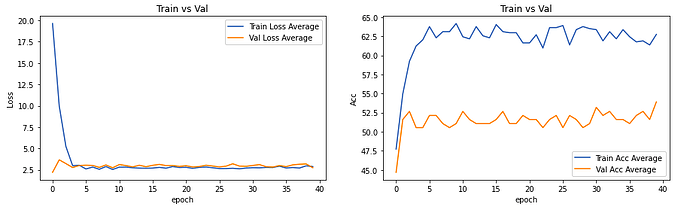

Averaged learning curve and accuracy results with lr = 1e-5:

So I have a few questions regarding the results.

- Will the results be different if I change from SGD to Adam with the same learning rate?

- With lr = 1e-4, the model mostly predicts a single class. From searching the forum, people said a lower learning rate might solve the issue, and indeed with lr = 1e-5 the model gives more diverse predictions (still with poor accuracy). However, the train/validation accuracy stops improving and keeps fluctuating after a certain number of epochs. What might be the reason for this?

- The loss curve stops decreasing around 2.5, but in normal situations, isn’t the loss value lower than 1? Why does my loss stop at 2.5?

- The data augmentation I used was to randomly select brain regions during training (i.e. some regions might be ignored). Could this be why the accuracy does not improve, as shown in the curves? Or should I use another data augmentation method (e.g. training on the whole brain with rotations)?
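For context on the loss question, here is a small sanity check of what a plateau at 2.5 would mean. It assumes the plotted loss is a mean cross-entropy in natural-log units, which I have not confirmed against my training code. The function name cross_entropy is only for illustration.

```python
import math


def cross_entropy(prob_true_class: float) -> float:
    """Cross-entropy (natural log) for one sample, given the probability
    the model assigns to the correct class."""
    return -math.log(prob_true_class)


# A two-class model that always outputs 50/50 has this loss per sample.
chance_loss = cross_entropy(0.5)

# Probability on the correct class that would give a per-sample loss of 2.5.
implied_prob = math.exp(-2.5)

print(f"chance-level loss for 2 classes: {chance_loss:.3f}")
print(f"true-class probability implied by loss 2.5: {implied_prob:.3f}")
```

Under that assumption, a loss of 2.5 is well above the chance level of about 0.69, which would mean the model is confidently wrong on many samples, or that the plotted value is not a plain per-sample mean (for example, it is summed over a batch or includes other terms).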

These might be complicated questions to answer, but I would really appreciate it if anyone could help me with this. Thank you!!