I have 3 Tesla V100 GPUs (16 GB each). I am doing transfer learning with EfficientNet (63 million parameters) on images of size (512, 512) with a batch size of 20.

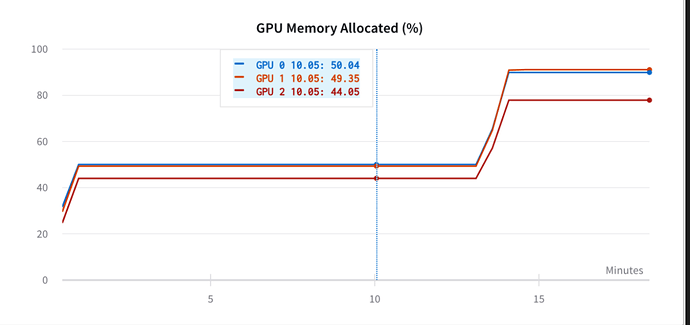

My GPU memory utilisation is below -

As you can see, the memory on all 3 GPUs is nearly full (almost 80% used).

My question: is there a theoretical way to calculate whether the GPU memory usage shown is what the model actually requires for a given image size and batch size, or whether there is a memory leak on my GPUs?
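
To make the question concrete, here is a rough sketch of the only part I know how to estimate by hand: the memory for the model state (weights, gradients, optimizer tensors) and for one input batch. It assumes float32 values, an Adam-style optimizer that keeps two extra tensors per parameter, 3-channel images, and that all 63 million parameters are trainable; none of these are confirmed by my setup above, and the function names are just illustrative. It deliberately ignores the intermediate activations and any framework workspace or caching, which I suspect make up most of the usage but which I do not know how to calculate.

```python
# Back-of-the-envelope estimate of model-state and input-batch memory.
# Assumptions (illustrative, not measured): float32 values, an Adam-style
# optimizer with two extra tensors per parameter, all parameters trainable,
# 3-channel inputs. Activations and framework overhead are NOT included.

BYTES_PER_FLOAT32 = 4


def model_state_bytes(num_params, optimizer_slots=2,
                      bytes_per_value=BYTES_PER_FLOAT32):
    """Bytes for weights + gradients + optimizer slots."""
    copies = 1 + 1 + optimizer_slots  # weights, gradients, optimizer tensors
    return num_params * copies * bytes_per_value


def input_batch_bytes(batch_size, height, width, channels=3,
                      bytes_per_value=BYTES_PER_FLOAT32):
    """Bytes for a single batch of input images."""
    return batch_size * height * width * channels * bytes_per_value


if __name__ == "__main__":
    state = model_state_bytes(63_000_000)
    batch = input_batch_bytes(20, 512, 512)
    print(f"model state: {state / 1e9:.2f} GB")  # about 1.01 GB
    print(f"input batch: {batch / 1e6:.1f} MB")  # about 62.9 MB
```

Under these assumptions the model state and the inputs together come to roughly 1 GB, far less than what the GPUs report, so the remainder would have to be activations, framework overhead, or a leak.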