Hi there! I am currently working on a skin cancer classification problem. The data has 9 target classes, and the model uses a DenseNet architecture trained for 20 epochs. The model file has been saved and I have looked at the evaluation metrics, but I want to be confident in what I am seeing before I create a Flask application and go straight to testing. The highest training accuracy is 97% and the highest validation accuracy is 87%. Does that mean my model is performing well? These are the value counts of each label after applying sampling weights:

| Label | Count |
|---|---|
| AK | 8503 |
| VASC | 8496 |
| unknown | 8493 |
| nevus | 8450 |
| melanoma | 8410 |
| DF | 8400 |
| SCC | 8245 |
| BCC | 8208 |
| BKL | 7443 |
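The post does not say exactly how the sampling weights were applied. A common approach, assumed here, is to weight each training image by the inverse of its class size and draw samples with replacement (the idea behind PyTorch's `WeightedRandomSampler`), which produces roughly equal per-class counts like the ones above. A minimal pure-Python sketch; the helper name `balanced_resample` and the raw class sizes are hypothetical:

```python
import random
from collections import Counter


def balanced_resample(labels, k, seed=0):
    """Draw k labels with replacement, weighting each sample by 1 / (its class size).

    Every class then carries the same total weight, so the drawn counts come out
    roughly equal regardless of how imbalanced the original labels were.
    """
    class_sizes = Counter(labels)
    weights = [1.0 / class_sizes[y] for y in labels]
    rng = random.Random(seed)  # seeded so the draw is reproducible
    return Counter(rng.choices(labels, weights=weights, k=k))


# Hypothetical, heavily imbalanced raw labels (not the author's data).
raw_labels = ["nevus"] * 900 + ["melanoma"] * 300 + ["DF"] * 30
drawn = balanced_resample(raw_labels, k=9000)
print(drawn)  # expect roughly 3000 draws per class
```

One side effect worth keeping in mind: a small class is drawn many times over, so the same few images repeat within each epoch, which can let the model memorize them and widen the gap between training and validation accuracy.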

This is the best record saved with my model file:

best record: [epoch 11], [val loss 0.40036], [val acc 0.87697]
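The best record comes from epoch 11 of 20, which suggests validation performance stopped improving around the middle of training while training accuracy kept climbing toward 97%. One common way to act on that is patience-based early stopping on validation loss. A minimal sketch; the helper `early_stopping_epoch` and the loss values are hypothetical, not taken from this run:

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch), both 1-indexed.

    Training stops once `patience` epochs pass without the validation loss
    improving on the best value seen so far by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch  # new best checkpoint
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch  # no improvement for `patience` epochs
    return best_epoch, len(val_losses)


# Hypothetical per-epoch validation losses.
losses = [0.90, 0.70, 0.60, 0.55, 0.50, 0.52, 0.51, 0.53, 0.54]
print(early_stopping_epoch(losses, patience=3))  # (5, 8)
```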

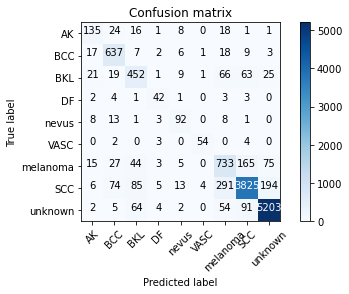

Here are the confusion matrix and the classification report:

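| Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| AK | 0.66 | 0.66 | 0.66 | 204 |
| BCC | 0.79 | 0.91 | 0.85 | 700 |
| BKL | 0.67 | 0.69 | 0.68 | 657 |
| DF | 0.66 | 0.75 | 0.70 | 56 |
| nevus | 0.68 | 0.73 | 0.70 | 126 |
| VASC | 0.90 | 0.86 | 0.88 | 63 |
| melanoma | 0.62 | 0.69 | 0.65 | 1067 |
| SCC | 0.92 | 0.85 | 0.88 | 4497 |
| unknown | 0.95 | 0.96 | 0.95 | 5425 |
| accuracy | | | 0.87 | 12795 |
| macro avg | 0.76 | 0.79 | 0.77 | 12795 |
| weighted avg | 0.88 | 0.87 | 0.87 | 12795 |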
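The gap between the macro average (0.77 F1) and the weighted average and accuracy (0.87) comes from the support column: SCC and unknown together account for more than three quarters of the validation samples, so they dominate the weighted figures, while the macro average gives every class equal weight. The snippet below recomputes both averages from the per-class numbers in the report above as a quick check:

```python
# Per-class (precision, recall, f1, support) copied from the classification report.
report = {
    "AK": (0.66, 0.66, 0.66, 204),
    "BCC": (0.79, 0.91, 0.85, 700),
    "BKL": (0.67, 0.69, 0.68, 657),
    "DF": (0.66, 0.75, 0.70, 56),
    "nevus": (0.68, 0.73, 0.70, 126),
    "VASC": (0.90, 0.86, 0.88, 63),
    "melanoma": (0.62, 0.69, 0.65, 1067),
    "SCC": (0.92, 0.85, 0.88, 4497),
    "unknown": (0.95, 0.96, 0.95, 5425),
}
total = sum(row[3] for row in report.values())  # 12795


def macro_avg(col):
    """Unweighted mean over classes: every class counts equally."""
    return sum(row[col] for row in report.values()) / len(report)


def weighted_avg(col):
    """Support-weighted mean: large classes dominate."""
    return sum(row[col] * row[3] for row in report.values()) / total


for name, col in [("precision", 0), ("recall", 1), ("f1", 2)]:
    print(f"{name}: macro {macro_avg(col):.2f}, weighted {weighted_avg(col):.2f}")
# precision: macro 0.76, weighted 0.88
# recall: macro 0.79, weighted 0.87
# f1: macro 0.77, weighted 0.87

big_share = (report["SCC"][3] + report["unknown"][3]) / total
print(f"share of support in SCC + unknown: {big_share:.1%}")  # 77.5%
```

For single-label classification, the support-weighted recall is by definition equal to overall accuracy, which is why both read 0.87.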

Notice in the confusion matrix that SCC samples are misclassified as melanoma 291 times! This made me take a step back and question my model again, since it seemed a little freaky.
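To judge how alarming the SCC-to-melanoma count is, it helps to express it relative to the size of the true class: 291 out of 4497 SCC validation samples is about 6.5%. The hypothetical helper `top_confusions` below lists the largest off-diagonal cells of a confusion matrix together with each one's share of its true class. Since the full matrix is not reproduced in the text, it is demonstrated on a small made-up matrix:

```python
def top_confusions(cm, labels, k=3):
    """List the k largest off-diagonal cells of a confusion matrix.

    cm[i][j] counts samples whose true class is labels[i] and whose predicted
    class is labels[j]. Each result is (true, predicted, count, share), where
    share is the count divided by the number of samples in the true class.
    """
    cells = []
    for i, row in enumerate(cm):
        row_total = sum(row)
        for j, count in enumerate(row):
            if i != j and count > 0:
                cells.append((labels[i], labels[j], count, count / row_total))
    cells.sort(key=lambda cell: cell[2], reverse=True)
    return cells[:k]


# Share of SCC samples predicted as melanoma, using the numbers from the post.
print(f"{291 / 4497:.1%}")  # 6.5%

# Made-up 3-class matrix purely to demonstrate the helper.
toy_labels = ["A", "B", "C"]
toy_cm = [
    [50, 3, 1],
    [10, 80, 5],
    [0, 2, 40],
]
for true, pred, count, share in top_confusions(toy_cm, toy_labels):
    print(f"{true} -> {pred}: {count} ({share:.1%} of {true})")
```

Reading the melanoma column the same way (how many predicted-melanoma samples actually belong to each true class) is a useful complement, since melanoma has the lowest precision in the report (0.62).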

I would really appreciate it if anyone could share some general observations on the model. This would help me decide what to change to get better results. Thanks a lot for the help!