Hello teachers.

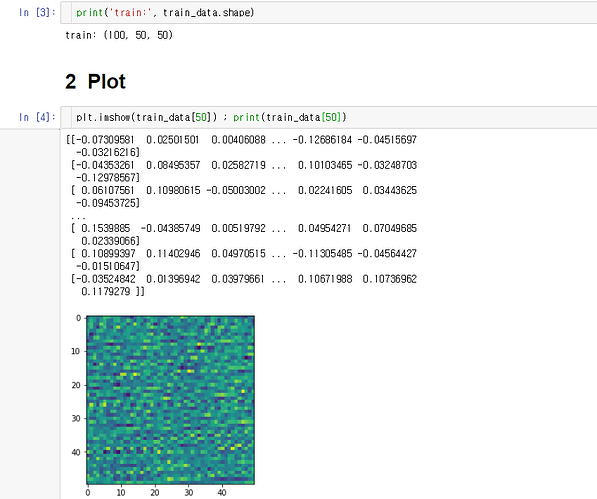

Before building a PyTorch Dataset and DataLoader, I created wavelet-transformed images from a signal.

type : numpy array

dimension : (100,50,50), i.e. 100 images of 50x50

value : around -0.01~0.01

After loading the .npy file with the np.load function,

Please help me

Have a good day!

You don’t have to use regular images in your Dataset so your custom numpy arrays should be fine.

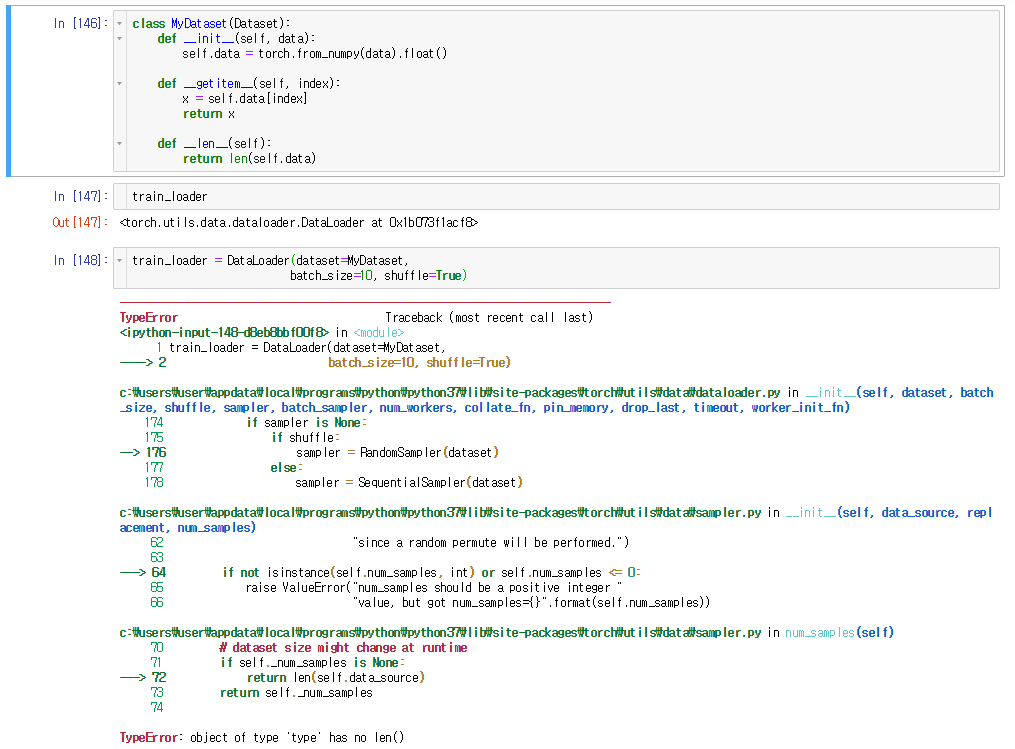

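    import torch
    from torch.utils.data import Dataset

    class MyDataset(Dataset):
        def __init__(self, data):
            # Convert the numpy array to a float tensor once, up front.
            self.data = torch.from_numpy(data).float()

        def __getitem__(self, index):
            # Return a single sample.
            x = self.data[index]
            return x

        def __len__(self):
            return len(self.data)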

I’m not sure what your targets are, so you might want to pass them to the Dataset as well, or compute them in __getitem__.
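A minimal sketch of the first option, passing the targets into the Dataset together with the data. The class name LabeledDataset, the random stand-in array, and the binary labels are assumptions made up for illustration; they are not from the original question.

```python
import numpy as np
import torch
from torch.utils.data import Dataset, DataLoader


class LabeledDataset(Dataset):
    """Hypothetical variant of MyDataset that also stores targets."""

    def __init__(self, data, targets):
        self.data = torch.from_numpy(data).float()
        self.targets = torch.from_numpy(targets).long()

    def __getitem__(self, index):
        # Return one (sample, target) pair.
        return self.data[index], self.targets[index]

    def __len__(self):
        return len(self.data)


# Stand-in for the array returned by np.load: 100 images of 50x50, values near zero.
data = np.random.uniform(-0.01, 0.01, size=(100, 50, 50))
# Assumed binary labels, one per image.
targets = np.random.randint(0, 2, size=100)

dataset = LabeledDataset(data, targets)
loader = DataLoader(dataset, batch_size=10, shuffle=True)

x, y = next(iter(loader))
print(x.shape, y.shape)  # torch.Size([10, 50, 50]) torch.Size([10])
```

If the targets can be derived from each sample, the same structure works with the computation done inside __getitem__ instead of storing a second array.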


Hi Ptrblck,

The error says: object of type ‘type’ has no len()

What I want to do is feed in images of size 50x50 and

I’m wondering whether I need to reshape (100,30,30) into (100,1,30,30), because the input dimensions are (batch size, channels, height, width) in all the PyTorch tutorials.
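For reference, a minimal sketch of adding the channel dimension, assuming the images are single-channel and will go into a layer such as nn.Conv2d, which takes batched input as (batch size, channels, height, width). The zero-filled array is only a stand-in with the shape mentioned above.

```python
import numpy as np
import torch

# Stand-in array with the shape from the question: 100 images of 30x30.
images = np.zeros((100, 30, 30), dtype=np.float32)

# Option 1: add the channel axis in NumPy before converting to a tensor.
with_channel_np = np.expand_dims(images, axis=1)

# Option 2: convert first, then add the channel axis on the tensor.
with_channel_pt = torch.from_numpy(images).unsqueeze(1)

print(with_channel_np.shape)  # (100, 1, 30, 30)
print(with_channel_pt.shape)  # torch.Size([100, 1, 30, 30])
```

Either option can go in the Dataset's __init__, so that each sample comes out as (1, height, width) and the DataLoader stacks them into (batch size, 1, height, width).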

Thanks again!!

tom

July 19, 2019, 12:00pm


You want to pass an instance of the dataset to the dataloader constructor, not the type.
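A minimal sketch of the difference, assuming the MyDataset class from earlier in the thread and a zero-filled stand-in for the loaded array. The error in the post is what len() raises when it is handed the class itself rather than an object created from it.

```python
import numpy as np
import torch
from torch.utils.data import Dataset, DataLoader


class MyDataset(Dataset):
    def __init__(self, data):
        self.data = torch.from_numpy(data).float()

    def __getitem__(self, index):
        return self.data[index]

    def __len__(self):
        return len(self.data)


data = np.zeros((100, 50, 50))  # stand-in for the loaded .npy array

# The type: __len__ is defined for instances, so len() on the class fails.
type_error_raised = False
try:
    len(MyDataset)
except TypeError as err:
    type_error_raised = True
    print(err)

# An instance: create the dataset object first, then hand it to the DataLoader.
dataset = MyDataset(data)
loader = DataLoader(dataset, batch_size=10, shuffle=True)
print(len(loader))  # 10
```

The batch size of 10 is an arbitrary choice; with 100 samples it gives 10 batches per epoch.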

Best regards

Thomas

Thank you very much, tom,

but I’m afraid I still don’t know how to modify the code to pass an instance in this case…

Regards,

David

I solved it with dataset = MyDataset()

Thank you very much
