Hi, I am trying to print the validation loss alongside the training loss, but my function only prints the training loss. It raises no error when I call it; it just never prints the validation loss.

Here is the code:

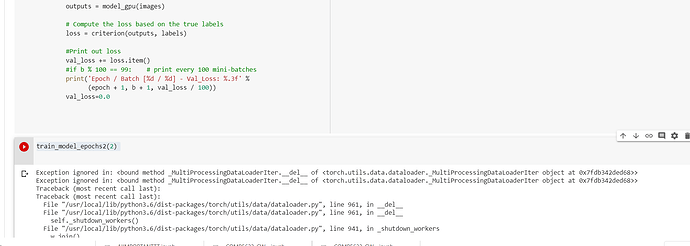

```python
import torch

# train_loader, valid_loader, model_gpu, criterion, optimizer and device
# are defined elsewhere in my script.
def train_model_epochs2(num_epochs):
    for epoch in range(num_epochs):
        running_loss = 0.0
        for i, data in enumerate(train_loader, 0):
            images, labels = data
            # Explicitly specifies that data is to be copied onto the device!
            images = images.to(device)
            labels = labels.to(device)

            optimizer.zero_grad()
            outputs = model_gpu(images)
            loss = criterion(outputs, labels)
            loss.backward()
            optimizer.step()

            running_loss += loss.item()
            if i % 100 == 99:  # print every 100 mini-batches
                print('Epoch / Batch [%d / %d] - Loss: %.3f' %
                      (epoch + 1, i + 1, running_loss / 100))
                running_loss = 0.0

        val_loss = 0.0
        for b, vdata in enumerate(valid_loader, 0):
            with torch.no_grad():
                images, labels = vdata
                images = images.to(device)
                labels = labels.to(device)
                outputs = model_gpu(images)
                # Compute the loss based on the true labels
                loss = criterion(outputs, labels)
                val_loss += loss.item()

            if b % 100 == 99:  # print every 100 mini-batches
                print('Epoch / Batch [%d / %d] - Val_Loss: %.3f' %
                      (epoch + 1, b + 1, val_loss / 100))
                val_loss = 0.0
```
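One thing worth checking: `b % 100 == 99` is only true at validation batches 100, 200, and so on. If `valid_loader` yields fewer than 100 batches per epoch (for example, a small validation split or a large batch size), that `print` never runs and no error is raised. The plain-Python sketch below uses two hypothetical helpers, `print_points` and `epoch_average`, to show when that condition fires and the alternative of reporting one averaged value per epoch. It assumes nothing about the real loaders beyond their batch count.

```python
def print_points(num_batches, every=100):
    """Return the 1-based batch numbers at which `b % every == every - 1` is true."""
    return [b + 1 for b in range(num_batches) if b % every == every - 1]


def epoch_average(batch_losses):
    """Average the per-batch losses; intended to be printed once after the loop."""
    if not batch_losses:
        return float("nan")  # no batches at all: nothing meaningful to report
    return sum(batch_losses) / len(batch_losses)


print(print_points(79))   # a loader with 79 batches never triggers the print
print(print_points(250))  # 250 batches trigger it at batches 100 and 200
print(epoch_average([1.0, 2.0, 3.0]))
```

In the PyTorch loop, the analogous change would be to drop the `b % 100` check and print `val_loss / len(valid_loader)` once after the validation loop finishes (assuming `valid_loader` wraps a dataset with a defined length).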