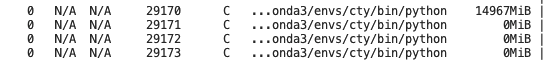

Today I switched to a different server to train my network, but the video memory usage it displays confuses me a lot.

What's wrong with my code (which part of it should I provide?), and how can I fix it? Thanks for your questions and replies!

Could you explain a bit more what issue you are seeing, please?

Thanks for your reply. I am training my network with DDP on 4 cards. As the figure and the output of nvidia-smi show, there are another 3 processes on each card, but their video memory usage is 0. BTW, the same code runs normally on another server with 8 A5000 GPUs, without this behavior.

Do you see any training progress on this machine? If so, could you print the .device attribute of some tensors to check if your script is using all GPUs?
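For the device check suggested above, a minimal sketch could look like the following. The helper names `describe_placement` and `report_model` are made up for illustration, and the code only assumes that each tensor exposes a `.device` attribute and that the model has `named_parameters()`, as `torch.nn.Module` does.

```python
def describe_placement(named_tensors, rank):
    """Return one line per tensor, stating which device it lives on.

    `named_tensors` is any iterable of (name, tensor) pairs, for example
    the result of model.named_parameters().
    """
    return [
        f"rank {rank}: {name} -> {tensor.device}"
        for name, tensor in named_tensors
    ]


def report_model(model, rank):
    """Print the placement of every parameter of `model` for this rank."""
    for line in describe_placement(model.named_parameters(), rank):
        # flush so output from several processes is not held back in buffers
        print(line, flush=True)
```

Calling `report_model(model, rank)` once in every process, right after the model has been moved to its GPU, should show whether each rank really uses its own card or whether several ranks end up on the same one.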

I guess the problem is not caused by particular tensors, because the video memory usage is 0. I think it is caused by some initial setup, such as torch.cuda.set_device().
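If the initial setup is the suspect, it may help to compare the script against a typical per-process setup. The sketch below assumes the job is launched with `torchrun`, which sets the `LOCAL_RANK` environment variable for every process, and that the NCCL backend is used. The function names `local_rank_from_env` and `setup_process` are hypothetical, and this is not necessarily how the original script is organized.

```python
import os


def local_rank_from_env(env=os.environ):
    """Read LOCAL_RANK (set by torchrun); fall back to 0 for a single process."""
    return int(env.get("LOCAL_RANK", 0))


def setup_process():
    """Bind this process to its own GPU before any other CUDA work happens."""
    # Imported here so the helper above can be used without PyTorch installed.
    import torch
    import torch.distributed as dist

    local_rank = local_rank_from_env()
    # Make cuda:<local_rank> the default device of this process.
    torch.cuda.set_device(local_rank)
    # Assumes the launcher provides the rendezvous environment variables.
    dist.init_process_group(backend="nccl")
    return torch.device("cuda", local_rank)
```

The device returned by `setup_process()` would then be used for `model.to(device)` and passed as `device_ids=[device.index]` when wrapping the model in `DistributedDataParallel`.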

If I use N cards, I have N-1 processes which have 0 video memory usage.