Hi all,

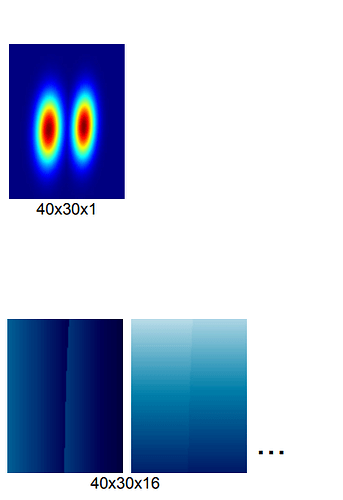

I am trying to implement this paper: https://arxiv.org/pdf/2003.03522.pdf, where they employ a detection head to estimate the object's normal distribution and a regression head to estimate the offset vectors of the object's keypoints. The image above shows an example.

I am having a hard time implementing the regression loss: l1_loss(offset(p) + p - ground_truth(K_n)), where K_n is the nth keypoint, p is the centroid, and offset(p) is the offset vectors predicted at p.

I have the offset vectors and the centroid p. However, in the loss function there may be several centroids p, each of which needs to be added to its own offset vectors. How would I go about this while avoiding for-loops?
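One loop-free way to do the "offset(p) + p" step is to build a grid of pixel coordinates once and add it to the offset map by broadcasting, so every location p gets its own coordinate added. The sketch below is only an illustration under assumptions that are not in the paper or my code: the function names, the (B, 2*K, H, W) layout with channels ordered (x_1, y_1, ..., x_K, y_K), and targets stored as absolute keypoint coordinates at every pixel are all hypothetical.

```python
import torch


def make_pixel_grid(height, width, device=None):
    """Return a (1, 2, H, W) tensor holding the (x, y) coordinate of every pixel."""
    ys = torch.arange(height, dtype=torch.float32, device=device)
    xs = torch.arange(width, dtype=torch.float32, device=device)
    grid_y, grid_x = torch.meshgrid(ys, xs, indexing="ij")
    return torch.stack((grid_x, grid_y), dim=0).unsqueeze(0)


def keypoint_regression_loss(offset_preds, keypoint_targets, gauss_targets, threshold=0.9):
    """L1 loss between (offset(p) + p) and the keypoints, over centroid pixels only.

    Assumed (hypothetical) shapes:
      offset_preds:     (B, 2*K, H, W), channels ordered (x_1, y_1, ..., x_K, y_K)
      keypoint_targets: (B, 2*K, H, W), absolute keypoint coordinates at each pixel
      gauss_targets:    (B, 1, H, W)
    """
    _, channels, height, width = offset_preds.shape
    grid = make_pixel_grid(height, width, device=offset_preds.device)  # (1, 2, H, W)
    grid = grid.repeat(1, channels // 2, 1, 1)                         # (1, 2*K, H, W)

    # offset(p) + p for every pixel p at once; the grid broadcasts over the batch.
    keypoint_preds = offset_preds + grid

    # Keep only pixels that count as centroids (gauss_target > threshold).
    mask = (gauss_targets > threshold).to(offset_preds.dtype)          # (B, 1, H, W)
    abs_err = (keypoint_preds - keypoint_targets).abs() * mask

    # Average over the masked terms only; clamp avoids dividing by zero.
    num_terms = (mask.sum() * channels).clamp(min=1.0)
    return abs_err.sum() / num_terms
```

Note that this averages over the centroid pixels only, whereas `F.l1_loss` with its default `reduction='mean'` divides by the total number of elements, including the ones zeroed out by a mask.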

My current implementation looks like this:

import torch.nn as nn
import torch.nn.functional as F


class Loss(nn.Module):
    def __init__(self):
        super().__init__()

    def forward(self, vector_preds, vector_targets, gauss_preds, gauss_targets):
        # Move channels last: (B, C, H, W) -> (B, H, W, C)
        vector_targets = vector_targets.permute(0, 2, 3, 1)
        gauss_targets = gauss_targets.permute(0, 2, 3, 1)
        vector_preds = vector_preds.permute(0, 2, 3, 1)
        gauss_preds = gauss_preds.permute(0, 2, 3, 1)

        E = 0.9
        gauss_mask = gauss_targets > E

        # only want to calculate offsets from centroids (gauss_target > 0.9)
        masked_vector_preds = gauss_mask * vector_preds
        masked_vector_targets = gauss_mask * vector_targets

        # L1 on the masked offset vectors, MSE on the Gaussian heatmap
        vector_loss = F.l1_loss(masked_vector_preds, masked_vector_targets)
        gauss_loss = F.mse_loss(gauss_preds, gauss_targets)

        loss = vector_loss + gauss_loss
        return loss, vector_loss, gauss_loss

However, I am unsure whether I am now just minimizing the difference between the vectors, and therefore not taking the centroid(s) of the object into account, or whether that even matters in this case.
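One thing that can be checked numerically: if the offset targets were built as K_n - p for every pixel p (an assumption about how the targets are generated, not something stated above), then offset(p) + p - K_n is the same quantity as offset(p) - (K_n - p), so an L1 loss on the offset vectors already contains the centroid. A small sketch with made-up tensors and hypothetical names:

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
K, H, W = 2, 3, 4  # hypothetical number of keypoints and map size

# Pixel coordinates p for every location, tiled to (2*K, H, W) as (x, y, x, y, ...).
ys, xs = torch.meshgrid(
    torch.arange(H, dtype=torch.float32),
    torch.arange(W, dtype=torch.float32),
    indexing="ij",
)
p = torch.stack((xs, ys), dim=0).repeat(K, 1, 1)

# Made-up absolute keypoint coordinates, identical at every pixel.
keypoints = (torch.rand(2 * K, 1, 1) * 4).expand(2 * K, H, W)

# Assumption: offset targets are stored as K_n - p.
offset_targets = keypoints - p
offset_preds = torch.randn(2 * K, H, W)

loss_on_offsets = F.l1_loss(offset_preds, offset_targets)
loss_on_keypoints = F.l1_loss(offset_preds + p, keypoints)

# The two formulations agree up to floating-point rounding.
same = torch.allclose(loss_on_offsets, loss_on_keypoints, atol=1e-6)
```

If the targets are stored in some other form (for example absolute keypoint positions), the two losses would differ and p would have to be added explicitly.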

Hope you can help