I’m building a BERT language model from scratch for educational purposes. Constructing the model itself went smoothly, but I ran into trouble with the data processing pipeline, and one issue in particular has me stuck.

Overview:

I am working with the IMDB dataset, treating each review as a document. Each document can be segmented into several sentences using punctuation marks (. ! ?). Each data sample consists of a sentence A, a sentence B, and an is_next label indicating whether the two sentences are consecutive. This implies that from each document (review), I can generate multiple training samples.
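To make the sample format concrete, here is a minimal sketch of how one review might be turned into several (sentence A, sentence B, is_next) samples. The function names `split_sentences` and `make_nsp_samples`, the 50/50 split between consecutive and random pairs, and the `all_sentences` pool used for negatives are my own assumptions for illustration, not something fixed by the setup above.

```python
import random
import re


def split_sentences(review):
    """Split a review into sentences after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", review.strip())
    return [p for p in parts if p]


def make_nsp_samples(review, all_sentences, rng):
    """Return a list of (sentence_a, sentence_b, is_next) tuples for one review.

    Assumption: each consecutive pair becomes a positive sample with
    probability 0.5; otherwise sentence_b is drawn at random from
    `all_sentences` and labelled 0. A random draw could occasionally hit
    the true next sentence; this sketch does not guard against that.
    """
    sentences = split_sentences(review)
    samples = []
    for i in range(len(sentences) - 1):
        if rng.random() < 0.5:
            samples.append((sentences[i], sentences[i + 1], 1))
        else:
            samples.append((sentences[i], rng.choice(all_sentences), 0))
    return samples
```

A review with n sentences produces n - 1 samples here, which is why the number of samples per document varies.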

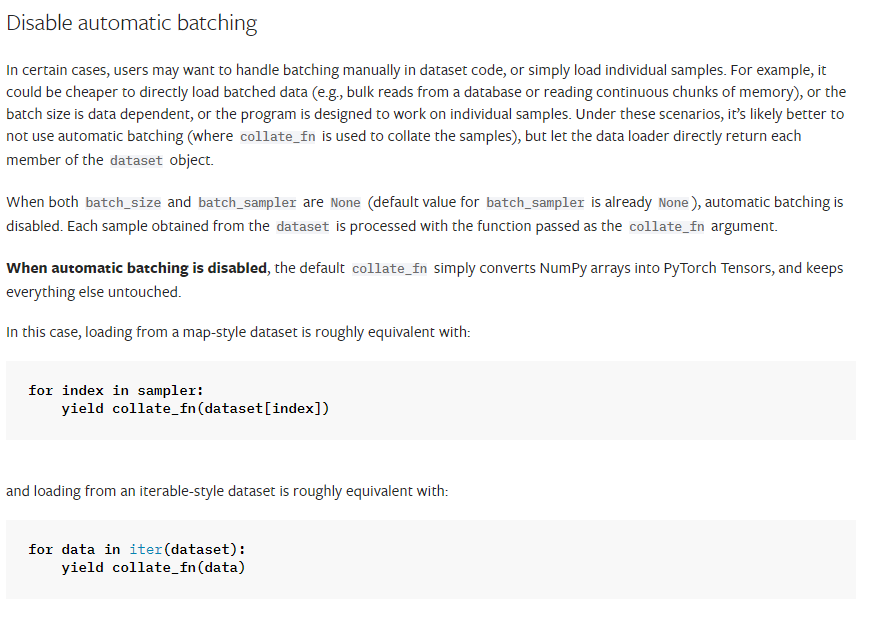

I am utilizing PyTorch and attempting to leverage the DataLoader for handling multiprocessing and parallelism.

The Problem:

The `__getitem__` method of the `Dataset` class is designed to return a single training sample per index. In my scenario, however, each index refers to a document (review), and each document can yield a variable number of training samples that is not known in advance.
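One workaround I can imagine is to flatten the index up front so that every index maps to exactly one sentence pair instead of one document. The sketch below assumes the reviews fit in memory and can be scanned once at construction time; the class name `FlatPairIndex` is hypothetical, and it only returns consecutive (positive) pairs, leaving negative sampling out for brevity. It is plain Python with `__len__` and `__getitem__`, which is the interface a map-style dataset is expected to provide.

```python
import re


class FlatPairIndex:
    """Map-style dataset sketch: one index per consecutive-sentence pair."""

    def __init__(self, reviews):
        self.docs = [self._split(r) for r in reviews]
        # One (doc_idx, sent_idx) entry for every sentence that has a successor.
        self.index = [
            (d, s)
            for d, sents in enumerate(self.docs)
            for s in range(len(sents) - 1)
        ]

    @staticmethod
    def _split(review):
        # Split after '.', '!' or '?' followed by whitespace.
        return [p for p in re.split(r"(?<=[.!?])\s+", review.strip()) if p]

    def __len__(self):
        return len(self.index)

    def __getitem__(self, i):
        d, s = self.index[i]
        sents = self.docs[d]
        # Positive pair only; label 1 means sentence_b follows sentence_a.
        return sents[s], sents[s + 1], 1
```

The cost of this design is the upfront pass over all documents, which may not be acceptable for a corpus that is too large to pre-scan.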

The Question:

Is there a recommended way to handle such a situation? Alternatively, I am considering the following approach:

For each index, an undefined number of samples are returned to the DataLoader. The DataLoader would then assess whether the number of samples is sufficient to form a batch. Here are the three possible cases:

- The number of samples returned for an index is less than the batch size. In this case, the `DataLoader` fetches additional samples from the next index (next document), and any excess is retained to form the next batch.
- The number of samples returned for an index equals the batch size. In this case, the batch is passed straight to the model.
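The buffering behaviour I have in mind can be sketched in plain Python as follows. This is not `DataLoader` code; `batches_across_documents` is a hypothetical helper, and `per_document_samples` is assumed to be any iterable that yields one list of samples per document.

```python
def batches_across_documents(per_document_samples, batch_size):
    """Yield fixed-size batches, carrying leftover samples over to the next document."""
    buffer = []
    for samples in per_document_samples:
        buffer.extend(samples)
        # Emit as many full batches as the buffer currently holds.
        while len(buffer) >= batch_size:
            yield buffer[:batch_size]
            buffer = buffer[batch_size:]
    if buffer:
        # Final batch may be smaller than batch_size.
        yield buffer


if __name__ == "__main__":
    docs = [[1, 2], [3, 4, 5, 6, 7], [8]]
    for batch in batches_across_documents(docs, batch_size=3):
        print(batch)
```

In PyTorch, similar logic could presumably live in the `__iter__` of a `torch.utils.data.IterableDataset` that yields one sample at a time, leaving the grouping to the `DataLoader`'s `batch_size`. With `num_workers > 0`, each worker receives its own copy of the dataset, so the documents would need to be split between workers (for example using `torch.utils.data.get_worker_info()`) to avoid duplicated samples.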

I appreciate any guidance or insights into implementing this dynamic data sampling approach with PyTorch DataLoader.