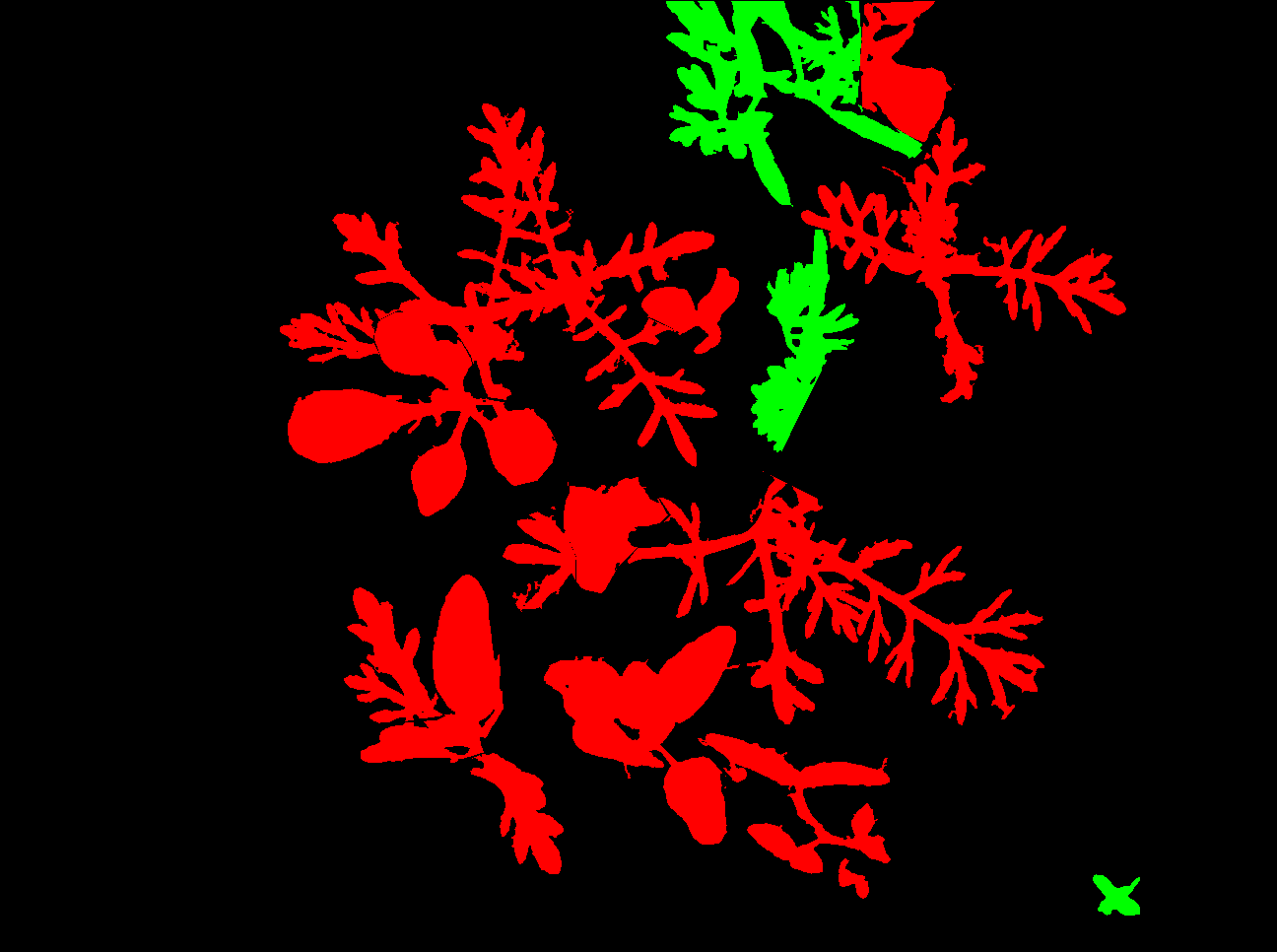

Hi, I am using PSPNet for image segmentation, but I am getting this error:

```
Iter:1
x torch.Size([1, 3, 256, 256])
conv1 torch.Size([1, 64, 256, 256])
layer1 torch.Size([1, 256, 256, 256])
layer2 torch.Size([1, 512, 256, 256])
layer3 torch.Size([1, 1024, 256, 256])
layer4 torch.Size([1, 2048, 256, 256])
/opt/anaconda/lib/python3.6/site-packages/torch/nn/modules/upsampling.py:180: UserWarning: nn.UpsamplingBilinear2d is deprecated. Use nn.Upsample instead.
  warnings.warn("nn.UpsamplingBilinear2d is deprecated. Use nn.Upsample instead.")
---------------------------------------------------------------------------
RuntimeError                              Traceback (most recent call last)
<ipython-input-75-a24d68a5b61a> in <module>()
      8         targets = Variable(labels)
      9 
---> 10         outputs = model(inputs)
     11         optimizer.zero_grad()
     12         print("outputs size ==> ",outputs.size())

/opt/anaconda/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)
    323         for hook in self._forward_pre_hooks.values():
    324             hook(self, input)
--> 325         result = self.forward(*input, **kwargs)
    326         for hook in self._forward_hooks.values():
    327             hook_result = hook(self, input, result)

<ipython-input-7-8f80fe0445f5> in forward(self, x)
     60             self.layer5c(x),
     61             self.layer5d(x),
---> 62         ], 1))
     63 
     64         print('final', x.size())

RuntimeError: inconsistent tensor sizes at /opt/conda/conda-bld/pytorch_1513368888240/work/torch/lib/TH/generic/THTensorMath.c:2864
```
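The message `inconsistent tensor sizes` is raised by `torch.cat`. Its shape rule, as documented, is that all inputs must have the same size in every dimension except the one being concatenated along (here dimension 1, the channels), and the sizes along that dimension are summed. A minimal pure-Python sketch of that rule follows; `cat_shape` is a hypothetical helper for illustration, not a PyTorch function, and the 60×60 branch size is only an example value.

```python
def cat_shape(shapes, dim):
    """Return the shape torch.cat would produce, or raise ValueError.

    Hypothetical helper mirroring torch.cat's shape rule: every dimension
    except `dim` must match; the sizes along `dim` are added up.
    """
    first = shapes[0]
    for s in shapes[1:]:
        if len(s) != len(first):
            raise ValueError("different number of dimensions: %s vs %s" % (first, s))
        for d, (a, b) in enumerate(zip(first, s)):
            if d != dim and a != b:
                raise ValueError(
                    "inconsistent tensor sizes: %s vs %s (dimension %d)" % (first, s, d))
    out = list(first)
    out[dim] = sum(s[dim] for s in shapes)
    return tuple(out)


# layer4 output from the log plus a branch with the same height/width: fine
print(cat_shape([(1, 2048, 256, 256), (1, 512, 256, 256)], 1))  # (1, 2560, 256, 256)

# a branch with a different height/width (60x60 is just an example) fails
try:
    cat_shape([(1, 2048, 256, 256), (1, 512, 60, 60)], 1)
except ValueError as err:
    print(err)
```

So the dimension argument `1` itself is fine; the check fails when any of the concatenated tensors differs from `x` in height or width.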

This is my network code:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
from torchvision import models

# PSPDec is defined elsewhere (not shown in this post)


class PSPNet(nn.Module):

    def __init__(self, num_classes):
        #super(PSPNet,self).__init__()
        super().__init__()

        resnet = models.resnet101(pretrained=True)

        self.conv1 = resnet.conv1
        self.layer1 = resnet.layer1
        self.layer2 = resnet.layer2
        self.layer3 = resnet.layer3
        self.layer4 = resnet.layer4

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                m.stride = 1
                m.requires_grad = False
            if isinstance(m, nn.BatchNorm2d):
                m.requires_grad = False

        self.layer5a = PSPDec(2048, 512, 60)
        self.layer5b = PSPDec(2048, 512, 30)
        self.layer5c = PSPDec(2048, 512, 20)
        self.layer5d = PSPDec(2048, 512, 10)

        self.final = nn.Sequential(
            nn.Conv2d(2048, 512, 3, padding=1, bias=False),
            nn.BatchNorm2d(512, momentum=.95),
            nn.ReLU(inplace=True),
            nn.Dropout(.1),
            nn.Conv2d(512, num_classes, 1),
        )

    def forward(self, x):
        print('x', x.size())
        x = self.conv1(x)
        print('conv1', x.size())
        x = self.layer1(x)
        print('layer1', x.size())
        x = self.layer2(x)
        print('layer2', x.size())
        x = self.layer3(x)
        print('layer3', x.size())
        x = self.layer4(x)
        print('layer4', x.size())

        x = self.final(torch.cat([
            x,
            self.layer5a(x),
            self.layer5b(x),
            self.layer5c(x),
            self.layer5d(x),
        ], 1))

        print('final', x.size())

        return F.upsample_bilinear(final, x.size()[2:])
```
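The loop in `__init__` that sets `m.stride = 1` on every `nn.Conv2d` explains why every size in the log stays at 256×256. The sketch below applies the convolution output-size formula from the `nn.Conv2d` documentation, out = floor((in + 2·padding − dilation·(kernel − 1) − 1) / stride) + 1, to the stem conv and the first strided 3×3 conv of each stage. The geometry is an assumption based on torchvision's ResNet (7×7 stem with padding 3 and stride 2, 3×3 convs with padding 1, stage strides 1, 2, 2, 2); the max-pool is left out because the code above never uses `resnet.maxpool`. `trace` is a hypothetical helper.

```python
def conv_out(size, kernel, stride=1, padding=0, dilation=1):
    """Output length of a convolution along one spatial axis (Conv2d formula)."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def trace(size, strides):
    """Follow the spatial size through conv1 and layer1..layer4 (hypothetical helper).

    Assumes torchvision ResNet geometry: a 7x7 stem conv with padding 3, then
    per stage one 3x3 conv with padding 1 that carries the stage stride (the
    other convs in a stage have stride 1 and keep the size unchanged).
    """
    size = conv_out(size, 7, stride=strides[0], padding=3)  # conv1
    sizes = [size]
    for s in strides[1:]:  # layer1 .. layer4
        size = conv_out(size, 3, stride=s, padding=1)
        sizes.append(size)
    return sizes


print(trace(256, [2, 1, 2, 2, 2]))  # default strides: [128, 128, 64, 32, 16]
print(trace(256, [1, 1, 1, 1, 1]))  # all strides forced to 1: [256, 256, 256, 256, 256]
```

The second list matches the 256×256 sizes printed in the log, so `x` reaches the `torch.cat` call at the full input resolution.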

And this is how I run it:

```python
for epoch in range(1, num_epochs+1):
    epoch_loss = []
    iteration=1
    for step, (images, labels) in enumerate(trainLoader):
        print("Iter:"+str(iteration))
        iteration=iteration+1
        inputs = Variable(images)
        targets = Variable(labels)

        outputs = model(inputs)
        optimizer.zero_grad()
        print("outputs size ==> ",outputs.size())
        print("targets[:, 0] size ==> ",targets[:, 0].size())
        loss = criterion(outputs, targets[:, 0])
        loss.backward()
        optimizer.step()
        epoch_loss.append(loss.data[0])
        average = sum(epoch_loss) / len(epoch_loss)
        print("loss: "+str(average)+" epoch: "+str(epoch)+", step: "+str(step))
```
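The loop passes `outputs` and `targets[:, 0]` to `criterion`, which is not shown. Assuming it is a per-pixel classification loss such as `nn.CrossEntropyLoss` or `nn.NLLLoss`, PyTorch expects the output as (N, C, H, W) and the target as (N, H, W) of class indices. Assuming also that `labels` arrive as (N, 1, H, W), indexing `[:, 0]` drops the singleton channel. A small pure-Python sketch of that shape check follows; `loss_shapes_ok` and the class count 21 are hypothetical.

```python
def loss_shapes_ok(output_shape, label_shape):
    """Compare an (N, C, H, W) output with (N, 1, H, W) labels after [:, 0].

    Hypothetical helper. Assumes a per-pixel classification loss that
    wants targets of shape (N, H, W).
    """
    # targets[:, 0] keeps dim 0 and drops dim 1
    target_shape = (label_shape[0],) + tuple(label_shape[2:])
    n, c, h, w = output_shape
    return target_shape == (n, h, w)


# 21 classes is an arbitrary example value
print(loss_shapes_ok((1, 21, 256, 256), (1, 1, 256, 256)))  # True
print(loss_shapes_ok((1, 21, 60, 60), (1, 1, 256, 256)))    # False
```

Under these assumptions, the model's output has to be brought back to the input's height and width before it reaches the loss.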

The error comes from the dimension argument `1` of this `torch.cat` call:

```python
x = self.final(torch.cat([
    x,
    self.layer5a(x),
    self.layer5b(x),
    self.layer5c(x),
    self.layer5d(x),
], 1))  # <-- the dimension argument 1
```
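Two things have to line up for this call and the `self.final` that follows it: each pyramid branch must return the same height and width as `x` (256×256 per the log), and the concatenated channel count must equal the input channels of the first conv in `self.final`. The channel arithmetic follows directly from the code: 2048 + 4 × 512 = 4096, while `self.final` starts with `nn.Conv2d(2048, ...)`. Because `PSPDec` is not shown, the sketch below treats each branch's output size `branch_hw` as an input; the 60×60 value is an assumed example, not taken from the post. `check_pyramid_cat` is a hypothetical helper.

```python
def check_pyramid_cat(x_shape, branch_channels, branch_hw, n_branches, final_in_channels):
    """Return a list of shape problems (empty if everything lines up).

    Hypothetical helper.
    x_shape: (N, C, H, W) of layer4's output.
    branch_channels, branch_hw: assumed output of each PSPDec branch.
    final_in_channels: in_channels of the first Conv2d in self.final.
    """
    n, c, h, w = x_shape
    problems = []
    if (h, w) != tuple(branch_hw):
        problems.append("spatial mismatch: x is %dx%d, branches are %dx%d"
                        % (h, w, branch_hw[0], branch_hw[1]))
    total = c + n_branches * branch_channels
    if total != final_in_channels:
        problems.append("channel mismatch: cat gives %d channels, final conv expects %d"
                        % (total, final_in_channels))
    return problems


# x shape from the log; 512 channels x 4 branches and 2048 from PSPNet.__init__;
# the 60x60 branch size is an assumption for illustration
for problem in check_pyramid_cat((1, 2048, 256, 256), 512, (60, 60), 4, 2048):
    print(problem)
```

If the branch size differs from `x`, the spatial check fails first, which matches the `inconsistent tensor sizes` error. Even with matching sizes, the channel check suggests the first conv of `self.final` would still reject a 4096-channel input, so it is worth verifying both against the actual `PSPDec` code.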

Can anyone please help me solve this problem?

Thanks in advance.