I’m trying to implement a DCGAN on a dataset of 64x64 images, heavily inspired by the DCGAN example in the PyTorch GitHub repository, but I’ve been unable to increase the number of filters (output channels) in the convolutional layers of the discriminator and generator. The implementation works with a maximum of 512 filters (e.g. `Conv2d(256, 512, kernel_size, stride)`), but I have had no luck increasing that maximum to 1024.

The example's discriminator, which I use in my implementation, is as follows:

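```python
import torch.nn as nn

nc = 3    # number of channels in the input images (RGB)
ndf = 64  # base number of discriminator filters

class Discriminator(nn.Module):
    def __init__(self):
        super().__init__()
        self.main = nn.Sequential(
            # input is (nc) x 64 x 64
            nn.Conv2d(nc, ndf, 4, 2, 1, bias=False),
            nn.LeakyReLU(0.2, inplace=True),
            # state size. (ndf) x 32 x 32
            nn.Conv2d(ndf, ndf * 2, 4, 2, 1, bias=False),
            nn.BatchNorm2d(ndf * 2),
            nn.LeakyReLU(0.2, inplace=True),
            # state size. (ndf*2) x 16 x 16
            nn.Conv2d(ndf * 2, ndf * 4, 4, 2, 1, bias=False),
            nn.BatchNorm2d(ndf * 4),
            nn.LeakyReLU(0.2, inplace=True),
            # state size. (ndf*4) x 8 x 8
            nn.Conv2d(ndf * 4, ndf * 8, 4, 2, 1, bias=False),
            nn.BatchNorm2d(ndf * 8),
            nn.LeakyReLU(0.2, inplace=True),
            # state size. (ndf*8) x 4 x 4
            nn.Conv2d(ndf * 8, 1, 4, 1, 0, bias=False),
            nn.Sigmoid()
            # output size. 1 x 1 x 1
        )

    def forward(self, input):
        # Returns a (batch, 1, 1, 1) tensor of real/fake probabilities
        return self.main(input)
```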
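The `# state size` comments follow from the `Conv2d` output-size formula in the PyTorch documentation, which for dilation 1 is out = floor((in + 2·padding − kernel) / stride) + 1. A minimal plain-Python sketch that traces the spatial size through the layers above (the helper `conv2d_out` and the list of `(kernel, stride, padding)` tuples are illustrative, not part of the example):

```python
def conv2d_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of nn.Conv2d with dilation=1 (formula from the PyTorch docs)."""
    return (size + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) of each Conv2d in the discriminator, in order
disc_layers = [(4, 2, 1), (4, 2, 1), (4, 2, 1), (4, 2, 1), (4, 1, 0)]

size = 64  # input images are 64 x 64
sizes = [size]
for kernel, stride, padding in disc_layers:
    size = conv2d_out(size, kernel, stride, padding)
    sizes.append(size)

print(sizes)  # [64, 32, 16, 8, 4, 1]
```

Each `(4, 2, 1)` block halves the feature map, so the final `(4, 1, 0)` layer sees exactly a 4 x 4 input and reduces it to 1 x 1.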

I want to change the model so that the last layer is:

```python
nn.Conv2d(ndf * 16, 1, 4, 1, 0, bias=False)
```

so that the last layer takes 1024 input channels (i.e. the deepest feature map has 1024 filters) instead of 512, and similarly for the generator. However, when I implement this, changing only that and keeping the kernel size, number of channels (`nc`), etc. constant, I get:

```
RuntimeError: Calculated input size: (2 x 2). Kernel size: (4 x 4). Kernel size can't greater than actual input size
```

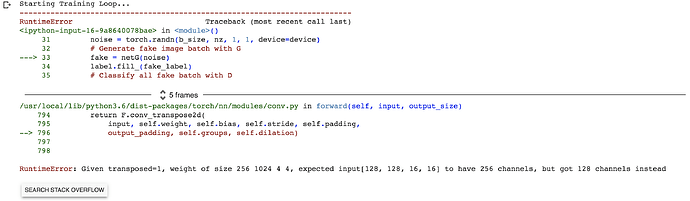
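The 2 x 2 in the error can be reproduced with the same output-size arithmetic. This sketch assumes the change adds a block `Conv2d(ndf * 8, ndf * 16, 4, 2, 1)` before the final layer, with the same settings as the other downsampling blocks (an assumption, but one consistent with the 2 x 2 in the message). The two variants at the end are hypothetical arrangements that only check that the shapes work out; they say nothing about training behavior. The helpers `conv2d_out` and `trace` are illustrative names.

```python
def conv2d_out(size, kernel, stride, padding):
    """Spatial output size of nn.Conv2d (dilation=1); raises if the kernel cannot fit."""
    padded = size + 2 * padding
    if padded < kernel:
        # Rough analogue of PyTorch's "Kernel size can't greater than actual input size" check
        raise ValueError(f"padded input {padded}x{padded} is smaller than kernel {kernel}x{kernel}")
    return (padded - kernel) // stride + 1


def trace(size, layers):
    """Return the spatial size before and after each (kernel, stride, padding) layer."""
    sizes = [size]
    for kernel, stride, padding in layers:
        size = conv2d_out(size, kernel, stride, padding)
        sizes.append(size)
    return sizes


down = [(4, 2, 1)] * 4   # the four stride-2 blocks from the original discriminator
extra = (4, 2, 1)        # ASSUMED extra ndf*8 -> ndf*16 block, same settings as the others
final = (4, 1, 0)        # final ndf*16 -> 1 layer

print(trace(64, down + [extra]))  # [64, 32, 16, 8, 4, 2]
try:
    trace(64, down + [extra, final])
except ValueError as err:
    print("fails:", err)  # fails: padded input 2x2 is smaller than kernel 4x4

# Hypothetical shape-only variants that end at 1 x 1 again:
print(trace(64, down + [(3, 1, 1), final]))  # stride-1 extra block: [64, 32, 16, 8, 4, 4, 1]
print(trace(64, down + [extra, (2, 1, 0)]))  # 2x2 final kernel:     [64, 32, 16, 8, 4, 2, 1]
```

A 64x64 input only supports four halvings before the map reaches 4 x 4, so a fifth stride-2 block leaves too little spatial extent for a 4 x 4 kernel with no padding.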

How do I construct a discriminator whose last hidden convolutional layer has 1024 output filters (and the equivalent transposed convolution layer in the generator with 1024 input channels)? I imagine I should be able to do this without changing the inputs (a batch of 64 images, each 64x64 pixels).
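On the generator side the mirror-image issue shows up in `ConvTranspose2d`, whose output size (dilation 1) per the PyTorch docs is (in − 1)·stride − 2·padding + kernel + output_padding. This sketch assumes the generator follows the PyTorch DCGAN example: a `(4, 1, 0)` layer from the 1 x 1 latent vector to 4 x 4, then four `(4, 2, 1)` layers up to 64 x 64. The helper names and the stride-1 variant are hypothetical.

```python
def conv_transpose2d_out(size, kernel, stride, padding, output_padding=0):
    """Spatial output size of nn.ConvTranspose2d with dilation=1 (formula from the PyTorch docs)."""
    return (size - 1) * stride - 2 * padding + kernel + output_padding


def trace_generator(size, layers):
    """Return the spatial size before and after each (kernel, stride, padding) layer."""
    sizes = [size]
    for kernel, stride, padding in layers:
        size = conv_transpose2d_out(size, kernel, stride, padding)
        sizes.append(size)
    return sizes


project = (4, 1, 0)     # ASSUMED first layer: latent (1 x 1) -> 4 x 4
up = [(4, 2, 1)] * 4    # ASSUMED four stride-2 upsampling layers

print(trace_generator(1, [project] + up))             # [1, 4, 8, 16, 32, 64]
# A fifth stride-2 layer for an extra ngf*16 stage doubles the output to 128 x 128
print(trace_generator(1, [project, (4, 2, 1)] + up))  # [1, 4, 8, 16, 32, 64, 128]
# Hypothetical: a stride-1, 3x3, padding-1 layer keeps 4 x 4, so the output stays 64 x 64
print(trace_generator(1, [project, (3, 1, 1)] + up))  # [1, 4, 4, 8, 16, 32, 64]
```

With the extra stride-2 layer the generated images would be 128x128 and no longer match the 64x64 discriminator input, which is why the channel count and the spatial schedule have to be changed together.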

Any help would be greatly appreciated.