Greetings. I have a question about GPU memory usage in PyTorch.

When I run a GAT model using PyTorch and torch_geometric, I encounter a CUDA out-of-memory error every time.

I am using a large knowledge graph (105.43 MB) and 9 types of datasets for training, validation, and testing, but running out of memory still seems strange to me.

Is it normal for memory usage to increase with each epoch?

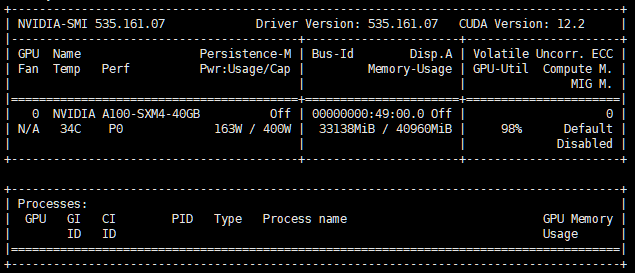

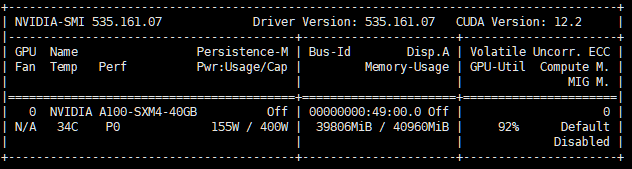

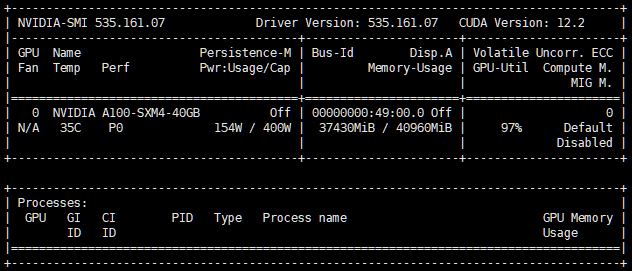

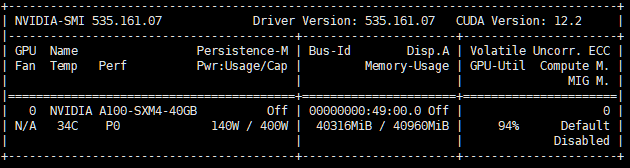

Please check the memory usage in the screenshot.

After the first epoch

After the second epoch

After the third epoch

After the fifth epoch

If this is a memory leak, which part of my code should I look at?

Thank you for reading the question.