Hi there, I implemented a simple linear regression in PyTorch and I’m getting poor results. I’m wondering whether these results are normal or whether I’m making some silly mistake.

I tried different optimizers and learning rates, but I always get poor results.

Here is my code:

```python
import torch
import torch.nn as nn
import numpy as np
import matplotlib.pyplot as plt
from torch.autograd import Variable


class LinearRegressionPytorch(nn.Module):
    def __init__(self, input_dim=1, output_dim=1):
        super(LinearRegressionPytorch, self).__init__()
        self.linear = nn.Linear(input_dim, output_dim)

    def forward(self, x):
        x = x.view(x.size(0), -1)
        y = self.linear(x)
        return y


input_dim = 1
output_dim = 1
if torch.cuda.is_available():
    model = LinearRegressionPytorch(input_dim, output_dim).cuda()
else:
    model = LinearRegressionPytorch(input_dim, output_dim)

criterium = nn.MSELoss()
l_rate = 0.00001
optimizer = torch.optim.SGD(model.parameters(), lr=l_rate)
#optimizer = torch.optim.Adam(model.parameters(), lr=l_rate)
epochs = 100

#create data
x = np.random.uniform(0, 10, size=100)  #np.linspace(0,10,100);
y = 6*x + 5
dy = 1 + 5*np.random.random(y.shape)
noise = np.random.normal(0, dy)
y_noise = y + noise

plt.scatter(x, y_noise)
plt.scatter(x, y, c='red', alpha=0.25)
plt.legend(('Noisy data', 'clean data'))

#pass it to pytorch
x_data = torch.from_numpy(x).float()
y_data = torch.from_numpy(y_noise).float()
if torch.cuda.is_available():
    inputs = Variable(x_data).cuda()
    target = Variable(y_data).cuda()
else:
    inputs = Variable(x_data)
    target = Variable(y_data)

for epoch in range(epochs):
    #predict data
    pred_y = model(inputs)
    #compute loss
    loss = criterium(pred_y, target)
    #zero grad and optimization
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    #if epoch % 50 == 0:
    #    print(f'epoch = {epoch}, loss = {loss.item()}')

#evaluation
model.eval()  # evaluate mode
predict = model(inputs)
predict = predict.cpu()
predict = predict.data.numpy()

plt.scatter(x_data.numpy(), y_data.numpy())
plt.plot(x_data.numpy(), predict, 'r')
plt.legend(('Noisy data', 'Model prediction'))
plt.show()

#print params
for name, param in model.named_parameters():
    if param.requires_grad:
        print(name, param.data)
```

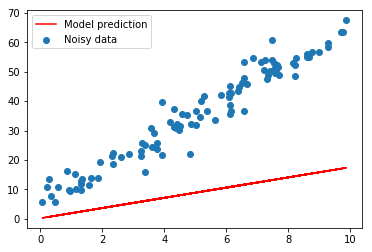

Here are the poor results visually:

linear.weight tensor([[1.7374]], device='cuda:0')

linear.bias tensor([0.1815], device='cuda:0')

The results should be weight = 6 , bias = 5
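For reference, here is roughly what I would expect a working fit to recover. This is only a sanity-check sketch, separate from the training code above: it generates data with the same recipe and fits it in closed form with `np.polyfit`. The fixed seed and the use of `np.polyfit` are my own assumptions for illustration, so the exact numbers will differ from run to run with unseeded data.

```python
import numpy as np

# Fixed seed is an assumption for reproducibility; the data in the post is unseeded.
rng = np.random.default_rng(0)

# Same data recipe as in the post: y = 6x + 5 plus Gaussian noise
# whose standard deviation varies per point between 1 and 6.
x = rng.uniform(0, 10, size=100)
y = 6 * x + 5
dy = 1 + 5 * rng.random(y.shape)
y_noise = y + rng.normal(0, dy)

# A degree-1 least-squares fit returns the coefficients as [slope, intercept].
slope, intercept = np.polyfit(x, y_noise, 1)
print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
```

With this much noise the intercept in particular will not be exactly 5, but both values should land reasonably close to the true ones, which is far from what the trained model reports.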