I wrote a simple C++ program to run my PyTorch model with libtorch 1.7.1. I have CUDA 10.2 installed, so I downloaded the prebuilt CUDA 10.2 version of libtorch from this link. The program works fine when built with CMake: it can load my model on the GPU, and it prints "Yes" when I run this simple test:

#include <torch/torch.h>

#include <torch/nn.h>

#include <torch/script.h>

#include <iostream>

int main(int argc, const char* argv[]) {

std::cout << "Is CUDA available? " << (torch::cuda::is_available() ? "Yes" : "No") << std::endl;

torch::Tensor a = torch::ones({ 2, 2 }, torch::requires_grad());

torch::Tensor b = torch::randn({ 2, 2 });

a = a.to(torch::kCUDA);

b = b.to(torch::kCUDA);

return 0;

}

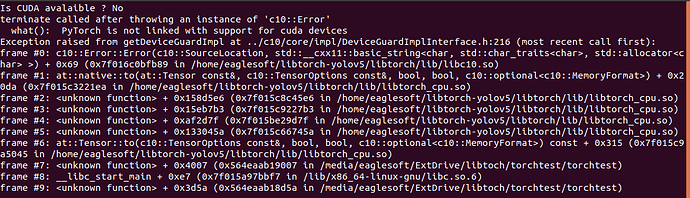

Now I would like to use the model in my Qt application. When I try it there, it gives this result:

This is my project's qmake (.pro) file:

QT -= gui

CONFIG += c++14 console

CONFIG -= app_bundle

DEFINES += QT_DEPRECATED_WARNINGS

SOURCES += main.cpp

qnx: target.path = /tmp/$${TARGET}/bin

else: unix:!android: target.path = /opt/$${TARGET}/bin

!isEmpty(target.path): INSTALLS += target

QMAKE_CXXFLAGS += -DGLIBCXX_USE_CXX11_ABI=0

CONFIG += no_keywords

QMAKE_CXXFLAGS += -Wno-unused-parameter

QMAKE_LFLAGS += -INCLUDE:?warp_size@cuda@at@@YAHXZ

INCLUDEPATH += /home/eaglesoft/libtorch/include/torch/csrc/api/include

INCLUDEPATH += /home/eaglesoft/libtorch/include

DEPENDPATH += /home/eaglesoft/libtorch/include/torch/csrc/api/include

DEPENDPATH += /home/eaglesoft/libtorch/include

LIBS += -L/home/eaglesoft/libtorch/lib -lc10 \
    -lc10_cuda \
    -lcaffe2_module_test_dynamic \
    -lcaffe2_observers \
    -ltorch_cpu \
    -ltorch \
    -lc10d_cuda_test \
    -lcaffe2_detectron_ops_gpu \
    -lcaffe2_nvrtc \
    -lfmt \
    -lnvrtc-builtins \
    -lshm \
    -ltensorpipe \
    -ltorch_cuda

CUDA_DIR = /usr/local/cuda-10.2

INCLUDEPATH += $$CUDA_DIR/include

QMAKE_LIBDIR += $$CUDA_DIR/lib64

LIBS += -L/usr/local/cuda-10.2/lib64 -lcuda -lcudart -lcurand

LIBS += -L/usr/lib/x86_64-linux-gnu -lcublas

LIBS += -lstdc++fs