I am new to PyTorch and am training a model on ImageNet. Data loading on my server is extremely slow, and it seems many others have run into this too. I don’t know what causes it or how to fix it.

Server:

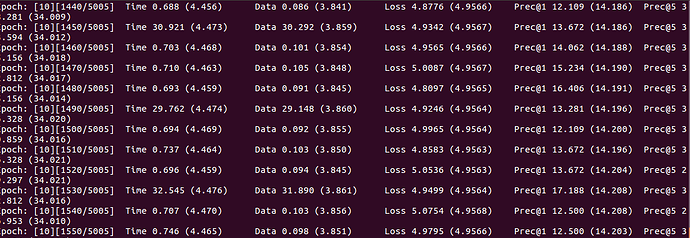

Local:

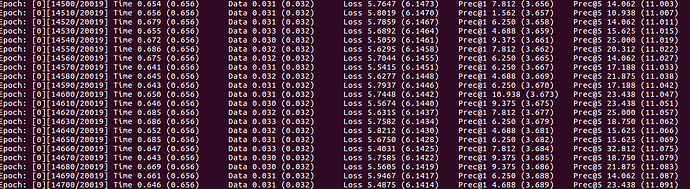

The local machine runs on 1 GPU and the server runs on 4 GPUs. The batch sizes differ because the local GPU does not have enough memory for the server's batch size.

As the screenshots show, the server's average data-loading time is around 4 seconds per batch, while locally it is only 0.03 seconds. Sometimes the server takes 30 seconds to load a batch. I suspect this is because the data has to be loaded and then split across the GPUs by DataParallel, but why would that be so slow? At this rate, data loading alone would take 22.5 days over 100 epochs, while the training itself takes only 3.5 days.
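The time estimate can be checked with a little arithmetic. This sketch reuses only the figures quoted in the post (about 4 s of data time per iteration, 22.5 days of loading and 3.5 days of training over 100 epochs). The number of iterations per epoch is not stated in the post; it is derived from those figures, so treat it as approximate.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def days_for(seconds_per_iter: float, iters_per_epoch: float, epochs: int) -> float:
    """Total wall-clock days for a per-iteration cost repeated over a whole run."""
    return seconds_per_iter * iters_per_epoch * epochs / SECONDS_PER_DAY


def iters_per_epoch_from(days: float, seconds_per_iter: float, epochs: int) -> float:
    """Invert days_for: the iterations per epoch implied by a total in days."""
    return days * SECONDS_PER_DAY / (seconds_per_iter * epochs)


def seconds_per_iter_from(days: float, iters_per_epoch: float, epochs: int) -> float:
    """Invert days_for: the average per-iteration cost implied by a total in days."""
    return days * SECONDS_PER_DAY / (iters_per_epoch * epochs)


# Figures quoted in the post: ~4 s average data time per iteration,
# 22.5 days of data loading and 3.5 days of training over 100 epochs.
EPOCHS = 100
iters = iters_per_epoch_from(22.5, 4.0, EPOCHS)
train_s = seconds_per_iter_from(3.5, iters, EPOCHS)

print(f"implied iterations per epoch: {iters:.0f}")                # 4860
print(f"implied compute time per iteration: {train_s:.2f} s")     # 0.62
print(f"data-to-training time ratio: {22.5 / 3.5:.1f}x")          # 6.4
```

If both figures are per-iteration averages, each step spends about 4 s waiting for data and only about 0.6 s computing, which would leave the GPUs idle most of the time.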

I modified the code from jiecaoyu/XNOR-Net-PyTorch on GitHub (the PyTorch implementation of XNOR-Net, ImageNet version). I did not change the data-loading code.
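Since the data-loading code is unchanged, one way to see where the time goes is to time the wait for each batch separately from the training step. This is a generic helper, not code from XNOR-Net-PyTorch. It assumes the training loop measures data time the way the official PyTorch ImageNet example does, and `slow_batches` is a hypothetical stand-in for a real `DataLoader` so the snippet runs without a GPU or dataset.

```python
import time
from typing import Callable, Iterable, Iterator, List, Tuple, TypeVar

T = TypeVar("T")


def time_loader(loader: Iterable[T], step: Callable[[T], None],
                max_iters: int) -> Tuple[float, float]:
    """Return (avg seconds waiting for the next batch, avg seconds in step).

    The data clock runs from the end of one step until the loader yields the
    next batch, so anything done inside `step` (for example a DataParallel
    forward that scatters the batch across GPUs) is counted as step time.
    If `step` launches CUDA work, end it with something that synchronizes
    (such as loss.item()), otherwise queued GPU work may be misattributed.
    """
    data_total = 0.0
    step_total = 0.0
    n = 0
    end = time.perf_counter()
    for batch in loader:
        got_batch = time.perf_counter()
        data_total += got_batch - end      # time spent waiting on the loader
        step(batch)
        end = time.perf_counter()
        step_total += end - got_batch      # time spent in the training step
        n += 1
        if n >= max_iters:
            break
    if n == 0:
        return 0.0, 0.0
    return data_total / n, step_total / n


def slow_batches(n: int, delay: float) -> Iterator[List[int]]:
    """Hypothetical stand-in for a DataLoader: each batch takes `delay` s."""
    for i in range(n):
        time.sleep(delay)
        yield list(range(i, i + 4))


data_s, step_s = time_loader(slow_batches(5, 0.05),
                             lambda batch: time.sleep(0.01), max_iters=5)
print(f"avg data time {data_s:.3f} s, avg step time {step_s:.3f} s")
```

With a real loader this would be called as `time_loader(train_loader, train_step, max_iters=50)`, where `train_step` is a hypothetical function wrapping the forward and backward pass. In a loop shaped like this, DataParallel's split happens inside the step, so a large data time points at the loader itself rather than at the multi-GPU split.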

Has anyone had a similar issue and fixed it? Any help would be appreciated.