Hi, I am currently using AlbuNet from GitHub - ternaus/robot-surgery-segmentation: Wining solution and its improvement for MICCAI 2017 Robotic Instrument Segmentation Sub-Challenge, with a pretrained ResNet as the encoder, to train on a custom dataset. Regardless of how many epochs I train for (I tried 1-4), the predicted log_softmax value of class 0 (background) is always 1, which shouldn't be the case since that would mean its probability is 1. I have 4 classes including the background. The training code uses NLLLoss with log softmax.

Could you check the outputs at the beginning of the training?

Are you seeing random log probabilities, which then converge towards class0?

If so, are you dealing with an imbalanced dataset? That could explain the overfitting to the majority class.
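One quick way to check for imbalance is to count the pixels per class over the target masks. This is a minimal sketch, assuming the masks are integer tensors holding class indices for 4 classes; the `masks` tensor is made-up data for illustration and not taken from the dataset in question:

```python
import torch

num_classes = 4  # background + 3 object classes, as in the question

# Hypothetical batch of target masks, shape (N, H, W); values are class indices.
masks = torch.zeros(2, 8, 8, dtype=torch.long)
masks[0, 2:4, 2:4] = 1   # small object of class 1
masks[1, 5:7, 1:3] = 2   # small object of class 2
masks[1, 0, 0] = 3       # single pixel of class 3

# Count pixels per class and convert the counts to fractions.
counts = torch.bincount(masks.flatten(), minlength=num_classes)
fractions = counts.float() / counts.sum()
print(counts)
print(fractions)
```

If the background fraction is close to 1, a model that predicts class 0 everywhere already gets a low loss, which would match the behavior described above.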

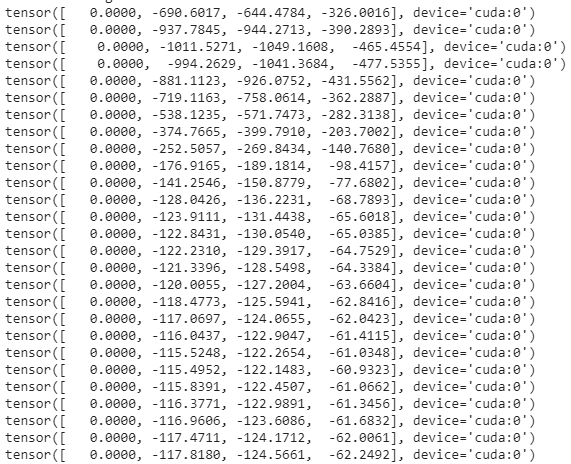

Oh, it seems you are right, since it converges quickly to 0 and hovers around there.

However, since I am doing semantic segmentation, I am likely to have much more background than objects, as most of my objects are small to medium sized. How would I be able to prevent it from converging towards class 0 then?

Also, another thing: since I can recover the probabilities by just doing torch.exp(log_probs), wouldn't that mean that the probability for class 0 is 1, and hence the others should be 0, or -inf in log probs? Or is it because the value is rounded up, and that's why the others can be large negative values?

Btw, I appreciate your help here and around the forums; it has helped with a lot of the problems I have faced when learning PyTorch.

For a segmentation use case, focal loss or a pixel-wise weighting of the minority classes might help.
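Both options can be sketched on top of the existing log_softmax output. The snippet below is only an illustration under assumptions: `focal_nll_loss` is a hypothetical helper (not part of PyTorch or the repository), the class weights are arbitrary example values, and the logits and targets are random stand-ins for real model outputs and masks:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

def focal_nll_loss(log_probs, target, gamma=2.0, weight=None):
    """Hypothetical focal loss on log-probabilities.

    log_probs: (N, C, H, W) output of log_softmax, target: (N, H, W) class indices.
    """
    # Log-probability and probability of the true class at each pixel.
    log_pt = log_probs.gather(1, target.unsqueeze(1)).squeeze(1)
    pt = log_pt.exp()
    # Down-weight pixels that are already classified with high confidence.
    loss = -((1.0 - pt) ** gamma) * log_pt
    if weight is not None:
        # Optional per-class weighting; note this uses a plain mean below,
        # not the weight-normalized mean that nn.NLLLoss applies.
        loss = loss * weight[target]
    return loss.mean()

torch.manual_seed(0)
logits = torch.randn(2, 4, 8, 8)          # stand-in for the model output
target = torch.randint(0, 4, (2, 8, 8))   # stand-in for the target masks
log_probs = F.log_softmax(logits, dim=1)

# Option 1: class weighting in NLLLoss (example values, background down-weighted).
class_weights = torch.tensor([0.1, 1.0, 1.0, 1.0])
weighted = nn.NLLLoss(weight=class_weights)(log_probs, target)

# Option 2: focal loss; gamma=0 reduces it to the plain NLL loss.
focal = focal_nll_loss(log_probs, target, gamma=2.0)
plain = F.nll_loss(log_probs, target)
print(weighted.item(), focal.item(), plain.item())
```

In practice the class weights are often derived from inverse class frequencies, but the right values and the right `gamma` depend on the dataset and usually need some experimentation.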

The large negative values are approximately zero once converted back to probabilities, and I would assume an Inf somewhere in the computation might have some bad side effects.
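To illustrate with made-up numbers (the logits below are hypothetical, chosen so that class 0 dominates), the log probability of class 0 is displayed as roughly 0 while the other classes keep finite, large negative values:

```python
import torch
import torch.nn.functional as F

# Hypothetical logits for a single pixel with 4 classes; class 0 dominates.
logits = torch.tensor([[20.0, 0.0, -5.0, -10.0]])
log_probs = F.log_softmax(logits, dim=1)
probs = log_probs.exp()

print(log_probs)  # class 0 is (almost) 0, the others are large negative numbers
print(probs)      # class 0 is (almost) 1, the others are tiny but non-zero
```

A log probability of -inf would require a probability of exactly 0, which softmax does not produce for finite logits unless the value underflows.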

Thanks, I’m glad my posts are helpful.

Oh okay I’ll take a look, thanks!

Yeah, that’s true, that makes sense haha.