Hey,

I’m trying to use your LRP implementation on pretrained models: resnet34 (ImageNet) and resnet32 (CIFAR10/100).

I had to set the ReLU layers to `inplace=False` to get it to run. It now executes, but the output is weird and inconsistent.
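For anyone reproducing this, the ReLU change can be sketched roughly as follows. This is a minimal sketch assuming a standard PyTorch model. The helper name `disable_inplace_relu` and the tiny stand-in model are hypothetical and only for illustration; they are not part of the LRP implementation.

```python
from torch import nn


def disable_inplace_relu(model: nn.Module) -> int:
    """Set inplace=False on every nn.ReLU in the model.

    Returns the number of ReLU modules that were switched.
    """
    changed = 0
    for module in model.modules():
        if isinstance(module, nn.ReLU) and module.inplace:
            module.inplace = False
            changed += 1
    return changed


# Tiny stand-in model (hypothetical); a pretrained ResNet would be
# walked the same way via model.modules().
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(inplace=True),
    nn.Conv2d(8, 8, kernel_size=3, padding=1),
    nn.ReLU(inplace=True),
)

num_changed = disable_inplace_relu(model)
```

This only touches `nn.ReLU` modules; any functional in-place operations inside a model's `forward` would not be affected by it.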

For example, these are the images:

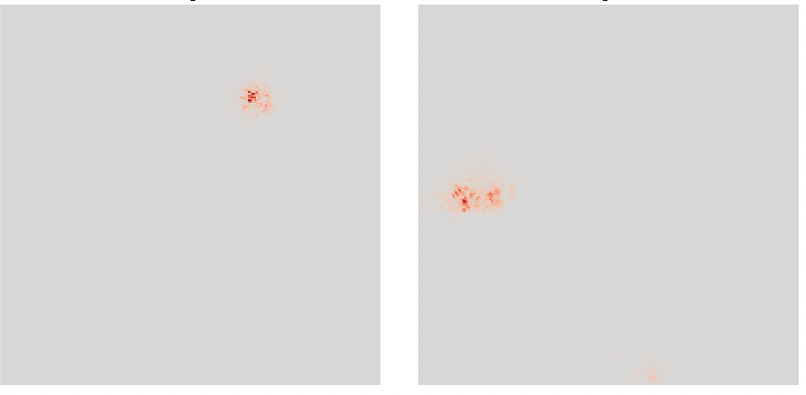

And these are the explanations (heat maps).

This doesn't make sense, since LRP output is normally readable and coherent with the images themselves.

Thanks!