Hi, thank you for answering my question! I am new to this field. I had tried to calculate the LSTM in Excel, but I hadn't thought of doing it manually in Python. So I tried the code you referred to, and it works on my training data when batch_first = False: the official LSTM and the manual LSTM produce the same output. However, when I set batch_first = True, the values no longer match, and I need batch_first = True because my dataset is a tensor of shape (Batch, Sequence, Input size). Which part of the manual LSTM needs to change so that it produces the same output as the official LSTM when batch_first = True? Here is the code snippet:

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

train_x = torch.tensor([[[0.14285755], [0], [0.04761982], [0.04761982], [0.04761982],

[0.04761982], [0.04761982], [0.09523869], [0.09523869], [0.09523869],

[0.09523869], [0.09523869], [0.04761982], [0.04761982], [0.04761982],

[0.04761982], [0.09523869], [0. ], [0. ], [0. ],

[0. ], [0.09523869], [0.09523869], [0.09523869], [0.09523869],

[0.09523869], [0.09523869], [0.09523869],[0.14285755], [0.14285755]]],

requires_grad=True)

seed = 23

torch.manual_seed(seed)

np.random.seed(seed)

pytorch_lstm = torch.nn.LSTM(1, 1, bidirectional=False, num_layers=1, batch_first=True)

weights = torch.randn(pytorch_lstm.weight_ih_l0.shape, dtype=torch.float)

pytorch_lstm.weight_ih_l0 = torch.nn.Parameter(weights)

# Set bias to Zero

pytorch_lstm.bias_ih_l0 = torch.nn.Parameter(torch.zeros(pytorch_lstm.bias_ih_l0.shape))

pytorch_lstm.weight_hh_l0 = torch.nn.Parameter(torch.ones(pytorch_lstm.weight_hh_l0.shape))

# Set bias to Zero

pytorch_lstm.bias_hh_l0 = torch.nn.Parameter(torch.zeros(pytorch_lstm.bias_hh_l0.shape))

pytorch_lstm_out = pytorch_lstm(train_x)

batchsize=1

# Manual Calculation

W_ii, W_if, W_ig, W_io = pytorch_lstm.weight_ih_l0.split(1, dim=0)

b_ii, b_if, b_ig, b_io = pytorch_lstm.bias_ih_l0.split(1, dim=0)

W_hi, W_hf, W_hg, W_ho = pytorch_lstm.weight_hh_l0.split(1, dim=0)

b_hi, b_hf, b_hg, b_ho = pytorch_lstm.bias_hh_l0.split(1, dim=0)

prev_h = torch.zeros((batchsize,1))

prev_c = torch.zeros((batchsize,1))

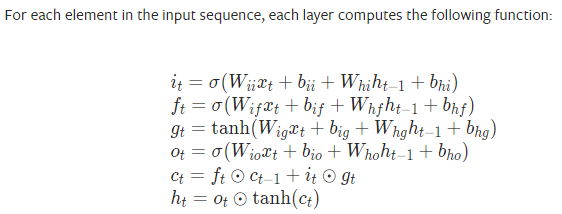

i_t = torch.sigmoid(F.linear(train_x, W_ii, b_ii) + F.linear(prev_h, W_hi, b_hi))

f_t = torch.sigmoid(F.linear(train_x, W_if, b_if) + F.linear(prev_h, W_hf, b_hf))

g_t = torch.tanh(F.linear(train_x, W_ig, b_ig) + F.linear(prev_h, W_hg, b_hg))

o_t = torch.sigmoid(F.linear(train_x, W_io, b_io) + F.linear(prev_h, W_ho, b_ho))

c_t = f_t * prev_c + i_t * g_t

h_t = o_t * torch.tanh(c_t)

print('nn.LSTM output {}, manual output {}'.format(pytorch_lstm_out[0], h_t))

print('nn.LSTM hidden {}, manual hidden {}'.format(pytorch_lstm_out[1][0], h_t))

print('nn.LSTM state {}, manual state {}'.format(pytorch_lstm_out[1][1], c_t))
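
For comparison, here is a minimal sketch of how the manual computation might be arranged for batch_first=True. It rests on one observation: with an input of shape (1, 30, 1), batch_first=False treats the data as a single time step with a batch of 30, so one pass through the gate equations is enough, whereas batch_first=True treats it as 30 time steps, so the gates have to be applied once per step while carrying h and c forward. The random toy input and the names `x`, `lstm`, `manual_out`, `h`, and `c` are assumptions made for illustration; they are not taken from the snippet above.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(23)

# Toy input shaped (batch, seq_len, input_size), as batch_first=True expects.
# (Assumed stand-in for the real training data.)
x = torch.rand(1, 30, 1)

lstm = nn.LSTM(input_size=1, hidden_size=1, num_layers=1, batch_first=True)
official_out, (official_h, official_c) = lstm(x)

hidden_size = lstm.hidden_size

# PyTorch stacks the gate parameters in the order: input, forget, cell, output.
W_ii, W_if, W_ig, W_io = lstm.weight_ih_l0.split(hidden_size, dim=0)
b_ii, b_if, b_ig, b_io = lstm.bias_ih_l0.split(hidden_size, dim=0)
W_hi, W_hf, W_hg, W_ho = lstm.weight_hh_l0.split(hidden_size, dim=0)
b_hi, b_hf, b_hg, b_ho = lstm.bias_hh_l0.split(hidden_size, dim=0)

# Initial hidden and cell state: (batch, hidden_size), all zeros.
h = torch.zeros(x.size(0), hidden_size)
c = torch.zeros(x.size(0), hidden_size)

outputs = []
# Walk along the sequence axis (dim 1 when batch_first=True).
for t in range(x.size(1)):
    x_t = x[:, t, :]  # (batch, input_size) slice for this time step
    i_t = torch.sigmoid(F.linear(x_t, W_ii, b_ii) + F.linear(h, W_hi, b_hi))
    f_t = torch.sigmoid(F.linear(x_t, W_if, b_if) + F.linear(h, W_hf, b_hf))
    g_t = torch.tanh(F.linear(x_t, W_ig, b_ig) + F.linear(h, W_hg, b_hg))
    o_t = torch.sigmoid(F.linear(x_t, W_io, b_io) + F.linear(h, W_ho, b_ho))
    c = f_t * c + i_t * g_t
    h = o_t * torch.tanh(c)
    outputs.append(h)

# Stack the per-step hidden states back into (batch, seq_len, hidden_size).
manual_out = torch.stack(outputs, dim=1)

print(torch.allclose(manual_out, official_out, atol=1e-5))
print(torch.allclose(h.unsqueeze(0), official_h, atol=1e-5))
print(torch.allclose(c.unsqueeze(0), official_c, atol=1e-5))
```

The final `h` and `c` are compared after `unsqueeze(0)` because nn.LSTM returns them with a leading (num_layers * num_directions) dimension, which batch_first does not affect.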