Hi everyone,

Can you help me, please?

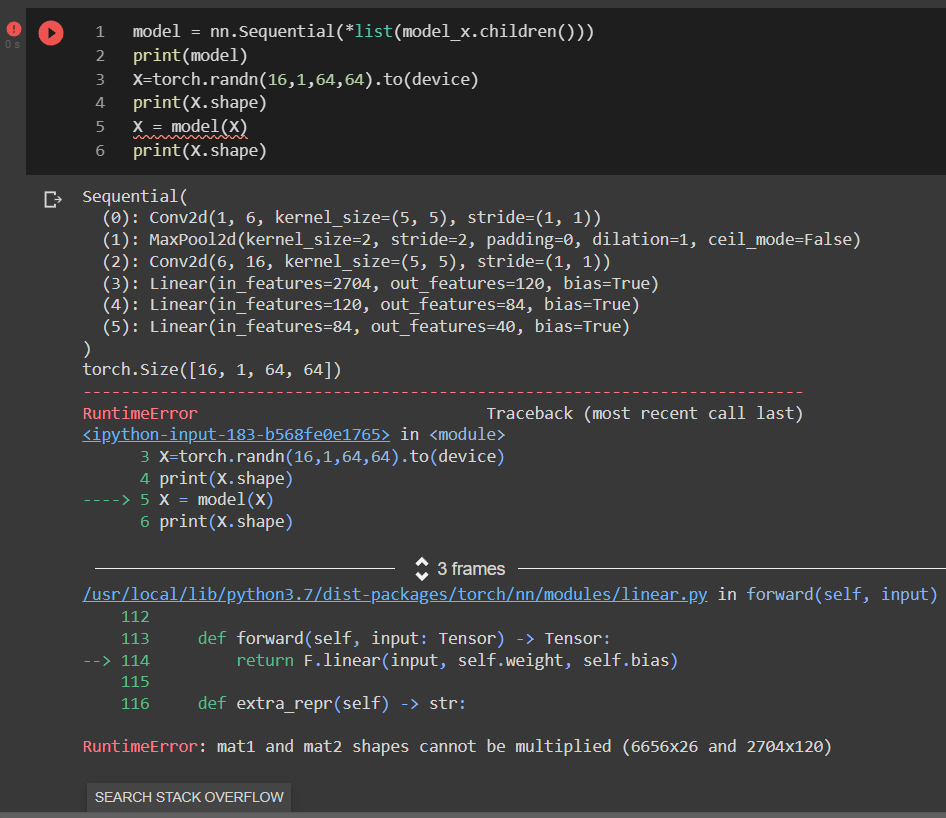

Your first linear layer expects an input feature size of 2704, while your input has a shape of 6656x26. You can change the input feature size of your model’s 3rd layer to 26, and it should work.

Hi Mohammed,

But why does it work when I use the “model_x” below?

The “model” above is the same as “model_x”.

Why does “model_x” (below) work while “model” (above) does not?

Thanks a lot.

Hi again,

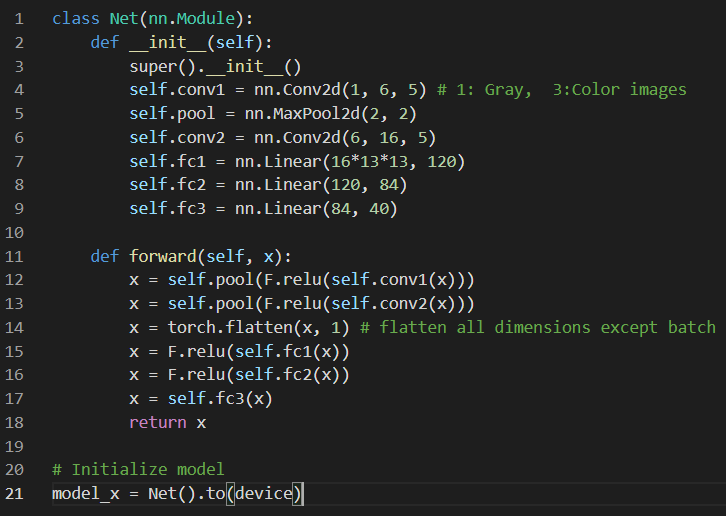

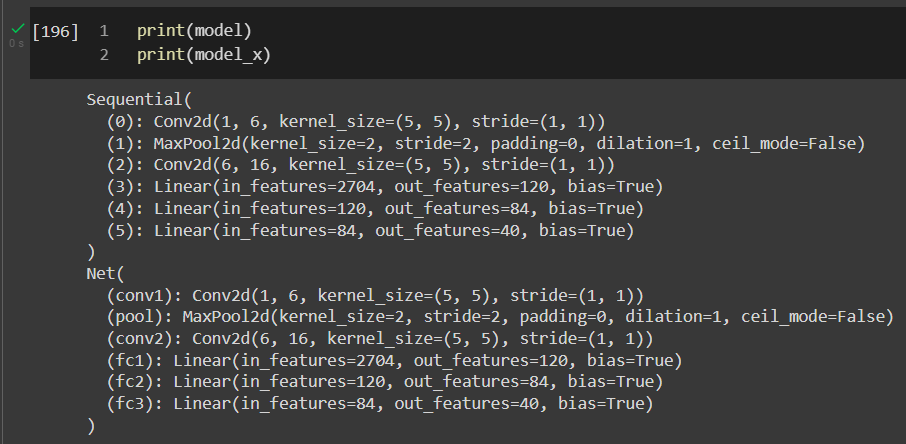

This is the architecture for the two models:

model: does not work

model_x: works for the same input “x” of shape [16, 1, 64, 64]

The difference lies in the forward calls of the two models. If you look at the forward call of model_x, it has a flatten step, which flattens the convolution output to a shape of (N, 2704), where N is your batch size and 2704 is the number of features coming out of the convolution layers. The forward call of model does not have the flatten step, because only the layers of model_x are copied when model is created, not its forward logic. So the output in that case is not flattened, and the linear layer receives a tensor whose last dimension is 26 instead of 2704, hence the matrix size mismatch. I hope this makes things clearer.
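To make this concrete, here is a minimal sketch. The `ModelX` class and its layer sizes are hypothetical (the real architecture is only in the screenshots); they are chosen just so that the flattened size comes out as 2704 for a [16, 1, 64, 64] input. It shows that copying the children drops the flatten step, and that putting an `nn.Flatten()` module back in is one possible fix.

```python
import torch
import torch.nn as nn


class ModelX(nn.Module):
    """Hypothetical stand-in for model_x; layer sizes are assumptions."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(1, 4, kernel_size=13)  # 64x64 -> 52x52
        self.pool = nn.MaxPool2d(2)                  # 52x52 -> 26x26
        self.fc = nn.Linear(4 * 26 * 26, 10)         # 2704 input features

    def forward(self, x):
        x = self.pool(self.conv(x))
        x = torch.flatten(x, 1)  # functional call: NOT a child module
        return self.fc(x)


model_x = ModelX()
x = torch.randn(16, 1, 64, 64)

# Copying only the child modules loses the flatten step from forward().
model = nn.Sequential(*model_x.children())
try:
    model(x)
    copy_failed = False
except RuntimeError:
    # fc receives a (16, 4, 26, 26) tensor instead of (16, 2704)
    copy_failed = True

# Re-inserting the flatten as a module, right before the linear layer.
layers = list(model_x.children())
model_fixed = nn.Sequential(*layers[:-1], nn.Flatten(), layers[-1])

out_x = model_x(x)
out_fixed = model_fixed(x)
```

Note that `model_fixed` shares its parameters with `model_x` here, so both produce the same output for the same input.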

Thank you, that makes sense, but:

1: How can I copy the layers from model_x to create a new model without issues?

2: I just want to delete some layers from model_x.

Thanks again.

This answer here discusses in detail how to create your own custom forward call using a pre-defined model.
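As a rough sketch of that idea (not taken from the linked answer): you can wrap the existing model in a new `nn.Module`, reuse its layers, and write the forward call yourself. The `Base` and `CustomForward` classes, the attribute names `features` and `classifier`, and all layer sizes below are assumptions for illustration only.

```python
import torch
import torch.nn as nn


class Base(nn.Module):
    """Hypothetical pre-defined model; names and sizes are assumptions."""

    def __init__(self):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(1, 4, kernel_size=13),  # 64x64 -> 52x52
            nn.MaxPool2d(2),                  # 52x52 -> 26x26
        )
        self.classifier = nn.Linear(4 * 26 * 26, 10)

    def forward(self, x):
        return self.classifier(torch.flatten(self.features(x), 1))


class CustomForward(nn.Module):
    """Reuses the layers of `base` but defines its own forward()."""

    def __init__(self, base):
        super().__init__()
        self.features = base.features      # same module objects, not copies
        self.classifier = base.classifier

    def forward(self, x):
        feats = torch.flatten(self.features(x), 1)  # explicit flatten
        return self.classifier(feats), feats        # also expose the features


base = Base()
custom = CustomForward(base)
x = torch.randn(16, 1, 64, 64)
logits, feats = custom(x)
```

Because the layers are reused rather than copied, training `custom` also updates the weights of `base`; use `copy.deepcopy(base)` first if you need them to be independent.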

This post here discusses removing intermediate layers from a model.
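Two common ways to do this are sketched below (again, not taken from the linked post). The `nn.Sequential` model here is a hypothetical example and its layer sizes are assumptions. Replacing a layer with `nn.Identity()` keeps the rest of the model untouched, while slicing the children rebuilds a shorter model.

```python
import torch
import torch.nn as nn

# Hypothetical model; the layers and sizes are assumptions for illustration.
model_x = nn.Sequential(
    nn.Conv2d(1, 4, kernel_size=13),  # 64x64 -> 52x52
    nn.ReLU(),
    nn.MaxPool2d(2),                  # 52x52 -> 26x26
    nn.Flatten(),                     # (N, 4, 26, 26) -> (N, 2704)
    nn.Linear(2704, 64),
    nn.ReLU(),
    nn.Dropout(0.5),
    nn.Linear(64, 10),
)

# Option 1: replace a layer with a no-op. This only works if the layer
# does not change the tensor shape that the next layer expects.
model_x[6] = nn.Identity()  # "removes" the dropout layer

# Option 2: rebuild the model without some layers, here the last Linear.
headless = nn.Sequential(*list(model_x.children())[:-1])

x = torch.randn(16, 1, 64, 64)
full_out = model_x(x)
head_out = headless(x)
```

Option 2 works cleanly here because every step, including the flatten, is a module inside the `nn.Sequential`; anything done only inside a custom forward call would be lost.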

Hope these are helpful.

I want to thank you very much!

Through the posts you linked in your last reply, I found this beautiful post:

Have a nice day.