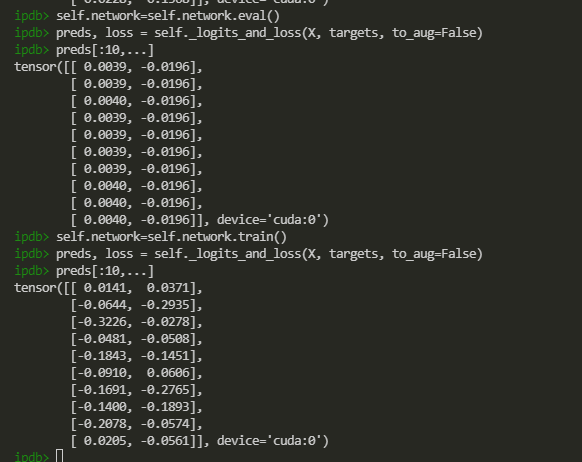

`self._logits_and_loss` simply calls `self.network(X)`.

We can see that, given the same input data X, the model in eval mode returns nearly identical outputs for every sample. If I switch to train mode, the outputs look reasonable.

My model is built on my own framework and is fairly complicated, so I cannot post the model code here.
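The comparison itself can be sketched roughly as follows. This is only a minimal sketch, assuming a standard PyTorch `nn.Module`: the small `network` is a hypothetical stand-in for `self.network`, and the helper `forward_in_mode` is a name made up for this illustration, not part of the real code.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-in for self.network; the real model is not shown here.
network = nn.Sequential(
    nn.Linear(8, 16),
    nn.BatchNorm1d(16),
    nn.ReLU(),
    nn.Linear(16, 3),
)

X = torch.randn(32, 8)


def forward_in_mode(model, x, train):
    """Run one forward pass in the requested mode, then restore the old mode."""
    was_training = model.training
    model.train(train)
    # no_grad() disables gradient tracking only; a train-mode pass still
    # updates the BN running statistics.
    with torch.no_grad():
        out = model(x)
    model.train(was_training)
    return out


out_train = forward_in_mode(network, X, train=True)
out_eval = forward_in_mode(network, X, train=False)

# Spread of the outputs across samples. A near-zero spread in eval mode
# would correspond to the "same result for all data" symptom.
print("train-mode std across batch:", out_train.std(dim=0))
print("eval-mode std across batch:", out_eval.std(dim=0))
print("max abs difference:", (out_train - out_eval).abs().max().item())
```

Comparing the per-column spread in both modes on the same batch makes the symptom measurable instead of relying on eyeballing the printed tensors.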

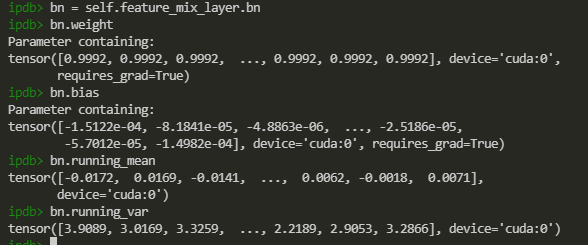

I suspected that something was wrong with the BN layer, so I printed the data of the last BN layer after training for several epochs.
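A check like this can be extended to every BN layer rather than only the last one. The following is a minimal sketch, assuming the model uses the standard `nn.BatchNorm1d/2d/3d` layers; the function name `bn_stats` and the toy `model` are hypothetical and only serve the illustration.

```python
import torch
import torch.nn as nn

BN_TYPES = (nn.BatchNorm1d, nn.BatchNorm2d, nn.BatchNorm3d)


def bn_stats(model):
    """Collect the running statistics of every batch-norm layer in the model."""
    rows = []
    for name, module in model.named_modules():
        # Skip non-BN modules and BN layers created with track_running_stats=False.
        if not isinstance(module, BN_TYPES) or module.running_mean is None:
            continue
        rows.append({
            "name": name,
            "momentum": module.momentum,
            "num_batches_tracked": int(module.num_batches_tracked),
            "running_mean_range": (
                module.running_mean.min().item(),
                module.running_mean.max().item(),
            ),
            "running_var_range": (
                module.running_var.min().item(),
                module.running_var.max().item(),
            ),
        })
    return rows


torch.manual_seed(0)

# Toy model standing in for the real network (assumption for this sketch).
model = nn.Sequential(
    nn.Conv2d(3, 4, kernel_size=3),
    nn.BatchNorm2d(4),
    nn.ReLU(),
)

# A few train-mode forward passes so the running statistics get updated.
model.train()
for _ in range(3):
    model(torch.randn(8, 3, 16, 16))

stats = bn_stats(model)
for row in stats:
    print(row)
```

Looking at `num_batches_tracked`, `momentum`, and the range of the running mean and variance for each layer helps spot a layer whose running statistics were never updated or differ strongly from the statistics of the actual batches.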

From the figure above, the last BN layer looks fine. What are the possible causes? Are there any other suggestions for debugging this problem? Thanks!