I have been training ResNet-18 and ResNet-50 models on my dataset (which contains about 1,500 samples).

When predicting output labels of dimension 73x4 and 1x199, the models tend to do well, but for output vectors of dimension 1x6, or for classification, I have noticed heavy overfitting.

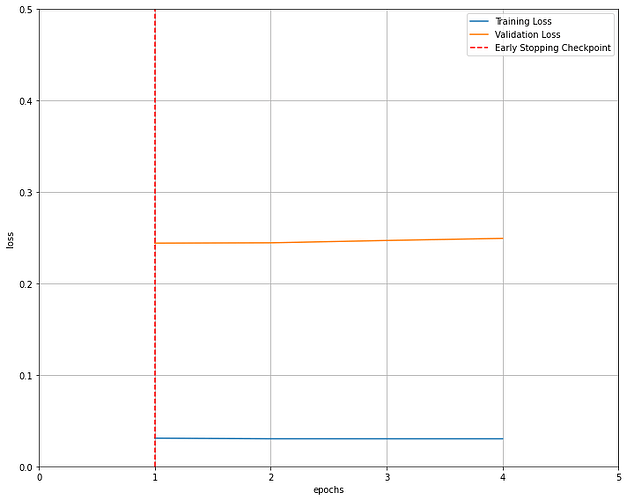

The training loss is 0.03 and the validation loss is 0.24.

What steps can I take to avoid overfitting?

Should I apply data augmentation to my images, or should I switch to a different pretrained model such as EfficientNet or VGG?

Note: I tried VGG16, but saw no change in performance.

The loss curve is attached above.

I assume you have trained longer than 4 epochs, because this loss curve does not look like overfitting to me. Was the curve identical for both ResNets? If yes, maybe the problem is somewhere else.

There are some good examples of curves in "How to use Learning Curves to Diagnose Machine Learning Model Performance".

If you are 100% certain it's not a different issue, I would try more augmentation. It could also be an issue with how your train/val/test splits are selected. Maybe the test split is unrepresentative compared to the train/val splits.
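One way to rule out an unrepresentative split is to split per class, so every subset keeps the same class proportions. Below is a minimal sketch in plain Python; the function name `stratified_split`, the `val_fraction` parameter, and the label list are all made up for illustration and are not taken from the poster's code.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, val_fraction=0.2, seed=0):
    """Return (train_idx, val_idx) so that each class is split in the same proportion."""
    rng = random.Random(seed)

    # Group sample indices by their class label.
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train_idx, val_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_val = round(len(indices) * val_fraction)
        val_idx.extend(indices[:n_val])
        train_idx.extend(indices[n_val:])
    return sorted(train_idx), sorted(val_idx)


# Made-up labels for illustration: 60 samples of class "a", 40 of class "b".
labels = ["a"] * 60 + ["b"] * 40
train_idx, val_idx = stratified_split(labels, val_fraction=0.2)

train_counts = Counter(labels[i] for i in train_idx)
val_counts = Counter(labels[i] for i in val_idx)
print("train:", dict(train_counts))
print("val:  ", dict(val_counts))
```

If the class counts in your own splits differ a lot from what a split like this would give, the split itself is worth a closer look before changing the model.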

Yes, for both ResNet-18 and ResNet-50 the curve was the same.

I looked into my training data, and apparently there is a class imbalance, with 600 samples belonging to class 1 and 400 belonging to class 2. Does that affect the learning process? Also, I am not sure why the training curve is coming out flat.
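For reference, a common way to compensate for imbalance is to weight each class inversely to its frequency. The sketch below computes such weights for the 600/400 counts quoted above; the helper name `inverse_frequency_weights` is hypothetical, and the "balanced" formula `n_total / (n_classes * count)` is one convention among several.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_total / (n_classes * class_count)."""
    counts = Counter(labels)
    n_total = len(labels)
    n_classes = len(counts)
    return {cls: n_total / (n_classes * n) for cls, n in counts.items()}


# Class counts from the post: 600 samples of class 1, 400 of class 2.
labels = [1] * 600 + [2] * 400
weights = inverse_frequency_weights(labels)
for cls in sorted(weights):
    print(f"class {cls}: weight {weights[cls]:.4f}")
```

Assuming the training code is in PyTorch, weights like these could be passed as a tensor to the `weight` argument of `torch.nn.CrossEntropyLoss`. A 600/400 split is a fairly mild imbalance, so this alone may not explain a large gap between training and validation loss.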

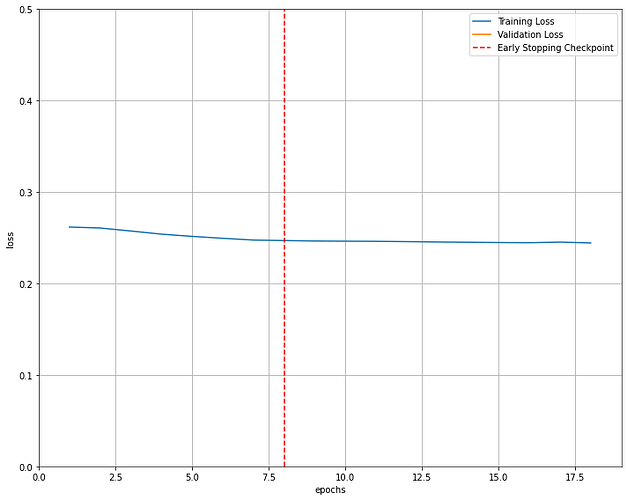

Here is another plot, from training a different model with dropout, weight decay, and early stopping.

The final result after training for 17 epochs:

[17/50] train_loss: 0.24435 valid_loss: 1.98771
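Early stopping only helps if the weights from the best validation epoch are the ones that get kept, not the weights from the epoch where training halted. Here is a minimal sketch of that bookkeeping in plain Python; the `EarlyStopping` class, its `patience` and `min_delta` parameters, and the loss values are made up for illustration and are not the poster's implementation.

```python
class EarlyStopping:
    """Track the best validation loss and signal when it stops improving."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # minimum decrease that counts as improvement
        self.best_loss = float("inf")
        self.best_epoch = None
        self.bad_epochs = 0

    def step(self, epoch, valid_loss):
        """Record one epoch; return True when training should stop."""
        if valid_loss < self.best_loss - self.min_delta:
            self.best_loss = valid_loss
            self.best_epoch = epoch   # this is where a checkpoint would be saved
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses that improve and then diverge.
valid_losses = [0.90, 0.70, 0.60, 0.65, 0.80, 1.10, 1.50]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(valid_losses, start=1):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break

print(f"stopped at epoch {stopped_at}, best epoch {stopper.best_epoch}, "
      f"best valid_loss {stopper.best_loss:.2f}")
```

In a real training loop, the model checkpoint would be saved whenever `best_epoch` is updated and reloaded after stopping, so the reported validation loss is the best one rather than the last one.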

Furthermore, it seems that my dataset doesn’t have much variation in it.