Hi I’m confused, I’m using pytorch for my project but my Model is not learning well. the loss start with a very high value (40000), then after the first 5-10 epochs the loss decrease rapidly to 6000-7000 and then it stuck there, no matter what I make. I tried even to change to skorch instead of pytorch so that I can use cross validation functionality but that also didn’t help. I tried different optimizers and added layers and neurons to the network but that didn’t help, it stuck at 6000 which is a very high loss value. I’m doing regression here, I have 10 features and I’m trying to predict one continuous value. that should be easy to do that’s why it is confusing me more.

here is my network:

class BearingNetwork(nn.Module):

def init(self, n_features=X.shape[1], n_out=1):

super().init()

self.model = nn.Sequential(nn.Linear(n_features, 512), nn.BatchNorm1d(512), nn.LeakyReLU(), nn.Linear(512, 64), nn.BatchNorm1d(64), nn.LeakyReLU(), nn.Linear(64, n_out), )

and here are my settings:

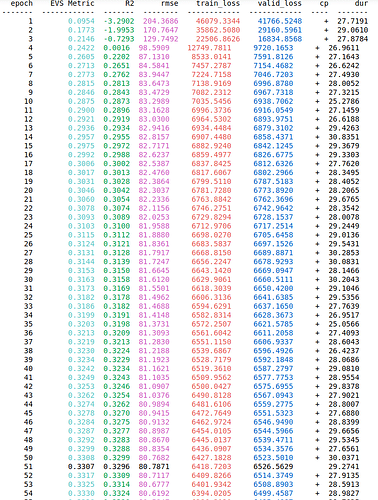

R2 = EpochScoring(r2_score, lower_is_better=False, name=‘R2’)

explained_var_score = EpochScoring(EVS, lower_is_better=False, name=‘EVS Metric’)

custom_score = make_scorer(RMSE) # making root mean squared error as a custom metric

rmse = EpochScoring(custom_score, lower_is_better=True, name=‘rmse’)bearing_nn = NeuralNetRegressor(

BearingNetwork, criterion=nn.MSELoss, optimizer=optim.Adam, optimizer__amsgrad=True, # I also tried Adam without the amsgrad max_epochs=5000, batch_size=128, lr=0.001, train_split=skorch.dataset.CVSplit(10), callbacks=[R2, explained_var_score, rmse, Checkpoint(), EarlyStopping(patience=100)], device=device)

I also standardize the Input values. my Input have the shape:

torch.Size([39006, 10])

and shape of output is:

torch.Size([39006, 1])

I’m using 128 as my Batch_size but I also tried other values like 32, 64, 512 and even 1024. Although normalizing output is not necessary but I also tried that and It didn’t work when I predict values, the loss is high. Please someone help me on this, I would appreciate every helpful advice