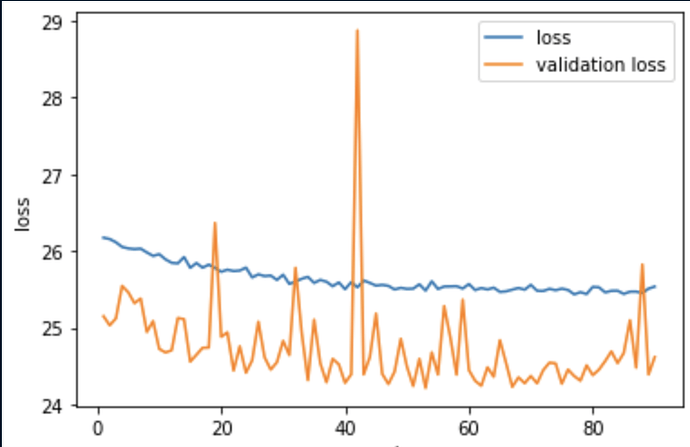

I am training a small network on a tabular dataset, and the validation loss I record each epoch fluctuates from one epoch to the next. Is this normal? My code is below.

    import torch
    from torch import nn
    from torch.nn import Sequential
    from torch.optim import SGD

    # device, X_train, train_dl and val_dl are assumed to be defined earlier (not shown)
    batch_size = 140
    epochs = 90
    learning_rate = 0.001

    n_inputs = X_train.shape[1]  # number of input features

    NN = Sequential(
        nn.Linear(n_inputs, 10),
        nn.ReLU(),
        nn.Linear(10, 5),
        nn.ReLU(),
        nn.Linear(5, 1),
        # nn.Sigmoid()
    )
    NN.to(device)  # move the model to the GPU if one is available

    criterion = torch.nn.MSELoss()
    optimizer = SGD(NN.parameters(), lr=learning_rate)

    train_loss = []
    val_loss = []
    val_accuracy = []
    c = 0

    for epoch in range(epochs):
        c = c + 1
        train_loss_batch = []
        val_loss_batch = []

        for i, (inputs, targets) in enumerate(train_dl):  # iterate over mini-batches
            inputs, targets = inputs.to(device), targets.to(device)  # move the data to the GPU if available
            optimizer.zero_grad()  # clear the gradients
            yhat = NN(inputs.float())  # compute the model output, shape (N, 1)
            # targets must have the same shape as yhat, otherwise MSELoss broadcasts them
            loss = criterion(yhat, targets.float())  # calculate the loss
            train_loss_batch.append(loss.item())  # store the batch loss as a Python float
            loss.backward()  # backpropagate to compute gradients (credit assignment)
            optimizer.step()  # update the model weights
        # mean over the number of batches (i is the last index, not the count)
        train_loss.append(sum(train_loss_batch) / len(train_loss_batch))

        #### EVALUATION ON VALIDATION SET ####
        # if you are not using a validation set, skip the rest of the loop body
        correct, total = 0, 0
        with torch.no_grad():  # no gradients are needed for evaluation
            for inputs, targets in val_dl:
                inputs, targets = inputs.to(device), targets.to(device)  # move the data to the GPU if available
                yhat = NN(inputs.float())
                loss = criterion(yhat, targets.float())  # calculate the loss
                val_loss_batch.append(loss.item())  # collect the batch loss
                actual = targets.cpu().numpy()  # used for the validation accuracy over an epoch
                actual = actual.reshape((len(actual), 1))
                predicted = yhat.cpu().numpy().round()  # round to class values
                # assumed completion: count rounded predictions that match the targets
                correct += int((predicted == actual).sum())
                total += len(actual)
        # one validation value per epoch, averaged over all validation batches
        val_loss.append(sum(val_loss_batch) / len(val_loss_batch))
        val_accuracy.append(correct / total)
        print(c)

    print("################### Training finished ##########################")
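
Since the last mini-batch of an epoch is usually smaller than `batch_size`, a plain mean of per-batch losses gives that batch slightly too much weight. One way to get a single loss value per epoch that does not depend on how the data splits into batches is to weight each batch loss by its batch size. The following is only a minimal sketch of that idea: `weighted_epoch_loss` is a hypothetical helper, not a PyTorch function, and the numbers are invented for illustration.

```python
def weighted_epoch_loss(batch_losses, batch_sizes):
    """Return the per-sample mean loss over an epoch.

    batch_losses: mean loss of each mini-batch (plain Python floats)
    batch_sizes:  number of samples in each mini-batch
    """
    if len(batch_losses) != len(batch_sizes):
        raise ValueError("need one batch size per batch loss")
    total_samples = sum(batch_sizes)
    if total_samples == 0:
        raise ValueError("no samples were seen in this epoch")
    # Undo the per-batch averaging, then average over all samples at once.
    total_loss = sum(l * n for l, n in zip(batch_losses, batch_sizes))
    return total_loss / total_samples


# Two batches: 3 samples with mean loss 1.0, then 1 sample with loss 3.0.
print(weighted_epoch_loss([1.0, 3.0], [3, 1]))  # 1.5
```

In a training loop, the sizes could be collected with `len(inputs)` for each batch and the losses with `loss.item()`, assuming the criterion uses its default mean reduction.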