Hi. I’m an undergraduate studying plasma physics, trying to apply deep learning to my research. I don’t have local ML support, so I have been studying it on my own, with some help from AI tools. I’m seeking practical advice from PyTorch experts. I won’t explain all the physics details and will focus on the ML/code side.

First of all, I’m not good at English, so I apologize if some of my sentences sound awkward or rude. I got some help from AI tools for this as well. I’m not sure whether my topic’s format is appropriate; I’m happy to modify it anytime if needed.

First, thank you for taking the time to read this.

Problem

-

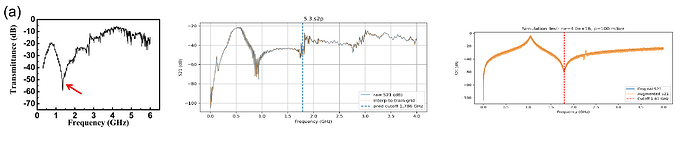

Input: a 1D spectrum (frequency vs. response) that typically has an N-shaped curve with a single clear minimum.

-

Goal: regress the frequency xxx at that minimum (scalar output).

-

Motivation: classic fitting or “find local minimum” rules can fail when the curve flattens under some conditions or is noisy.

Approach (simulation → real)

I can generate synthetic spectra from a simple circuit model, then add perturbations (noise, drift, local bumps) to mimic measurement conditions.
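The circuit model itself isn’t given here, so as a purely hypothetical stand-in the sketch below uses a series RLC branch shunted across a matched 50 Ω line, for which S21 = 2Z / (2Z + Z0). The property it illustrates is that the label (the dip frequency) is computed from the sampled circuit parameters rather than searched for on the grid, so it stays exact even when the curve is flat. The function names, component values, and grid are placeholders.

```python
import numpy as np


def shunt_rlc_s21_db(f_hz, r_ohm, l_h, c_f, z0_ohm=50.0):
    """|S21| in dB for a series RLC branch shunted across a Z0-matched line.

    HYPOTHETICAL stand-in for the (unspecified) circuit model:
    S21 = 2Z / (2Z + Z0), with Z = R + jwL + 1/(jwC).
    """
    w = 2 * np.pi * f_hz
    z = r_ohm + 1j * w * l_h + 1.0 / (1j * w * c_f)
    s21 = 2 * z / (2 * z + z0_ohm)
    return 20 * np.log10(np.abs(s21))


def resonance_hz(l_h, c_f):
    # |S21| is smallest where the branch reactance is zero: f0 = 1 / (2*pi*sqrt(L*C)),
    # so the regression label comes straight from the sampled circuit parameters.
    return 1.0 / (2 * np.pi * np.sqrt(l_h * c_f))


rng = np.random.default_rng(0)
freq = np.linspace(1e6, 30e6, 512)       # placeholder training grid in Hz
l_h = 1e-6                               # placeholder inductance (H)
c_f = rng.uniform(50e-12, 500e-12)       # random C moves f0 between ~7 and ~22.5 MHz
label = resonance_hz(l_h, c_f)
s21_db = shunt_rlc_s21_db(freq, r_ohm=2.0, l_h=l_h, c_f=c_f)
```

In this toy model R sets the depth of the dip (|S21| = 2R / (2R + Z0) at f0), so sampling R as well would vary how deep and how flat the minimum looks.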

Model & inputs: a small 1D CNN with 3 input channels: [frequency axis, S21 in dB, first derivative dS/df]. The derivative is computed with a Savitzky–Golay filter (fixed window/polyorder) after resampling onto a fixed training grid.
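Building the three channels can be sketched with SciPy’s `savgol_filter`, which returns the smoothed derivative directly when `deriv=1` is passed; `delta` is the grid spacing, so the result is in dB per Hz rather than dB per sample. The helper name is hypothetical, and the window length and polynomial order are placeholders since the actual fixed values aren’t stated.

```python
import numpy as np
from scipy.signal import savgol_filter


def make_input_channels(freq_hz, s21_db, window_length=21, polyorder=3):
    """Hypothetical helper: stack [freq, S21 (dB), dS21/df] into a (3, N) float32 array.

    Assumes freq_hz is already the fixed, uniformly spaced training grid.
    The same window_length/polyorder must be used for synthetic and real traces.
    """
    df = freq_hz[1] - freq_hz[0]
    # deriv=1 with delta=df gives the derivative per Hz; mode="interp" fits a
    # polynomial to the edge windows instead of padding the signal.
    ds_df = savgol_filter(s21_db, window_length, polyorder, deriv=1, delta=df, mode="interp")
    return np.stack([freq_hz, s21_db, ds_df]).astype(np.float32)
```

The three channels end up on very different scales (Hz, dB, and dB/Hz), so some per-channel scaling before the CNN is usually needed.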

Training: synthetic data only (a large set). Loss = SmoothL1, optimizer = Adam, scheduler = ReduceLROnPlateau.
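A minimal sketch of this model and training setup in PyTorch. The class and function names, channel widths, kernel size, and scheduler settings are hypothetical placeholders, not the actual values used here.

```python
import torch
from torch import nn


class MinimumRegressor(nn.Module):
    """Hypothetical model: (Conv-BN-ReLU-MaxPool) x len(widths) -> global average pool -> Linear."""

    def __init__(self, in_ch=3, widths=(16, 32, 64), kernel_size=7):
        super().__init__()
        layers, ch = [], in_ch
        for w in widths:
            layers += [
                # bias=False: a conv bias directly before BatchNorm is cancelled by BN's mean subtraction
                nn.Conv1d(ch, w, kernel_size, padding=kernel_size // 2, bias=False),
                nn.BatchNorm1d(w),
                nn.ReLU(inplace=True),
                nn.MaxPool1d(2),
            ]
            ch = w
        self.features = nn.Sequential(*layers)
        self.head = nn.Linear(ch, 1)

    def forward(self, x):                    # x: (batch, 3, N)
        h = self.features(x).mean(dim=-1)    # global average pooling over the grid
        return self.head(h).squeeze(-1)      # (batch,) predicted minimum position


def fit(model, train_loader, val_loader, epochs=20, lr=1e-3):
    """Hypothetical training loop: SmoothL1 + Adam + ReduceLROnPlateau on validation loss."""
    loss_fn = nn.SmoothL1Loss()
    opt = torch.optim.Adam(model.parameters(), lr=lr)
    sched = torch.optim.lr_scheduler.ReduceLROnPlateau(opt, mode="min", factor=0.5, patience=3)
    val_loss = float("nan")
    for _ in range(epochs):
        model.train()
        for x, y in train_loader:
            opt.zero_grad()
            loss_fn(model(x), y).backward()
            opt.step()
        model.eval()
        total, count = 0.0, 0
        with torch.no_grad():
            for x, y in val_loader:
                total += loss_fn(model(x), y).item() * len(y)
                count += len(y)
        val_loss = total / count
        sched.step(val_loss)   # ReduceLROnPlateau is stepped with the monitored value
    return val_loss
```

One property of this design worth keeping in mind: convolutions followed by global average pooling discard where along the grid a feature occurred, so apart from padding effects the only source of absolute position is the frequency channel. That may make it harder for the network to report where the minimum is than to report that there is one.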

Inference: real traces are parsed, resampled onto the same grid, and their derivative is computed the same way before they are passed to the model.
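Resampling an irregular real trace onto the training grid could look like the NumPy sketch below (the function name is hypothetical). One detail relevant to boundary effects: `np.interp` silently holds the first/last measured value constant outside the measured range, so the sketch also returns a mask of the grid points that the measurement actually covers.

```python
import numpy as np


def resample_to_grid(f_meas, s21_meas_db, f_grid):
    """Hypothetical helper: linearly interpolate an irregular trace onto the fixed grid.

    Returns (resampled values, mask). The mask is False where the grid lies
    outside the measured frequency range, i.e. where np.interp extrapolated
    by repeating the edge value.
    """
    order = np.argsort(f_meas)                 # np.interp expects increasing x values
    f_sorted = np.asarray(f_meas, dtype=float)[order]
    s_sorted = np.asarray(s21_meas_db, dtype=float)[order]
    resampled = np.interp(f_grid, f_sorted, s_sorted)
    inside = (f_grid >= f_sorted[0]) & (f_grid <= f_sorted[-1])
    return resampled, inside
```

Those flat, extrapolated stretches also produce a zero derivative, a pattern that never appears in the synthetic data, so it may be worth cropping or masking them.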

The first and second figures below show what the actual experimental data look like, and the third shows what the synthetic data look like.

What I observe

On the synthetic validation set the model performs reasonably, but on real data its predictions are consistently biased in one direction (a systematic offset).
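To separate a pure offset from ordinary scatter, it can help to hand-label a few real traces and compare the mean signed error with the mean absolute error. A minimal NumPy sketch, assuming such labels exist; the function name and bootstrap settings are hypothetical.

```python
import numpy as np


def signed_bias(pred, true, n_boot=2000, seed=0):
    """Hypothetical helper: mean signed error (pred - true), its bootstrap 95% interval, and the MAE."""
    err = np.asarray(pred, dtype=float) - np.asarray(true, dtype=float)
    rng = np.random.default_rng(seed)
    # resample the labeled traces with replacement and recompute the mean each time
    idx = rng.integers(0, len(err), size=(n_boot, len(err)))
    boot_means = err[idx].mean(axis=1)
    lo, hi = np.percentile(boot_means, [2.5, 97.5])
    return err.mean(), (lo, hi), np.abs(err).mean()
```

If the mean signed error is close to the MAE and its interval excludes zero, most of the real-data error is a shift; plotting the signed error against the true frequency also shows whether that shift is constant or frequency-dependent.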

Preprocessing (grid + derivative method) is kept the same between training and inference.

What I’d like feedback on

Sim-to-real feasibility: is it realistic to train only on synthetic spectra and generalize to real traces? If so, what are the key practices (preprocessing, normalization, augmentations, loss/scheduler choices) that usually make this work?
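On the normalization point, one common option is sketched here: map the frequency channel (and the target) onto [0, 1] using the fixed grid limits, and standardize the S21 and derivative channels per trace. This is an assumption about what might help, not a known fix: per-trace standardization discards the absolute dB level, which is only appropriate if that level carries no information about where the minimum is. All function names are hypothetical.

```python
import numpy as np


def normalize_channels(x, f_min, f_max, eps=1e-8):
    """Hypothetical per-trace normalization of a (3, N) [freq, S21_dB, dS/df] array.

    - frequency is mapped to [0, 1] with the fixed grid limits (identical for every trace);
    - S21 and its derivative are standardized per trace, which removes absolute
      level and gain differences between simulation and measurement.
    """
    out = np.empty_like(x, dtype=np.float32)
    out[0] = (x[0] - f_min) / (f_max - f_min)
    for c in (1, 2):
        out[c] = (x[c] - x[c].mean()) / (x[c].std() + eps)
    return out


def normalize_target(f0, f_min, f_max):
    # regress the minimum on the same [0, 1] scale as the frequency channel
    return (f0 - f_min) / (f_max - f_min)


def denormalize_target(y, f_min, f_max):
    # map a [0, 1] prediction back to Hz
    return f_min + y * (f_max - f_min)
```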

Non-DL or hybrid options: if deep learning isn’t strictly necessary, are there robust alternatives or hybrids you’ve found effective for predicting the minimum’s x-location when curves can be flat or noisy?
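As one classical baseline to compare against, the sketch below smooths the trace with a Savitzky–Golay filter, takes the discrete argmin, and refines it with a three-point parabolic fit, which gives sub-grid resolution. It assumes a uniform grid and a single dominant minimum; the function name is hypothetical and the window length is a placeholder that would need tuning to the noise level.

```python
import numpy as np
from scipy.signal import savgol_filter


def min_position_parabolic(freq, s21_db, window_length=31, polyorder=2):
    """Hypothetical baseline: smooth, take the discrete argmin, refine with a 3-point parabola.

    Assumes a uniform grid. Too wide a window can bias flat or asymmetric dips.
    """
    y = savgol_filter(s21_db, window_length, polyorder)
    i = int(np.clip(np.argmin(y), 1, len(y) - 2))   # keep the 3-point stencil inside the array
    y0, y1, y2 = y[i - 1], y[i], y[i + 1]
    denom = y0 - 2 * y1 + y2
    # vertex of the parabola through (-1, y0), (0, y1), (1, y2), in grid steps
    shift = 0.5 * (y0 - y2) / denom if denom > 0 else 0.0
    return freq[i] + shift * (freq[1] - freq[0])
```

A hybrid variant would use this (or the CNN) only for a coarse estimate and then fit the circuit model locally around it, for example with `scipy.optimize.curve_fit`.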

Mixing real data: if I can label a small set of real traces, how would you mix them with the synthetic data? (e.g., pretrain on synthetic data and then fine-tune only the head/BN layers on real data, or use a fixed ratio like 90:10 or 80:20?) Any rules of thumb?
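The “fine-tune only the head/BN” option amounts to switching off `requires_grad` everywhere except in the BatchNorm layers and the final regression layer. A minimal sketch, assuming the head is stored in an attribute named `head` (a hypothetical name) and the BN layers are `nn.BatchNorm1d`.

```python
from torch import nn


def freeze_for_finetune(model: nn.Module, head_name: str = "head") -> list:
    """Hypothetical helper: freeze everything except BatchNorm layers and the regression head.

    `head_name` is an assumed attribute name; adapt it to the real model.
    Returns the parameters that remain trainable.
    """
    for p in model.parameters():
        p.requires_grad = False
    for name, module in model.named_modules():
        if name == head_name or isinstance(module, nn.BatchNorm1d):
            for p in module.parameters():
                p.requires_grad = True
    return [p for p in model.parameters() if p.requires_grad]
```

Independently of `requires_grad`, BatchNorm layers in `train()` mode keep updating their running mean and variance from the batches they see, so fine-tuning on real traces also re-estimates those statistics on real data. The returned list can be passed straight to an optimizer, e.g. `torch.optim.Adam(params, lr=1e-4)`.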

Model/code review: with inputs [freq, S21, dS/df], a small 1D CNN (Conv-BN-ReLU-Pool blocks → GAP → FC), and SmoothL1 + Adam + a Plateau scheduler, are there any obvious anti-patterns or easy wins (e.g., channel normalization, a scheduler such as cosine restarts or one-cycle, initialization details for ReLU, loss reweighting near the minimum, handling boundary effects after resampling)?
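Two of the easy wins listed here, He (Kaiming) initialization for ReLU layers and a one-cycle learning-rate schedule, can be sketched as follows. Function names and learning-rate values are placeholders. PyTorch’s default `Conv1d` initialization is already a Kaiming-uniform variant, so the explicit init is a modest change rather than a fix.

```python
import torch
from torch import nn


def init_relu_net(model: nn.Module) -> None:
    """Hypothetical helper: He (Kaiming) init for conv layers followed by ReLU; BN reset to identity."""
    for m in model.modules():
        if isinstance(m, nn.Conv1d):
            nn.init.kaiming_normal_(m.weight, mode="fan_in", nonlinearity="relu")
            if m.bias is not None:
                nn.init.zeros_(m.bias)
        elif isinstance(m, nn.BatchNorm1d):
            nn.init.ones_(m.weight)
            nn.init.zeros_(m.bias)
    # Linear layers keep PyTorch's default init: the final head is not followed by a ReLU.


def make_one_cycle(model: nn.Module, steps_per_epoch: int, epochs: int, max_lr: float = 3e-3):
    """Hypothetical helper: Adam + OneCycleLR (placeholder max_lr)."""
    opt = torch.optim.Adam(model.parameters(), lr=max_lr)
    sched = torch.optim.lr_scheduler.OneCycleLR(
        opt, max_lr=max_lr, epochs=epochs, steps_per_epoch=steps_per_epoch
    )
    return opt, sched   # call sched.step() after every opt.step(), not once per epoch
```

Unlike `ReduceLROnPlateau`, which is stepped once per epoch with a validation metric, `OneCycleLR` is stepped after every optimizer step and needs the total number of steps up front.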

Suggestions for reducing the systematic bias on real data are especially welcome.

Extra details (compact)

Data: ~100k synthetic traces on a fixed grid; the real .s2p files have irregular frequency grids and are resampled onto the training grid.
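For reference, extracting |S21| from a Touchstone .s2p file could look like the minimal reader below. It is a simplified, hypothetical sketch: it handles the `# <unit> S <MA|DB|RI> R <z0>` option line, `!` comments, and one 9-number data row per frequency (2-port column order S11, S21, S12, S22), and it ignores noise parameters and Touchstone 2.0 keywords. A maintained library such as scikit-rf is the safer choice for real files.

```python
import numpy as np

_UNIT = {"hz": 1.0, "khz": 1e3, "mhz": 1e6, "ghz": 1e9}


def read_s2p_s21_db(text):
    """Hypothetical minimal Touchstone v1 .s2p reader returning (freq_hz, |S21| in dB)."""
    unit, fmt = 1e9, "ma"               # Touchstone v1 defaults: GHz and MA
    freqs, s21 = [], []
    for raw in text.splitlines():
        line = raw.split("!", 1)[0].strip()   # drop comments
        if not line:
            continue
        if line.startswith("#"):              # option line
            for tok in line[1:].lower().split():
                if tok in _UNIT:
                    unit = _UNIT[tok]
                elif tok in ("ma", "db", "ri"):
                    fmt = tok
            continue
        vals = [float(v) for v in line.split()]
        if len(vals) != 9:
            continue                           # e.g. noise-parameter rows (5 values)
        a, b = vals[3], vals[4]                # S21 pair
        if fmt == "db":
            mag_db = a
        elif fmt == "ma":
            mag_db = 20 * np.log10(a)
        else:                                  # "ri": real/imaginary parts
            mag_db = 20 * np.log10(np.hypot(a, b))
        freqs.append(vals[0] * unit)
        s21.append(mag_db)
    return np.array(freqs), np.array(s21)
```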

Augmentations: additive noise (pressure-dependent scale), slow drift, and small local bumps, all applied after generating the clean model curve; the derivative is computed afterwards.
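The augmentation step might be sketched as below, working in dB on the clean curve before the derivative is taken. Everything here is a hypothetical placeholder: `noise_db` stands in for the pressure-dependent noise scale, and the drift and bump magnitudes are arbitrary.

```python
import numpy as np


def augment_trace(freq_hz, s21_db_clean, rng, noise_db=0.2, drift_db=0.5, n_bumps=2, bump_db=0.5):
    """Hypothetical augmentation: white noise, slow drift, and narrow Gaussian bumps (all in dB)."""
    n = len(freq_hz)
    u = (freq_hz - freq_hz[0]) / (freq_hz[-1] - freq_hz[0])   # grid position in [0, 1]
    y = s21_db_clean + rng.normal(0.0, noise_db, n)            # additive white noise
    # slow drift: random linear + quadratic tilt across the band
    y = y + rng.uniform(-drift_db, drift_db) * u + rng.uniform(-drift_db, drift_db) * u**2
    for _ in range(n_bumps):                                   # small local bumps
        center, width = rng.uniform(0.0, 1.0), rng.uniform(0.005, 0.03)
        y = y + rng.uniform(-bump_db, bump_db) * np.exp(-0.5 * ((u - center) / width) ** 2)
    return y
```

One design choice worth checking against a one-sided bias: a tilt added to a dB curve moves the observed minimum away from the clean-model minimum. With a random-sign tilt that shift averages out in training, whereas frequency-dependent loss in a real setup often tilts the curve in a consistent direction, so it matters whether the label is the clean-model minimum (as in this sketch) or the minimum of the perturbed curve.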

Environment: Colab, PyTorch.

I can share my code if needed. Thanks in advance for any pointers!