I want to compute a categorical cross-entropy loss in PyTorch that behaves like TensorFlow's `CategoricalCrossentropy`.

I've read the answers on the discussion forum, but I'm still not clear on what to do.

Here is what I tried.

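My model output `output_from_model` and the target `output_truth` both have shape `(1, 1024, 15)`:

```python
import numpy as np

output_truth = np.ones((1, 1024, 15))
```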

If I compute the loss for these shapes with Keras:

```python
import tensorflow as tf

# Keras losses take (y_true, y_pred), in that order
tf.keras.losses.CategoricalCrossentropy()(output_truth, output_from_model)
```

it returns a loss value with no error:

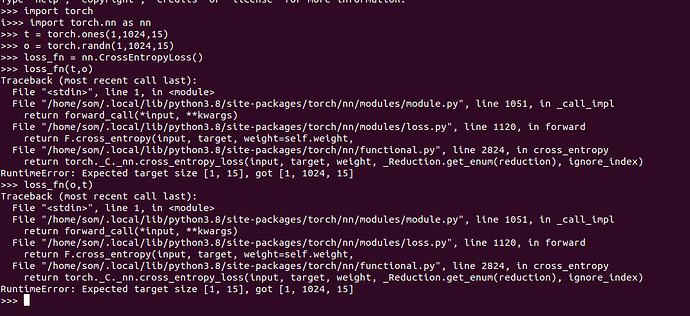
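For reference, here is a minimal NumPy sketch of what the Keras call above computes with its default settings (`from_logits=False`). It is based on my reading of how Keras implements this loss, not on TensorFlow itself: predictions are renormalized along the last (class) axis, clipped by a small epsilon (assumed here to be `1e-7`, the Keras default), and the per-position losses are averaged. The function name `keras_style_categorical_crossentropy` is hypothetical.

```python
import numpy as np

def keras_style_categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Approximates tf.keras.losses.CategoricalCrossentropy() with its
    defaults: from_logits=False, classes on the last axis, and the loss
    averaged over every remaining position (assumed eps = 1e-7)."""
    y_true = np.asarray(y_true, dtype=np.float64)
    y_pred = np.asarray(y_pred, dtype=np.float64)
    # Rescale predictions so each position sums to 1 over the class axis.
    probs = y_pred / y_pred.sum(axis=-1, keepdims=True)
    # Clip away exact 0 and 1 before taking the log.
    probs = np.clip(probs, eps, 1.0 - eps)
    # Per-position loss, e.g. shape (1, 1024) for (1, 1024, 15) inputs.
    per_position = -(y_true * np.log(probs)).sum(axis=-1)
    return per_position.mean()

# Usage: uniform predictions against one-hot targets (random stand-in data).
rng = np.random.default_rng(0)
labels = rng.integers(0, 15, size=(1, 1024))
y_true = np.eye(15)[labels]                 # one-hot, shape (1, 1024, 15)
y_pred = np.full((1, 1024, 15), 1.0 / 15)   # uniform probabilities
loss = keras_style_categorical_crossentropy(y_true, y_pred)
```

With one-hot targets and uniform predictions the loss is `log(15)`. With an all-ones target such as `output_truth`, all 15 classes contribute at every position, so the same predictions give `15 * log(15)`; cross-entropy targets are normally one-hot or probability vectors.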

But if I try the same thing in PyTorch:

```python
import torch

torch.nn.CrossEntropyLoss()(torch.tensor(output_from_model), torch.tensor(output_truth))
```

I get the following error:

---------------------------------------------------------------------------

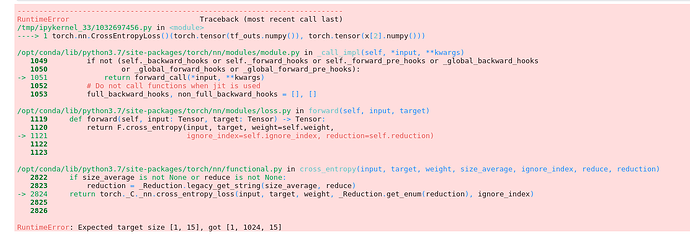

RuntimeError Traceback (most recent call last)

/tmp/ipykernel_33/1032697456.py in <module>

----> 1 torch.nn.CrossEntropyLoss()(torch.tensor(tf_outs.numpy()), torch.tensor(x[2].numpy()))

/opt/conda/lib/python3.7/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

1049 if not (self._backward_hooks or self._forward_hooks or self._forward_pre_hooks or _global_backward_hooks

1050 or _global_forward_hooks or _global_forward_pre_hooks):

-> 1051 return forward_call(*input, **kwargs)

1052 # Do not call functions when jit is used

1053 full_backward_hooks, non_full_backward_hooks = [], []

/opt/conda/lib/python3.7/site-packages/torch/nn/modules/loss.py in forward(self, input, target)

1119 def forward(self, input: Tensor, target: Tensor) -> Tensor:

1120 return F.cross_entropy(input, target, weight=self.weight,

-> 1121 ignore_index=self.ignore_index, reduction=self.reduction)

1122

1123

/opt/conda/lib/python3.7/site-packages/torch/nn/functional.py in cross_entropy(input, target, weight, size_average, ignore_index, reduce, reduction)

2822 if size_average is not None or reduce is not None:

2823 reduction = _Reduction.legacy_get_string(size_average, reduce)

-> 2824 return torch._C._nn.cross_entropy_loss(input, target, weight, _Reduction.get_enum(reduction), ignore_index)

2825

2826

RuntimeError: Expected target size [1, 15], got [1, 1024, 15]
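The error comes from PyTorch's shape convention. `torch.nn.CrossEntropyLoss` expects its input as `(N, C)` or `(N, C, d1, ..., dK)`, with classes on dimension 1, and in its basic form a target of integer class indices shaped `(N, d1, ..., dK)`. Given an input of shape `(1, 1024, 15)`, it treats 1024 as the number of classes and 15 as a spatial dimension, so it expects a target of shape `(1, 15)`, which is exactly what the message says. Keras instead puts classes on the last axis and takes one-hot targets. A second difference: `CrossEntropyLoss` applies log-softmax itself and so expects raw logits, which corresponds to Keras with `from_logits=True`, not the default.

The NumPy sketch below mirrors both conventions to illustrate that moving the class axis to position 1 and converting one-hot targets to indices makes the two agree. It assumes `output_from_model` holds logits; the helper names are hypothetical, and this illustrates the semantics rather than either library's implementation.

```python
import numpy as np

def log_softmax(x, axis):
    # Numerically stable log-softmax along `axis`.
    shifted = x - x.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def torch_style_cross_entropy(logits, target_idx):
    """Mirrors torch.nn.CrossEntropyLoss() defaults: logits shaped
    (N, C, d1, ...) with classes on axis 1, integer class indices
    shaped (N, d1, ...), and the mean over all positions."""
    log_probs = log_softmax(logits, axis=1)
    # Log-probability of the target class at each position: (N, 1, d1, ...).
    picked = np.take_along_axis(log_probs, target_idx[:, None, ...], axis=1)
    return -picked.mean()

def keras_style_cce_from_logits(y_true_onehot, logits):
    """Mirrors CategoricalCrossentropy(from_logits=True): classes on the
    last axis, one-hot targets, mean over all positions."""
    return -(y_true_onehot * log_softmax(logits, axis=-1)).sum(axis=-1).mean()

# Usage with the shapes from the question (random stand-in data).
rng = np.random.default_rng(0)
logits = rng.normal(size=(1, 1024, 15))      # channels-last, like output_from_model
labels = rng.integers(0, 15, size=(1, 1024))
onehot = np.eye(15)[labels]                  # shape (1, 1024, 15)

tf_like = keras_style_cce_from_logits(onehot, logits)
# Move classes to axis 1 -> (1, 15, 1024); one-hot -> indices -> (1, 1024).
torch_like = torch_style_cross_entropy(np.transpose(logits, (0, 2, 1)),
                                       onehot.argmax(axis=-1))
```

In PyTorch itself, assuming one-hot targets, the corresponding call would be along the lines of `torch.nn.CrossEntropyLoss()(torch.tensor(output_from_model).permute(0, 2, 1), torch.tensor(output_truth).argmax(dim=-1))`. If `output_from_model` holds probabilities instead (matching Keras's default `from_logits=False`), passing `torch.log(...)` of the permuted probabilities to `torch.nn.NLLLoss` is the closer match, apart from Keras's renormalization and epsilon clipping. Since PyTorch 1.10, `CrossEntropyLoss` also accepts probability targets with the same shape as the input, so on recent versions a permuted `output_truth` can be passed directly.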