Hello,

I am trying to get outputs from a GRU with:

input = embedded sequence of dimensions [146, 1, 256]

h_0 = hidden state of dimension [1, 1, 128]

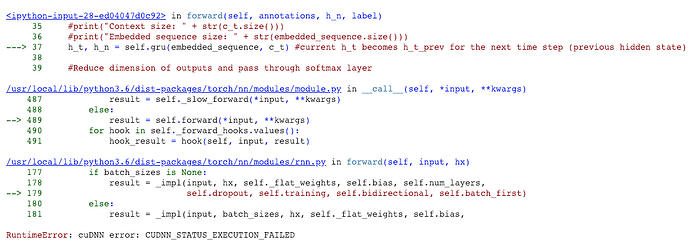

I have worked with PyTorch GRUs before, but with these inputs I am suddenly getting the error `cuDNN error: CUDNN_STATUS_EXECUTION_FAILED`, even though the dimensions seem to match the requirements in the nn documentation and all tensors have been moved to my ‘cuda’ device.
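For reference, the shapes described above can be checked on the CPU with a minimal sketch like the one below. It assumes a single-layer, unidirectional `nn.GRU` with `input_size=256`, `hidden_size=128` and the default `batch_first=False`; the actual model code is only available in the image, so these settings are guesses based on the stated dimensions.

```python
import torch
import torch.nn as nn

# Assumed configuration (not taken from the original code):
# single layer, unidirectional, batch_first=False.
gru = nn.GRU(input_size=256, hidden_size=128)

embedded = torch.randn(146, 1, 256)  # (seq_len, batch, input_size)
h_0 = torch.zeros(1, 1, 128)         # (num_layers * num_directions, batch, hidden_size)

output, h_n = gru(embedded, h_0)
print(output.shape)  # torch.Size([146, 1, 128])
print(h_n.shape)     # torch.Size([1, 1, 128])
```

If this runs cleanly, the shapes themselves are compatible and the failure is more likely to come from the data fed into an earlier layer.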

I have also checked my RAM usage and have plenty free, so I am confused.

SOLVED:

When using an embedding layer, the num_embeddings parameter must be larger than the largest index you pass in, because valid indices run from 0 to num_embeddings - 1. If your indices start at 1, that means num_embeddings should be the size of your vocabulary + 1. Should you accidentally provide an index outside this range (e.g. your num_embeddings is set to 111 and you provide 111 as your last index rather than 110), you will be met with an unhelpful CUDA error like the one shown above.
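A small guard in front of the embedding layer can turn this into a readable error. The sketch below is only an illustration: `check_indices` is a hypothetical helper, not part of PyTorch, and the sizes (111 embeddings, dimension 256) are taken from the example numbers in this thread.

```python
import torch
import torch.nn as nn

def check_indices(indices: torch.Tensor, embedding: nn.Embedding) -> None:
    """Hypothetical helper: raise a readable error for out-of-range indices."""
    lo, hi = int(indices.min()), int(indices.max())
    if lo < 0 or hi >= embedding.num_embeddings:
        raise IndexError(
            f"index range [{lo}, {hi}] is outside the valid range "
            f"[0, {embedding.num_embeddings - 1}]"
        )

embedding = nn.Embedding(num_embeddings=111, embedding_dim=256)

ok = torch.tensor([0, 5, 110])   # 110 is the largest valid index
bad = torch.tensor([0, 5, 111])  # 111 is one past the end

check_indices(ok, embedding)     # passes silently
embedded_ok = embedding(ok)      # shape (3, 256)

message = None
try:
    check_indices(bad, embedding)
except IndexError as err:
    message = str(err)
    print(message)
```

The min/max calls force a device sync if the indices live on the GPU, so a check like this is probably best kept for debugging rather than left in a training loop.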

A helpful ‘index provided out of bounds’ error from the nn.Embedding layer would be nice if possible.

Good to hear you’ve found the issue!

I would usually recommend running your code on the CPU first to check whether it fails there, since the CPU error messages are usually clearer than the CUDA ones.
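As a rough illustration of the CPU check, the snippet below feeds an out-of-range index to an `nn.Embedding` that lives on the CPU. The sizes are the example numbers from this thread, and the exact exception type and message may differ between PyTorch versions, so both `IndexError` and `RuntimeError` are caught.

```python
import torch
import torch.nn as nn

# Modules are created on the CPU unless moved elsewhere.
embedding = nn.Embedding(num_embeddings=111, embedding_dim=256)
bad = torch.tensor([111])  # valid indices are 0..110

caught = None
try:
    embedding(bad)
except (IndexError, RuntimeError) as err:
    # On the CPU the failure is raised right at the offending call.
    caught = err
    print(type(err).__name__, err)
```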

Also, if you are running into an error using the GPU (while CPU works fine), you should launch your script using `CUDA_LAUNCH_BLOCKING=1 python script.py args`. This will synchronize your CUDA calls, so that the error is thrown at the corresponding line of code. This cannot be ensured with the default behavior, since CUDA calls are asynchronous.
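If changing the launch command is inconvenient (for example in a notebook), the same variable can be set from Python instead. This is a sketch that assumes the variable is set before the first CUDA call is made; putting it at the very top of the script, before importing torch, is the safest place.

```python
import os

# Must be in place before CUDA is initialized; setting it later in the
# process is assumed to have no effect.
os.environ["CUDA_LAUNCH_BLOCKING"] = "1"

# Import torch and build the model only after this point.
print(os.environ["CUDA_LAUNCH_BLOCKING"])
```

Synchronous launches slow execution down, so this is something to enable while debugging and remove afterwards.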


Awesome, thanks very much. I will bear this in mind in future.