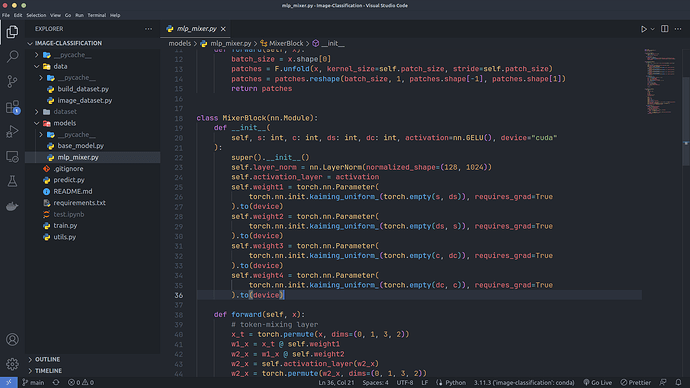

I have defined 4 weight matrices, as you can see in the image, but my model, which is a subclass of nn.Module, does not recognize these matrices as its parameters. Can anyone help me resolve this problem?

The .to() call is a differentiable operation and will create non-leaf tensors which won’t be registered in the parent module.

Call model.to(device) instead or call .to() on the internal tensor instead of the nn.Parameter.
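A minimal sketch of the difference, not the code from the screenshot: the class names `Broken` and `Fixed` are hypothetical, and a dtype conversion stands in for the device move so the example also runs on a CPU-only machine (calling `.to("cpu")` on a tensor that is already on the CPU returns the same object and would hide the problem).

```python
import torch
import torch.nn as nn


class Broken(nn.Module):
    """Hypothetical module: .to() is called on the nn.Parameter itself."""

    def __init__(self, dtype=torch.float64):
        super().__init__()
        # The conversion is a differentiable op, so the result is a plain,
        # non-leaf tensor and is stored as an ordinary attribute.
        self.w = nn.Parameter(torch.randn(4, 4)).to(dtype)


class Fixed(nn.Module):
    """Hypothetical module: .to() is called on the inner tensor first."""

    def __init__(self, dtype=torch.float64):
        super().__init__()
        # Convert the raw tensor, then wrap it, so the attribute is a
        # real nn.Parameter and gets registered.
        self.w = nn.Parameter(torch.randn(4, 4).to(dtype))


broken, fixed = Broken(), Fixed()
print(len(list(broken.parameters())), broken.w.is_leaf)
print(len(list(fixed.parameters())), fixed.w.is_leaf)
```

With `Fixed`, a later `model.to(device)` moves the registered parameter in place, so no per-tensor `.to()` call is needed inside `__init__`.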

PS: it’s better to post code snippets by wrapping them into three backticks ``` instead of screenshots.

I have fixed my code using your solution, but it still does not work. Can you suggest another solution?

Could you post a minimal and executable code snippet reproducing the issue, please?

This is my code for the MlpMixer class:

    class MlpMixer(nn.Module):
        def __init__(
            self,
            patch_size: int,
            s: int,
            c: int,
            ds: int,
            dc: int,
            num_mlp_blocks: int,
            num_classes: int,
        ):
            self.c = c
            self.s = s
            self.ds = ds
            self.dc = dc
            super().__init__()
            self.num_classes = num_classes
            super().__init__()
            self.mixer_blocks = [MixerBlock(s, c, ds, dc) for i in range(num_mlp_blocks)]
            self.num_classes = num_classes
            self.classifier = nn.Sequential(nn.Flatten(), nn.Dropout(0.2))
            self.patches_extract = Patches(patch_size)

        def forward(self, x):
            patches = self.patches_extract(x)
            for block in self.mixer_blocks:
                patches = block(patches)
            output = self.classifier(patches)
            if self.num_classes == 2:
                output = nn.Linear(self.c * self.s, 1)(output)
                output = nn.Sigmoid()(output)
            else:
                output = nn.Linear(self.c * self.s, self.num_classes)(output)
                output = nn.Softmax()(output)
            return output

This is my code for testing

    input_tensor = torch.rand(16, 3, 224, 224)
    model = MlpMixer(16, 196, 768, 2048, 512, 8, 2)
    for param in model.parameters():
        print(param)

and the result is that nothing is printed.

Your code is not executable so I cannot confirm what exactly is broken, but self.mixer_blocks should not be a plain Python list but an nn.ModuleList object to properly register the submodules.
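A small sketch of the difference, assuming `nn.Linear(8, 8)` as a stand-in for the `MixerBlock` from the post (whose definition was not shared); the class names `WithList` and `WithModuleList` are hypothetical:

```python
import torch
import torch.nn as nn


class WithList(nn.Module):
    """Submodules kept in a plain Python list are invisible to nn.Module."""

    def __init__(self, num_blocks=3):
        super().__init__()
        # nn.Linear stands in for MixerBlock here (assumption).
        self.blocks = [nn.Linear(8, 8) for _ in range(num_blocks)]


class WithModuleList(nn.Module):
    """nn.ModuleList registers every contained submodule."""

    def __init__(self, num_blocks=3):
        super().__init__()
        self.blocks = nn.ModuleList([nn.Linear(8, 8) for _ in range(num_blocks)])

    def forward(self, x):
        # Iterating over a ModuleList works like iterating over a list.
        for block in self.blocks:
            x = block(x)
        return x


print(len(list(WithList().parameters())))        # no parameters are found
print(len(list(WithModuleList().parameters())))  # weight and bias of each block

out = WithModuleList()(torch.rand(2, 8))
print(out.shape)
```

The same registration rule applies to layers built inside `forward`: a layer such as `nn.Linear` that is constructed there is re-created with fresh weights on every call and never appears in `model.parameters()`, so it is usually defined once in `__init__` instead.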

It works, thank you so much!