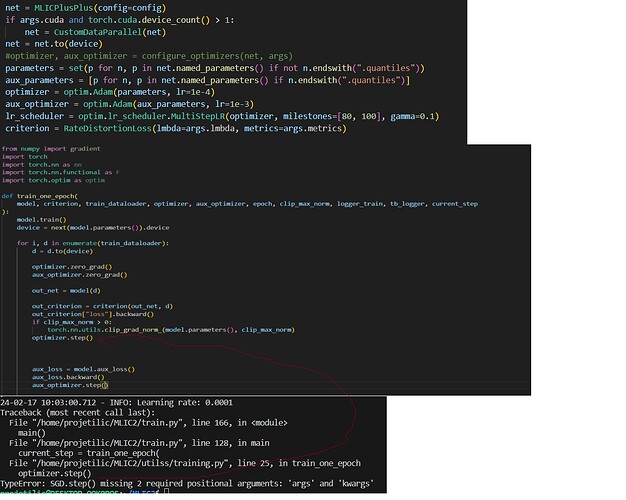

I’m currently working on a solution via PyTorch. I’m not going to share the exact solution but I will provide code that reproduces the issue I’m having.

I have a model defined as follows:

    import numpy as np
    import torch
    import torch.nn as nn
    from torch.optim import Adam

    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.fc1 = nn.Linear(10, 4)

        def forward(self, x):
            return nn.functional.relu(self.fc1(x))

Then I create an instance with my_model = Net(). Next, I create an Adam optimizer over its parameters and run one update step:

    optim = Adam(my_model.parameters())

    # create an example input
    inputs = torch.tensor(np.array([1, 1, 1, 1, 1, 2, 2, 2, 2, 2]), dtype=torch.float32, requires_grad=True)

    # get the outputs
    outputs = my_model(inputs)

    # compute gradients / backprop via an explicit upstream gradient
    outputs.backward(gradient=torch.tensor([1., 1., 1., 5.]))

    # store parameters before optimizer step
    before_step = list(my_model.parameters())[0].detach().numpy()

    # update parameters via
    optim.step()

    # collect parameters again
    after_step = list(my_model.parameters())[0].detach().numpy()

    # print whether the parameters are the same or not
    print(np.array_equal(before_step, after_step))  # Prints True
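One thing that may be worth ruling out is the comparison itself. For CPU tensors, detach().numpy() returns an array that shares memory with the parameter, and the optimizer updates parameters in place, so a "before" array taken this way would follow the update. The sketch below is a minimal check under that assumption. It uses a bare nn.Linear as a stand-in for the model (no ReLU, so every weight receives a non-zero gradient), and the names view_before and copy_before are illustrative only.

```python
import numpy as np
import torch
import torch.nn as nn
from torch.optim import Adam

torch.manual_seed(0)

layer = nn.Linear(10, 4)  # stand-in for the model in the post (assumption)
optim = Adam(layer.parameters())

inputs = torch.ones(10)
outputs = layer(inputs)
outputs.backward(gradient=torch.tensor([1., 1., 1., 5.]))

weight = layer.weight

# View: shares storage with the parameter, so it follows in-place updates.
view_before = weight.detach().numpy()
# Copy: independent snapshot taken before the optimizer step.
copy_before = weight.detach().clone().numpy()

optim.step()

after = weight.detach().numpy()

shares_memory = np.shares_memory(view_before, after)
view_equal = np.array_equal(view_before, after)
copy_equal = np.array_equal(copy_before, after)
print(shares_memory, view_equal, copy_equal)
```

If the view compares equal while the cloned snapshot does not, the parameters are being updated and only the comparison is misleading.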

I provided my model’s parameters to the Adam optimizer, so I’m not sure why the parameters aren’t updating. I know that in most cases one uses a loss function. I can’t do that in my case, but I assumed that if I passed the model parameters to the optimizer, it would know how to connect the two.
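On the missing loss function: calling backward with a gradient argument computes a vector-Jacobian product, which should populate the same .grad values as calling backward on the scalar (outputs * v).sum(). The sketch below checks this on a stand-in layer. The names layer, v, grad_vjp and grad_scalar are illustrative and not taken from the real solution.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

layer = nn.Linear(10, 4)  # stand-in for the model in the post (assumption)
inputs = torch.tensor([1., 1., 1., 1., 1., 2., 2., 2., 2., 2.])
v = torch.tensor([1., 1., 1., 5.])  # upstream gradient used in the post

# Path 1: no explicit loss, pass the upstream gradient directly.
torch.relu(layer(inputs)).backward(gradient=v)
grad_vjp = layer.weight.grad.clone()

# Reset accumulated gradients before the second pass.
layer.zero_grad()

# Path 2: build an equivalent scalar and call backward on it.
(torch.relu(layer(inputs)) * v).sum().backward()
grad_scalar = layer.weight.grad.clone()

grads_match = torch.allclose(grad_vjp, grad_scalar)
print(grads_match)
```

Either path leaves gradients on the parameters, which is what optim.step() reads, so the absence of a named loss function should not by itself prevent an update.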

Anyone know why the parameters aren’t getting updated?