Hello there, how are you doing?

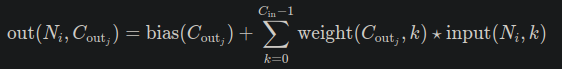

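I’m studying convolutional networks, and to check my understanding I compared the output of a single convolutional layer with the result produced by the function `correlate2d` from `scipy.signal`. Both results are the same. However, the PyTorch documentation for `nn.Conv2d` describes the output of a 2D convolution as

$$\text{out}(N_i, C_{\text{out}_j}) = \text{bias}(C_{\text{out}_j}) + \sum_{k=0}^{C_{\text{in}}-1} \text{weight}(C_{\text{out}_j}, k) \star \text{input}(N_i, k)$$

where $\star$ is the valid 2D cross-correlation operator.

So, because of the order of the operands of the cross-correlation operator in this formula (weight ⋆ input), shouldn’t the result be a flipped version of what I got?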
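To make the operand order in the documented formula concrete, here is a minimal NumPy sketch of out = bias + Σ_k weight ⋆ input. It assumes that ⋆ means sliding the first operand (the weight) unflipped over the second (the input), i.e. (w ⋆ x)[i, j] = Σ_{m,n} w[m, n] · x[i+m, j+n]. The helper names `star_valid` and `conv2d_forward` are hypothetical, and the sketch only covers stride 1, no padding, no dilation and a single unbatched sample:

```python
import numpy as np


def star_valid(w: np.ndarray, x: np.ndarray) -> np.ndarray:
    """Valid 2D cross-correlation w ⋆ x: slide w over x without flipping it.

    out[i, j] = sum over m, n of w[m, n] * x[i + m, j + n]
    """
    kh, kw = w.shape
    oh, ow = x.shape[0] - kh + 1, x.shape[1] - kw + 1
    out = np.zeros((oh, ow), dtype=np.result_type(w, x))
    for i in range(oh):
        for j in range(ow):
            out[i, j] = np.sum(w * x[i:i + kh, j:j + kw])
    return out


def conv2d_forward(weight: np.ndarray, x: np.ndarray, bias=None) -> np.ndarray:
    """out[j] = bias[j] + sum_k weight[j, k] ⋆ x[k] for an unbatched (C_in, H, W) input."""
    c_out, c_in = weight.shape[:2]
    out = np.stack([
        sum(star_valid(weight[j, k], x[k]) for k in range(c_in))
        for j in range(c_out)
    ])
    if bias is not None:
        out = out + bias[:, None, None]  # one bias value per output channel
    return out


# Same kernel (C_out, C_in, kH, kW) and input (C_in, H, W) as in the post
kernel = np.array([[[[1, 0, -1],
                     [0, 0, 0],
                     [-2, 0, 1]]]], dtype=float)
x = np.array([[[1, 2, 3, 4, 5],
               [6, 7, 8, 9, 1],
               [2, 3, 4, 5, 6],
               [7, 8, 9, 1, 2],
               [3, 4, 5, 6, 7]]], dtype=float)

out = conv2d_forward(kernel, x)
print(out)
```

With the kernel and input from this post, the sketch reproduces the 3×3 matrix printed by `nn.Conv2d`.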

My code:

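```python
import numpy as np
import scipy.signal  # import the submodule explicitly; older SciPy versions do not load it on `import scipy`
import torch
import torch.nn as nn

# 3x3 kernel with shape (out_channels, in_channels, kH, kW) = (1, 1, 3, 3)
kernel = torch.from_numpy(
    np.array(
        [[[[ 1, 0, -1],
           [ 0, 0,  0],
           [-2, 0,  1]]]]
    )
).float()

# Unbatched 5x5 input with shape (channels, H, W) = (1, 5, 5)
input_data = torch.from_numpy(
    np.array(
        [[[1, 2, 3, 4, 5],
          [6, 7, 8, 9, 1],
          [2, 3, 4, 5, 6],
          [7, 8, 9, 1, 2],
          [3, 4, 5, 6, 7]]]
    )
).float()

conv2d = nn.Conv2d(
    in_channels=1,
    out_channels=1,
    kernel_size=3,
    padding=0,
    stride=1,
    padding_mode="zeros",
    bias=False,
)
# Replace the random initial weights with the fixed kernel above
conv2d.weight = nn.Parameter(kernel)

print(conv2d(input_data))

# SciPy reference: input as first argument, kernel as second
print(scipy.signal.correlate2d(
    input_data.squeeze(0),
    kernel.squeeze(0).squeeze(0),
    mode="valid",
))
```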

Results:

```
tensor([[[ -2.,  -3.,  -4.],
         [ -7., -17.,  -9.],
         [ -3.,  -4.,  -5.]]], grad_fn=<SqueezeBackward1>)

[[ -2.  -3.  -4.]
 [ -7. -17.  -9.]
 [ -3.  -4.  -5.]]
```

Expected result:

```python
print(scipy.signal.correlate2d(
    kernel.squeeze(0).squeeze(0),
    input_data.squeeze(0),
    mode="valid",
))
```

```
array([[ -5.,  -4.,  -3.],
       [ -9., -17.,  -7.],
       [ -4.,  -3.,  -2.]], dtype=float32)
```
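The expected matrix is the printed result rotated by 180° (both axes flipped). Using only the numbers shown in this post, a quick NumPy check of that relationship:

```python
import numpy as np

# Output printed by nn.Conv2d and by correlate2d(input, kernel)
torch_out = np.array([[-2., -3., -4.],
                      [-7., -17., -9.],
                      [-3., -4., -5.]])

# Output of correlate2d(kernel, input), listed as the expected result
swapped_out = np.array([[-5., -4., -3.],
                        [-9., -17., -7.],
                        [-4., -3., -2.]])

# Rotating by 180 degrees is the same as reversing both axes
print(np.array_equal(np.rot90(torch_out, 2), swapped_out))  # True
print(np.array_equal(torch_out[::-1, ::-1], swapped_out))   # True
```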